Is Gate-Level Simulation Still Required Nowadays?

A colleague recently asked me: Has anything changed? Do design teams tape out nowadays without GLS (Gate-Level Simulation)? And if so, does their silicon actually work?

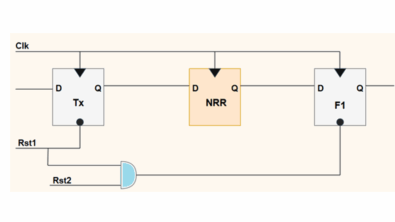

In his day (and mine), teams prepared for GLS in three phases. First, they simulated the hierarchical gate-level netlist to weed out X-propagation issues. Next, they ran a full chip-level gate simulation (unit delay) to come out of reset, exercise all I/Os, and weed out any remaining X’s. Finally, they ran a simulation with SDF back-annotation on the final, clocktree-inserted netlist.
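To make the X-propagation issue concrete, here is a minimal Python sketch of 0/1/X logic (Z omitted). It is a toy model, not how any simulator is built. It evaluates the same 2:1 mux in two ways. As an RTL-style `if (sel)` statement, it treats an X select as false and silently takes the else branch (X-optimism). As an AND/OR/inverter gate netlist, it outputs X even when both data inputs are 1 and real hardware could only produce 1 (X-pessimism). The function names are hypothetical.

```python
# Toy 3-state logic: 0, 1 and unknown X (Z is omitted for brevity).
X = "X"

def and2(a, b):
    # A controlling 0 on either input forces 0, even if the other input is X.
    if a == 0 or b == 0:
        return 0
    if a == 1 and b == 1:
        return 1
    return X

def or2(a, b):
    # A controlling 1 on either input forces 1, even if the other input is X.
    if a == 1 or b == 1:
        return 1
    if a == 0 and b == 0:
        return 0
    return X

def inv(a):
    return X if a == X else 1 - a

def mux_gates(sel, a, b):
    """2:1 mux as a gate netlist: y = (a & ~sel) | (b & sel)."""
    return or2(and2(a, inv(sel)), and2(b, sel))

def mux_rtl_if(sel, a, b):
    """RTL-style 'if (sel) y = b; else y = a;'. An X select is treated
    as false, so the else branch is taken (X-optimism)."""
    return b if sel == 1 else a

# Both data inputs are 1, so real hardware outputs 1 whatever sel is,
# yet the gate-level model reports X.
print(mux_gates(X, 1, 1))   # prints X
# Here the true output is unknown, but the RTL model confidently picks a.
print(mux_rtl_if(X, 1, 0))  # prints 1
```

Discrepancies like these are one reason RTL and gate-level results can diverge around reset, and why sorting them out early pays off.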

After much discussion about the actual value of GLS, and about whether we could spare ourselves the pain by removing it from our design flows, I reached a firm conclusion:

Yes! Gate-level simulation is still required, at subsystem level and full chip level.

Its usage has been minimized over the years. First, in the 1990s, LEC (Logical Equivalence Checking) and STA (Static Timing Analysis) were added to the RTL-to-GDSII design flow. Second, over the last decade, static analysis tools like Questa® AutoCheck have been used to catch common failure modes that were traditionally found during GLS, such as X-propagation, clock-domain-crossing errors, power-management errors, and problems with ATPG and BIST functionality.

So, in principle, no setup/hold or CDC issues should remain by this stage. However, there are a number of reasons why I would always retain GLS:

- Financial prudence. You tape out to the foundry at your own risk, and GLS is the closest representation of the printed design on which you can do final due diligence before you write that check. Are you willing to risk millions by skipping GLS?

- It is your last chance to find packaging issues that may be masked by inaccurate behavioral models higher up the flow, or by erroneous STA resulting from bad false-path or multicycle-path definitions. Simple packaging errors, such as an inverted enable signal, can also go undetected when the models are wrong.

- It verifies the actual bring-up sequence that your first silicon will go through when it hits the production tester after fabrication. By running a final, accurate bring-up test with all Design-for-Verification modes turned off, teams have found bugs that would otherwise have completely bricked the device during the tester sequence of first power-up, scan test, and then blowing configuration and security fuses.

- In block-level verification, you may be using a datapath compilation flow for your DSP core that rearranges pipeline stages, which challenges conventional LEC tools. How else can you be sure the result is correct?

- The final stages of processing can transform your design in unexpected ways that LEC and STA may or may not catch, e.g. during scan-chain insertion, clocktree insertion, or power-island retention/translation cell insertion. You should not see any new setup/hold problems if extraction and STA do their job, but what if there are gross errors affecting clock enables, tool errors, or data-processing errors? First silicon with stuck clocks is no fun. Again, why take the risk? All that is required is one simulation of the bare-metal design: coming up from power-on, wiggling every pad at least once, and exercising every test mode at least once.

- When you make design changes at the physical netlist level, such as last-minute ECOs (Engineering Change Orders), metal-layer fixes, or spare-gate hookups, you can’t go back to an RTL representation to validate them. Gates are all you have.

- You may need to simulate the production test vectors and burn-in test vectors for your first silicon, across process corners. Your foundry may insist on this.

- Finally, you need to sleep at night while your chip is in the fab!
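The "wiggle every pad at least once" criterion in the list above is easy to check mechanically once the simulation has run. The Python sketch below shows one hypothetical way to do it. It assumes the pad value changes have already been extracted from a waveform dump into `(time, signal, value)` tuples, and the pad names (`pad_clk`, `pad_rst_n`, `pad_gpio0`) are illustrative. It is not a feature of any particular simulator.

```python
from collections import defaultdict

def pad_toggle_report(changes, pads):
    """Return the pads that did NOT toggle both 0->1 and 1->0.

    changes: (time, signal, value) tuples, ordered by time, with value one
             of "0", "1", "x", "z". Assumed to be pre-extracted from a
             waveform dump; parsing is not shown.
    pads:    set of top-level pad signal names to check.
    """
    last = {}                 # most recent value seen per pad
    rose = defaultdict(bool)  # saw a clean 0 -> 1 edge
    fell = defaultdict(bool)  # saw a clean 1 -> 0 edge
    for _time, sig, val in changes:
        if sig not in pads:
            continue
        prev = last.get(sig)
        # Conservative: edges through x/z (e.g. 0 -> x -> 1) do not count.
        if prev == "0" and val == "1":
            rose[sig] = True
        elif prev == "1" and val == "0":
            fell[sig] = True
        last[sig] = val
    return sorted(p for p in pads if not (rose[p] and fell[p]))


# Hypothetical trace: pad_rst_n only rises, pad_gpio0 leaves X but never falls.
trace = [
    (0, "pad_clk", "0"), (0, "pad_rst_n", "0"), (0, "pad_gpio0", "x"),
    (5, "pad_clk", "1"), (10, "pad_clk", "0"),
    (20, "pad_rst_n", "1"), (30, "pad_gpio0", "1"),
]
print(pad_toggle_report(trace, {"pad_clk", "pad_rst_n", "pad_gpio0"}))
# prints ['pad_gpio0', 'pad_rst_n']
```

The same pattern extends to test modes: record which mode-select values were entered during the run and report any that never appeared.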

There are still misconceptions:

- There is no need to rerun large numbers of RTL regression tests at gate level. Don’t do that: those tests take an age to run. Instead, identify the tiny percentage of your regression suite that genuinely needs to rerun on GLS, and make it count.

- Don’t wait until tapeout week to start GLS. Prepare for it early in your flow by doing the three preparation steps described above as soon as practical, so that all X-pessimism issues are sorted out well before crunch time.

- The biggest misconception of all: “designs today are too big to simulate.” Avoid that kind of scaremongering. Buy a faster computer with more memory. Spend the right amount of money to offset the risk you are about to take on when you print a 20nm mask set.
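One way to pick the tiny percentage of the regression suite mentioned above is to treat it as a coverage problem: choose a small set of tests that together hit every GLS goal (power-on reset, each pad, each test mode). The Python sketch below uses a greedy set-cover heuristic, which is simple but not guaranteed to find the smallest possible subset. The test names and goal labels are hypothetical. In practice, the per-test goal sets would come from your RTL coverage data.

```python
def select_gls_tests(coverage, goals):
    """Greedy set cover: pick RTL regression tests to rerun at gate level.

    coverage: dict mapping test name -> set of goals it exercises
              (e.g. pads toggled, test modes entered, reset paths).
    goals:    set of goals the GLS run must hit at least once.
    Returns (chosen tests in pick order, set of goals no test covers).
    """
    remaining = set(goals)
    chosen = []
    while remaining:
        # Pick the test covering the most still-uncovered goals; iterating
        # in sorted order makes ties resolve to the alphabetically first test.
        best = max(sorted(coverage),
                   key=lambda t: len(coverage[t] & remaining),
                   default=None)
        gain = coverage[best] & remaining if best is not None else set()
        if not gain:
            break  # nothing left in the suite covers the remaining goals
        chosen.append(best)
        remaining -= gain
    return chosen, remaining


# Hypothetical regression suite and GLS goals.
regression = {
    "smoke_boot": {"por", "pad_clk", "pad_rst_n"},
    "uart_loop":  {"pad_uart_tx", "pad_uart_rx", "pad_clk"},
    "scan_shift": {"mode_scan", "pad_clk"},
    "mbist_run":  {"mode_mbist"},
    "dma_stress": {"pad_clk"},
}
goals = {"por", "pad_clk", "pad_rst_n", "pad_uart_tx", "pad_uart_rx",
         "mode_scan", "mode_mbist", "mode_jtag"}
chosen, missing = select_gls_tests(regression, goals)
print(chosen)   # prints ['smoke_boot', 'uart_loop', 'mbist_run', 'scan_shift']
print(missing)  # prints {'mode_jtag'}
```

Goals that come back uncovered (here `mode_jtag`) flag a gap. A dedicated test has to be written for them before the GLS run can be said to exercise every test mode.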

Yes, it is possible to tape out silicon that works without GLS. But no, you should not consider taking that risk. And no, there is no justification for viewing GLS as “old school” and just hoping it will go away.

Now, the above is just one opinion, reflecting recent design/verification work I have done with major semiconductor companies. I anticipate that large designs will become harder and harder to simulate, and that we may need to find solutions for gate-level signoff using an emulator. I also found some interesting recent papers, resources, and opinions. I don’t necessarily agree with all of the content, but it makes for interesting reading:

- A. Chandran, R. Vincent, “Gatelevel Simulations – Continuing Value in Functional Simulation”, DVCon India proceedings, September 25, 2014.

- G. Jalan, “Gate Level Simulations: A Necessary Evil”, Siddhakarana blog, June 20, 2011.

- R. Goering, “Why Gate-Level Verification Is Increasing”, Cadence Community blogs, January 16, 2013.

- A. Khandelwal, A. Gaur, D. Mahajan, “Gate level simulations: verification flow and challenges”, EDN Network, March 5, 2014.

I’d be interested to know what your company does differently nowadays. Do you sleep at night?

If you are attending DVCon next week, check out some of Mentor’s many presentations and events as described by Harry Foster, and please come and find me at the Mentor Graphics booth (801). I would be happy to hear about your challenges in design, verification, UVM, and especially debug.

Thanks for reading,

Gordon
