The Many Flavors of Equivalence Checking: Part 6, FPGA-focused Equivalency Checking Flows

With last year’s acquisition of OneSpin, we now have a valuable addition to the solutions I described in The Many Flavors of Equivalence Checking series of posts: FPGA-focused “EC” flows.

But before I dive into the details, I grant that given the maturity of FPGA implementation tools, and the inherent reconfigurability of FPGA devices themselves, it is very tempting to think that you can forgo some of the final implementation verification steps that are required for ASICs. However, while the path from Register Transfer Level (RTL) code to final netlist is well travelled, there is a small but non-zero risk some of the following bad things could happen:

- The synthesis tool gets over aggressive with optimizations (or wasn’t configured properly); or it adds too many buffers and other such logic

- Design styles (or clever “hacks”) that work in simulation at the RTL level get synthesized into unworkable gate-level logic

- Malicious Trojan logic surreptitiously inserted via a supply chain attack

Plus: many of today’s FPGAs are now the size of the largest ASICs from just a few years ago. When you add-in the myriad of available, large-scale IP options, even medium-scale FPGAs have truly earned the right to be called SoCs. The point is: as FPGAs become bigger and house more critical system functionality, the age-old practice of debugging FPGA issues in a lab with a logic analyzer is becoming an unaffordable sink of man-hours and budget. Any added verification that can reduce lab time (and/or gate level simulation) saves money and schedule.

The bottom line: automated, exhaustive verification of the functional equivalence of the RTL code to synthesized netlists, and the final placed & routed FPGA design implementation, is mandatory.

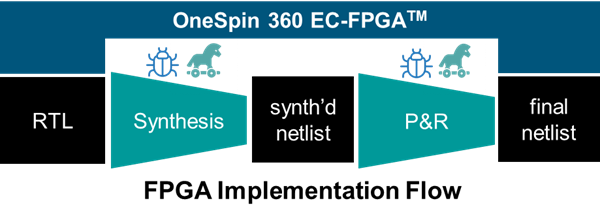

Enter the OneSpin EC-FPGA flow:

Even if your RTL is functionally perfect, synthesis and P&R over-constraining and optimizations can inadvertently slip-in errors that are very hard to detect in the lab. Starting on the left-hand-side of Figure 1, consider the following issues that can appear in the initial RTL to synthesize netlist phase:

- Coincident Read Discrepancies

- Wrong FSM Re-Encoding

- Undriven or Unconnected Wires

- Incorrectly Optimized Pipelines

And on the right-hand-side of Figure 1, common implementation issues include:

- Incorrect block RAM parameter settings

- Clock gating for low power issues

- P&R connection Issues

- Unspecified added logic (from a team member trying to hack something into shape; or a Trojan)

The above lists aren’t academic speculation: over the years EC-FPGA analysis has encountered all these issues on real-world FPGA projects.

So how does this flow work in-practice?

RTL Synthesis Validation

Similar to the evolution of ASIC synthesis validation, FPGA users commonly compare the “golden” Verilog or VHDL behavior vs. the synthesized gate-level circuitry by re-running the test bench created for the HDL DUT on the gate-level implementation; or if the target circuit board is already populated with the target FPGA, just download the bit stream to the device and start running it!

Unfortunately, as they say in the mathematics world, successful passage of all the tests – either a simulate testbench or lab smoke tests — is only a “necessary but not sufficient” criterion as such testing is just not exhaustive.

Consequently, between the common problems listed above, and the success of formal-based ASIC equivalence checking, it was natural to leverage the same basic class of static, mathematical analysis techniques – i.e. automated formal-based equivalence checking – to exhaustively compare the behavior of the RTL and gate-level FPGA design descriptions for all inputs and all time, and do this significantly faster than brute-force comparison of simulation results.

Supporting Optimizations

One of the “Catch-22s” of FPGA design is the imperative of taking advantage of any-and-all optimizations that your synthesis tool and the device structure itself can give you; but without inadvertently crating the problems listed above. Rephrasing: you DO need to enable aggressive optimizations, yet simultaneously reduce the risk of the errors listed above from appearing in the production device.

This is where the FPGA-specific focus of EC-FPGA is apparent: the tool supports both combinational and sequential optimizations that are common in today’s FPGA synthesis process, such as:

- Constants register removal

- Register duplication/merging

- Pipelining Retiming

- FSM re-encoding

- Distributed block RAM/ROM

- RAM implementation of SRLs

The “trick” is follow-along with the DUT transformations, and break-up the flow up into two stages: first, verify the RTL to synthesized, unimplemented netlist; then verify the netlist the final placed-and-routed netlist.

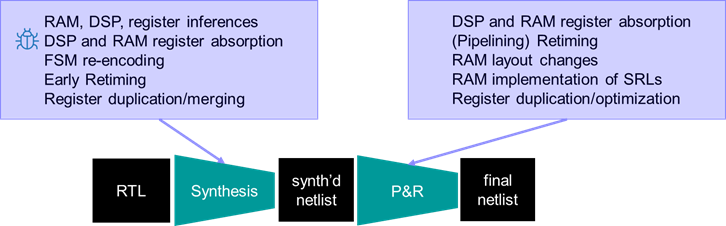

Figure 2: Breaking up the analysis into several phases helps quickly debug issues unique to each phase

Common transformations for synthesis are:

- RAM, DSP, register inferences

- DSP and RAM register absorption

- FSM re-encoding

- Early Retiming

- Register duplication/merging

And for place and route there are often additional transformations such as:

- RAM layout changes

- RAM implementation of Shift Register LUTs

- Register duplication/optimization

Again, this two-stage approach is better than comparing the RTL to the final netlist because it would be much more difficult to pinpoint the defect, debug the root cause, and understand which step inserted the defect. Indeed, for when you need to be ultra-aggressive about optimizing the design as much as possible, some of our FPGA partners recommend re-running the EC-FPGA tool in SIX cycles: RTL to elaborated netlist, elaborated to synthesized netlist, synthesized to floor planned netlist, floor plan to specific placement netlist, placed to placed&routed netlist, then routed to re-timed final netlist.

Summary

Bugs introduced by FPGA synthesis and Place and Route – or malicious Trojan logic by bad actors –

can be very hard to detect – not to mention damaging – if they escape into the final device. Hence, as FPGAs become bigger, the same exhaustive, formal-based RTL-to-netlist equivalence verification flow used for years in the ASIC world is now essential for FPGA projects. Fortunately, FPGA implementation verification using OneSpin EC-FPGA can accelerate your design workflow, reduce lab testing, enable aggressive optimizations, while dramatically reducing risk.

Joe Hupcey III

Siemens EDA