Part 4: The 2016 Wilson Research Group Functional Verification Study

FPGA Verification Effectiveness Trends

This blog continues a series of blogs on the 2016 Wilson Research Group Functional Verification Study (click here). In my previous blog (click here), I focused on the amount of effort spent on FPGA verification, and we have seen throughout the series that this effort is increasing. In this blog I focus on the effectiveness of verification in terms of FPGA project schedules and bug escapes.

FPGA Schedules

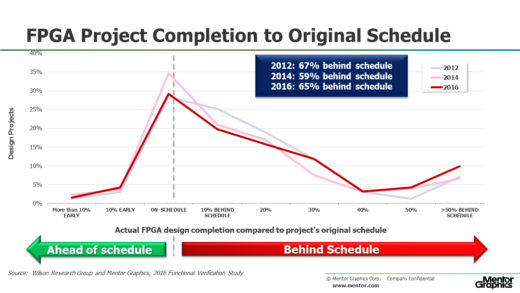

Figure 1 presents the design completion time compared to the project’s original schedule. What surprised us in the 2014 findings was an improvement in the number of FPGA projects meeting schedule, compared to both 2012 and 2016. This could have been an anomaly or sampling error associated with this question in 2014, as I discussed when describing confidence intervals in the prologue. However, we saw similar results when looking only at ASIC/IC designs in 2016 (which will be published in a future blog). At any rate, it does raise an interesting question that might be worth investigating.

Figure 1. FPGA design completion time compared to the project’s original schedule
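To make the sampling-error caveat concrete, here is a minimal sketch of how a margin of error for a survey percentage could be estimated. It uses the standard normal approximation at a 95 percent confidence level. The function names, the sample sizes, and the percentages in the usage lines are all hypothetical and chosen only for illustration; they are not figures from the study.

```python
import math


def margin_of_error(proportion: float, sample_size: int, z: float = 1.96) -> float:
    """Normal-approximation margin of error for a survey proportion.

    z = 1.96 corresponds to a 95 percent confidence level.
    """
    if not 0.0 <= proportion <= 1.0:
        raise ValueError("proportion must be between 0 and 1")
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return z * math.sqrt(proportion * (1.0 - proportion) / sample_size)


def intervals_overlap(p1: float, n1: int, p2: float, n2: int) -> bool:
    """Return True if the two 95 percent confidence intervals overlap."""
    m1 = margin_of_error(p1, n1)
    m2 = margin_of_error(p2, n2)
    return (p1 - m1) <= (p2 + m2) and (p2 - m2) <= (p1 + m1)


if __name__ == "__main__":
    # Hypothetical values only: two survey years, 300 respondents each.
    year_a, year_b, respondents = 0.35, 0.30, 300
    print(f"margin of error, year A: {margin_of_error(year_a, respondents):.3f}")
    print(f"margin of error, year B: {margin_of_error(year_b, respondents):.3f}")
    print(f"intervals overlap: {intervals_overlap(year_a, respondents, year_b, respondents)}")
```

Overlapping intervals are only a rough, conservative hint that a year-to-year difference may be within sampling noise; this is not a formal significance test.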

FPGA Lab Iterations

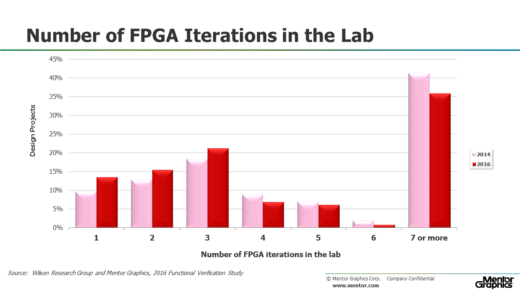

ASIC/IC projects track the number of required spins that occur prior to market production. In fact, this can be a useful metric for determining the overall verification effectiveness of an ASIC/IC project. Unfortunately, we lack such a metric for FPGA projects. For the 2014 study, we decided to ask about the average number of lab iterations required before the design went into production, and we asked the same question again in 2016. The results are shown in Figure 2.

Figure 2. Number of FPGA iterations in the lab

FPGA Non-Trivial Bug Escapes to Production

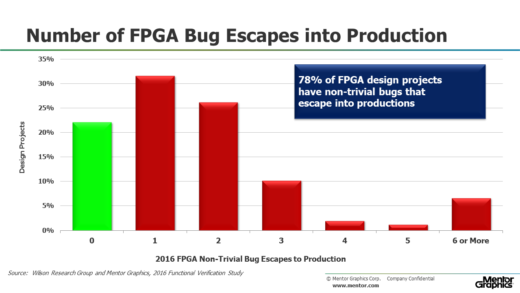

I have never been convinced that the number of lab iterations is analogous to the number of ASIC/IC respins as a verification effectiveness metric. So for our 2016 study we decided to also ask about the number of non-trivial bugs that escape into production and are found in the field. The results were surprising and are presented in Figure 3. Only 22 percent of today’s FPGA design projects are able to produce designs without a non-trivial bug escaping into the final product. For many market segments (such as safety-critical designs), the cost of upgrading the FPGA in the field can be huge, since this requires a complete revalidation of the system.

Figure 3. Number of non-trivial FPGA bug escapes to production (no trend data available)

FPGA Bug Classification

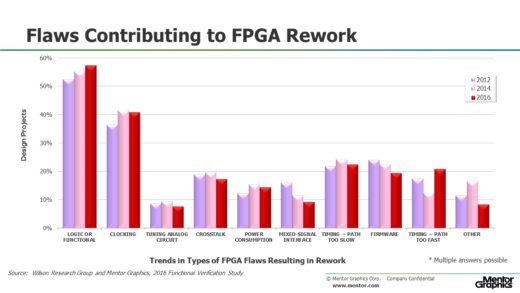

For the 2016 study, we asked the FPGA project participants to identify the types of flaws that were contributing to rework. In Figure 4, I show the two leading causes of rework, which are logical and functional bugs, as well as clocking bugs. The data seems to suggest that these issues are growing, perhaps due to the design of larger and more complex FPGAs.

Figure 4. Types of flaws resulting in FPGA rework

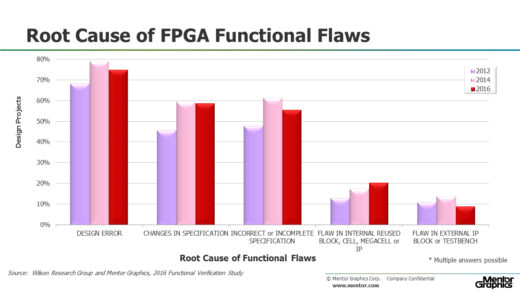

In Figure 5, I show trends in the main contributing factors leading to logic and functional flaws, and you can see that design errors are the main cause of functional flaws. But note that a significant number of flaws are related to some aspect of the specification, such as changes in the specification or incorrect or incomplete specifications. Problems associated with the specification process are a common theme I often hear when visiting FPGA customers.

Figure 5. Root cause of FPGA functional flaws

In my next blog (click here), I plan on presenting the findings from our study for FPGA verification technology adoption trends.

Quick links to the 2016 Wilson Research Group Study results

- Prologue: The 2016 Wilson Research Group Functional Verification Study

- Understanding and Minimizing Study Bias (2016 Study)

- Part 1 – FPGA Design Trends

- Part 2 – FPGA Verification Effort Trends

- Part 3 – FPGA Verification Effort Trends (Continued)

- Part 4 – FPGA Verification Effectiveness Trends

- Part 5 – FPGA Verification Technology Adoption Trends

- Part 6 – FPGA Verification Language and Library Adoption Trends

- Part 7 – ASIC/IC Design Trends

- Part 8 – ASIC/IC Resource Trends

- Part 9 – ASIC/IC Verification Technology Adoption Trends

- Part 10 – ASIC/IC Language and Library Adoption Trends

- Part 11 – ASIC/IC Power Management Trends

- Part 12 – ASIC/IC Verification Results Trends

- Conclusion: The 2016 Wilson Research Group Functional Verification Study