Siemens, NVIDIA and Siemens Energy work together to redefine turbomachinery development with the speed of GPU-accelerated CFD

Gas turbines will be indispensable in the world’s pursuit of decarbonization while simultaneously supporting a growing demand for flexible, dispatchable electricity. The threat of climate change looms, and without action, man-made ecological and environmental harm is a certainty. This comes at a time when a combination of factors such as the data center boom, growing adoption of electric vehicles, and electrification of building heat puts more intermittent loading on the grid than ever before. At face value these two demands are in direct conflict. However, Siemens Energy – powered by Siemens Xcelerator and NVIDIA solutions – is working to redefine how turbomachinery is designed using the speed of GPU-accelerated computational fluid dynamics (CFD) simulation. Accelerated computing enables order-of-magnitude decreases in design timelines, from weeks to days and from days to hours.

By modifying traditional gas turbines to burn hydrogen as a fuel, through co-firing or complete displacement of natural gas such as in the case of the SGT-9000HL, gas turbines can provide a reliable low-carbon or carbon-free power generation solution. The need for these modifications is urgent, and GPU-accelerated CFD, using Simcenter STAR-CCM+ powered by NVIDIA accelerated computing and software, plays a major role in this upscaling. As such, Siemens Energy has invested heavily in digital engineering to help solve their most difficult fluid dynamics challenges. In tandem, they are investing in NVIDIA accelerated computing to power their novel digital engineering needs. Siemens Energy engineers and leadership expect a multiple-fold return on investment from this “all in” digitization strategy.

Dan Mekker, a Senior Advisor at Siemens Energy, stresses the importance of NVIDIA accelerated computing workflows to their competitive advantage:

GPUs enable Knowledge Based Engineering, where engineers complete engineering activities faster, leverage knowhow and experiences of the entire enterprise, explore product solutions not practical with classic engineering.

The challenging physics of turbomachinery

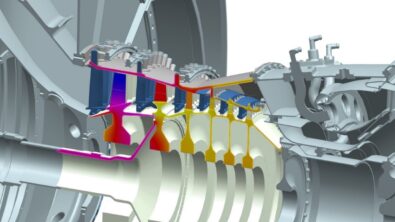

Simcenter STAR-CCM+ is an enabling technology for Siemens Energy engineers at all stations throughout the gas turbine. Despite their differences, the compressor, combustor and turbine are united in that they are governed by three-dimensional, unsteady, compressible, turbulent fluid flows and benefit from NVIDIA GPU-accelerated CFD multiphysics simulation. The compressor is challenged with turbulent shear layers, tip leakage vortices, and shock-boundary layer interactions, all while multi-stage interaction and stall loom due to high pressure gain. In the combustor, fuel and air mix and react violently to generate heat, influenced by how the fuel atomizes, how the air/fuel mixture swirls, and how stable the flame is. Extreme thermal conditions here can exceed 3,500°F, where heat transfer to the structural assemblies results in the need for exotic metals just to keep from melting. In the turbine, these same high-pressure, high-temperature combustion gases, which have no other place to go, impact the blades and vanes. Energy extraction from these gases is optimal if the blades remain undamaged, but repetitive thermal stress and a rotation speed typically in excess of 3,000 rpm make that challenging. As such, complex effusion cooling channels are integrated, forming a protective film of relatively cold air over the surface of the blade. Extreme thermal gradients are then a byproduct.

Developing turbomachinery is traditionally an experiment-heavy, and therefore expensive, process. Due to the complexity of each individual station, a unique team of experts is generally responsible for a single station, communicating with the other teams at the transition point between stations. This is not ideal, as station-to-station interaction is not captured and complexity compounds as stage-to-stage interactions occur, so engineers iterate through design-test-analyze cycles on real hardware to validate performance, discover unforeseen issues, and calibrate experimental data before finalizing a production design. This is not optimal for time to market, competitive advantage, or an accelerating need for carbon-neutral solutions.

Digital solutions have traditionally mirrored this siloed approach; however, computing advances are allowing engineers to combine stations and investigate station-to-station interaction prior to experimentation, reducing design iteration cycles. With sophisticated and robust automatic meshing capabilities, a complete set of advanced physics models, and powerful, parallelized post‐processing tools, Simcenter STAR-CCM+ has a cascade effect leading to more efficient fluid flow investigation, component strength, and durability calculations during concurrent multi-disciplinary analysis. And while a single analysis is useful, exploring the design space is critical as well.

Dan Mekker on the importance of Siemens digital tools workflows:

Siemens Energy works collaboratively in joint development of advanced technologies, delivering enabling capabilities in timeframes desired to provide innovative customer products. Simcenter STAR-CCM+ workflows are crucial given the necessity of higher order modeling of the physics.

Despite their differences in function, the compressor, combustor and turbine are united as beneficiaries of the flourishing efficiency, speed and accuracy of the Digital Twin. As noted before, some of the most complex flows in all of applied aerodynamics occur in these processes and the so-called “shift-left” in high-fidelity computational modeling is positively impacting the cost and time effectiveness of design cycles. GPU-accelerated CFD enables the shift-left approach by providing the computational speed necessary for high-fidelity modeling early in the design process.

The Siemens Digital Twin, which goes beyond industry leading CFD GPU performance, comprises a litany of digital solutions including templated automation, design space exploration with embedded AI/ML solutions, end-to-end CAE-CAD-CAM chains, whole engine modeling, and an excellent customer support structure to boot.

The importance of large eddy simulation for turbomachinery

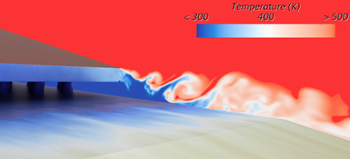

Large‐Eddy Simulation (LES) CFD approaches are known to be difficult to execute in practice because they directly resolve energetic, turbulent eddies in a flow field on very small scales. This means the engineer must test the extent of their computational resources, which are oftentimes difficult to procure in the first place, with expensive computational mesh sizes and time‐step lengths to capture the flow phenomena critical to increasing design confidence prior to cutting metal for prototyping. LES represents a step up the fidelity staircase compared to Reynolds-averaged Navier-Stokes (RANS) and does not miss or oversimplify important unsteady turbulent structures critical to turbomachinery design, especially near walls where time-dependent physics like periodic vortex shedding or unsteady shock-boundary layer interactions are present. GPU-accelerated CFD makes LES practical by dramatically reducing computational time while maintaining accuracy, addressing the computational resource challenges that have historically limited its adoption.
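To make the link between mesh size and time-step length concrete, here is a minimal sketch, not taken from the Siemens Energy work, based on the Courant (CFL) number, one common way to relate cell size and time step. The function names, the CFL target, and every numeric value below are hypothetical and chosen only for illustration; the article does not state which time-step criterion was used.

```python
import math


def cfl_time_step(cell_size_m: float, velocity_m_s: float, cfl: float = 1.0) -> float:
    """Time step (s) that keeps the Courant number at `cfl` for one cell size."""
    if cell_size_m <= 0 or velocity_m_s <= 0 or cfl <= 0:
        raise ValueError("cell size, velocity and CFL target must be positive")
    return cfl * cell_size_m / velocity_m_s


def steps_required(physical_time_s: float, dt_s: float) -> int:
    """Number of time steps needed to cover a span of physical time."""
    return math.ceil(physical_time_s / dt_s)


# Hypothetical values, for illustration only.
velocity = 200.0        # m/s, assumed local flow speed
simulated_time = 5e-3   # s, assumed physical time to simulate

for cell_size in (2e-4, 1e-4, 5e-5):  # m, assumed smallest cell size
    dt = cfl_time_step(cell_size, velocity)
    n_steps = steps_required(simulated_time, dt)
    print(f"cell {cell_size:.0e} m -> dt {dt:.1e} s, about {n_steps} steps")
```

Under these assumptions, halving the cell size roughly doubles the number of time steps, on top of the growth in cell count itself, which is why resolved-eddy simulations strain compute budgets so quickly.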

One such example of the need to leverage LES thermal simulations is the design of trailing edges in gas turbine blades. These blades must be knife-edge thin, withstand incredibly high temperatures and rely on advanced engineering to ensure the integrity of the blade is not compromised. Simulating this is possible with the inclusion of the Segregated Energy model and Ideal Gas Equation of State in Simcenter STAR-CCM+. At the trailing edge, hot gas from the external gas path of the blade interacts with the relatively cooler internal blade flow, which often exits through a series of slots at the trailing edge. There is then mixing and impingement on the trailing edge of the blade. Capturing the correct mixing and impingement of these two flows typically requires higher fidelity than a standard RANS calculation. In a case like this, GPU-accelerated CFD provides a clear performance upgrade over traditional CPU computing. On a typical allocation, in this instance 3 Xeon Gold nodes (120 CPU cores), this problem would take close to a week to produce meaningful results. With GPU architecture, design iterations can realistically be turned around in less than a day.

Capturing detailed turbine blade heat transfer with LES

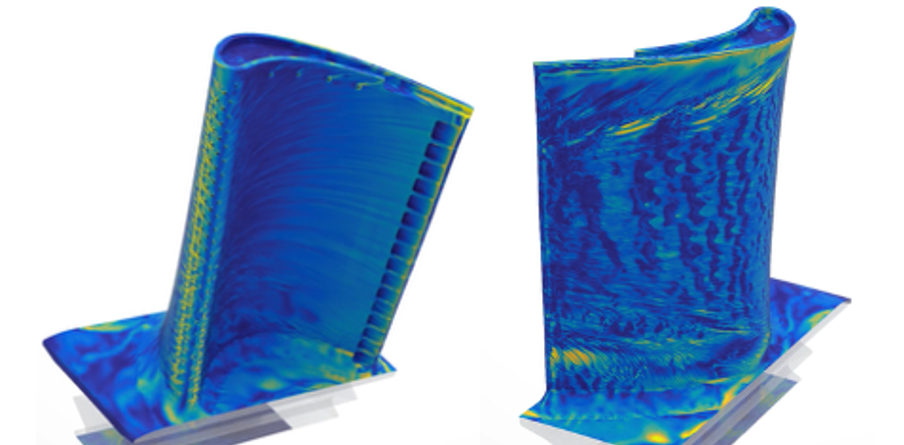

Siemens Energy uses Siemens Digital Industries Software and NVIDIA solutions to realize the benefits of GPU-accelerated CFD for high-fidelity simulation on a rotor blade. This section discusses the benefits of LES and its application to a common axial gas turbine blade with internal flow paths. It presents GPU-accelerated CFD simulations that investigate the effect of a thermal load applied to the blade, with conjugate heat transfer (CHT) film cooling physics included.

The geometry used for the studies immediately shows how Simcenter STAR-CCM+ is a leader in geometry complexity handling, with an internal design comprising a series of internal serpentine cooling channels, rib turbulators, pin fins, cooling slots, top cap channels, and film cooling holes. Capturing the detailed turbulent flow physics starts with a sufficient level of realism in the CAD model.

Similarly, the computational mesh is critical to resolving flow qualities at this scale. Simcenter STAR-CCM+ is known for its built-in polyhedral mesher, which performs particularly well when swirling flows arise, as in this case. Nominal LES meshes can balloon quickly, and oftentimes this mesh strategy allows for lower cell counts than other mesh topologies. In the case of the CHT simulation shown below, highly detailed flows were captured on a 69M element mesh; however, it is typical for turbine blade meshes to exceed 100M elements. Combustion cases are frequently an order of magnitude larger still. These mesh sizes and resultant computational requirements stress the importance of simulation speed.

Dan Mekker commented on how Siemens Energy is applying this on a real-world scale:

Higher order fidelity modeling of physics for turbine flows is beneficial for us since many of the design challenges here are beyond what you can capture with classic RANS and URANS CFD.

NVIDIA accelerated computing was critical in the success of these simulations, and will continue to be for Siemens Energy engineering workflows that require speed. The initial simulations were performed on non-state-of-the-art NVIDIA CPU clusters and produced excellent results, with comparatively long run times. In a mixed precision transient simulation on 120 CPUs, the engineer was seeking to run the simulation long enough to see ten flow-throughs, essentially the time it takes for a fluid particle to traverse the length of the chord ten times. The same turbine blade case was then run using GPU-accelerated CFD on a Blackwell B200 machine where just 2 cards were checked out. The same case, simulated for 10 flow-throughs, was completed, producing results identical to those found in the CPU runs. Comparing the two cases showed a 77% reduction in compute time from CPU to GPU. This speedup allowed Siemens Energy engineers to produce results at a faster clip, resulting in both more designs investigated and longer transients that greatly influence design choices related to the thermal properties of the blade.
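As a rough worked example of the two quantities in this paragraph, the sketch below converts the reported 77% reduction in compute time into an equivalent speedup factor and computes the physical time covered by ten flow-throughs. The function names are illustrative, and the chord length and bulk velocity are hypothetical placeholders, since the article does not give the actual blade dimensions or flow speed.

```python
def speedup_from_reduction(reduction: float) -> float:
    """Convert a fractional runtime reduction (0.77 = 77%) into a speedup factor."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in the range [0, 1)")
    return 1.0 / (1.0 - reduction)


def flow_through_time(chord_length_m: float, bulk_velocity_m_s: float) -> float:
    """Time (s) for a fluid particle to traverse the chord once."""
    if chord_length_m <= 0 or bulk_velocity_m_s <= 0:
        raise ValueError("chord length and velocity must be positive")
    return chord_length_m / bulk_velocity_m_s


# The 77% figure comes from the article; everything below it is assumed.
speedup = speedup_from_reduction(0.77)
print(f"77% less compute time is a speedup of about {speedup:.2f}x")

chord = 0.10       # m, hypothetical chord length
velocity = 200.0   # m/s, hypothetical bulk velocity
simulated_time = 10 * flow_through_time(chord, velocity)
print(f"ten flow-throughs cover {simulated_time * 1e3:.1f} ms of physical time")
```

A 77% reduction means the GPU run takes 23% of the CPU wall-clock time, which corresponds to a speedup of roughly 4.3x rather than 1.77x.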

The visualizations of these results were rendered directly in the new Simcenter STAR-CCM+ photorealistic Studio Scene using GPUs, enabling the engineering team to share design flow qualities more freely and concisely with the decision-makers in their organization. More designs, longer timeframes, and better analysis and visualization techniques helped the engineers and decision-makers better understand the behavior of the blades before any selections were made to begin expensive physical prototyping.

Conclusion – Accelerated computing transforms turbine blade engineering

The transformation to NVIDIA GPU-accelerated CFD processing for turbine blade LES workflows represents a leap forward in modern computational fluid dynamics. By leveraging the massively parallel architecture and high memory bandwidth of GPUs, along with accelerated computing software, researchers and engineers can achieve impactful reductions in runtimes (a 77% reduction in compute time in the case shown in this document) without sacrificing accuracy. Historical constraints in computational power are very quickly lessening, allowing for a new approach to digital engineering: where heavy down-selection of the design space was once prominent, we can now explore more designs in the same amount of time before cutting metal for prototyping. This enhanced accelerated computing performance not only makes previously infeasible large-scale simulations practical but also broadens the accessibility of LES where RANS was once considered the only option. As GPU technology continues to evolve, its role in enabling increasingly complex and accurate turbulence-resolving simulations will be essential to pushing the boundaries of high-fidelity computational fluid dynamics.

Want to learn more?

If you’d like to deepen your expertise in GPU-accelerated CFD, turbomachinery design approaches, or the Siemens portfolio of engineering solutions, check out the following content:

- Siemens Energy uses LES simulation to investigate transient combustion effects

- More with LES on GPUs – 3 high-fidelity CFD simulations that now run while you sleep

- When cold-to-hot transformation plays a role in turbomachinery

- Turbomachinery CFD simulation: art in motion

- Gas turbine simulations and butterflies: chasing beauty

- Weaving digital threads with machine learning for turbomachines
