Correct by Construction and Other Myths

By Joseph Davis, Mentor Graphics

Is “correct by construction” a myth?

At a certain level, we can view modern chip design methodology as based on the successive-approximation method—abstract the pieces with models, put the models together to get approximately what you want, push the design down to the next level of abstraction to see if it still works with a little more detail, then repeat until you have sufficient confidence that you can take the result to silicon. There are many, many layers of these models and abstractions, from simple transistor behavior all the way up to functional models of entire blocks. Many of these models and abstractions, and the software that embodies them, come from years of hard work by many people around the world. The objective of all that work was to find the perfect model that is accurate enough and fast enough to provide satisfactory results in a reasonable amount of time. This is all good engineering, and even some good science, too.

Of course, once the models are there, the engineer in all of us wants to automate the processes. If you can check your assumptions and iterate quickly, then why can’t you ensure that the result is perfect every time by just automatically drilling down through the levels of abstraction to ensure convergence? It shouldn’t be that hard; see, I can draw it on the board! Correct by construction—the perfect genesis of an idea made into reality. It is the siren song that lures us, tempts us, woos us, and ultimately…fails us. It fails us because, outside of toy problems, the world is just a messy place that’s a lot more complicated than we want to admit.

For instance, let’s start at the bottom of the food chain with something simple—constructing a manufacturable digital layout from a netlist. This should be simple, right? Layout is just the abstraction of the materials we put on the wafer, in nice, simple, rectilinear shapes. These shapes function as connections and make transistors. We have a pretty good model for the performance of a transistor over a wide range of conditions, and good models for resistance, capacitance, and inductance of the connections based on their configurations.

We’ve got good models, and a powerful computer, so it should be easy enough to generate the perfect layout to satisfy our needs…but this is where things start to get messy. The netlist by itself isn’t really enough to get the desired result. The netlist is just a description of part of the solution to the design challenge. The inputs, outputs, and environmental conditions must also be defined, as well as the boundary conditions under which the solution (the netlist) was derived. Oh, and we have to be able to make it in physical materials, so the layout has to follow all of the design rules of the manufacturing process—not just locally, but globally.

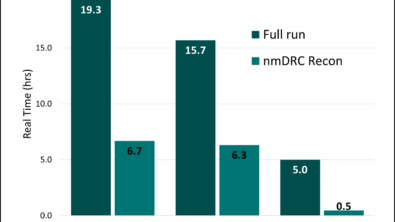

For engineers, with EDA tools and flexible human brains at their disposal, this is all in a day’s work, but work, nonetheless. For the mythical “correct by construction” tool, it’s a bit harder than that. The design tool has models built into it to assist the designer and provide one level of approximation. To be sufficiently fast for design work, these models have to be simple, so they can run very quickly. Simplification gives up detail, which means that, once the design work is done, designers must go down to the sign-off models, which most accurately represent the process, then go back to the design level and adjust the design based on the detailed-level results. And then repeat. Often, many times.
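The design/sign-off loop described above can be sketched as a toy program. Everything in it is an illustrative assumption rather than a real EDA model: `fast_delay` stands in for a quick design-time estimate, `signoff_delay` for a more detailed model with an extra term the fast model ignores, and the coefficients are invented. The point is only that the first design-level answer misses the target, and several rounds of sign-off feedback are needed before the two levels agree.

```python
def fast_delay(length_um: float) -> float:
    """Hypothetical design-time model: delay (ps) is linear in wire length."""
    return 0.10 * length_um


def signoff_delay(length_um: float) -> float:
    """Hypothetical sign-off model: adds a quadratic term the fast model omits."""
    return 0.10 * length_um + 0.0001 * length_um ** 2


def close_timing(target_ps: float, tolerance_ps: float = 0.05, max_iters: int = 50):
    """Iterate between the fast model and the sign-off model until they agree.

    Returns the chosen wire length and a history of
    (iteration, length, sign-off delay) tuples.
    """
    margin_ps = 0.0  # correction learned from sign-off feedback
    history = []
    for iteration in range(1, max_iters + 1):
        # Design step: longest wire whose fast-model delay equals the
        # target minus the current margin (fast_delay is 0.10 * length).
        length = (target_ps - margin_ps) / 0.10
        # Sign-off step: check the same design with the detailed model.
        actual_ps = signoff_delay(length)
        history.append((iteration, length, actual_ps))
        error_ps = actual_ps - target_ps
        if abs(error_ps) <= tolerance_ps:
            return length, history
        # Feed the detailed result back into the design-level budget.
        margin_ps += error_ps
    raise RuntimeError("did not converge within max_iters")


if __name__ == "__main__":
    length, history = close_timing(target_ps=20.0)
    for iteration, trial_length, delay in history:
        print(f"iter {iteration}: length={trial_length:.2f} um, sign-off delay={delay:.3f} ps")
```

Even in this toy, convergence depends on how far the two models diverge: with a larger quadratic coefficient, the same simple feedback rule can oscillate instead of settling, which is one reason the real loop takes engineering judgment rather than blind automation.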

This is where the other myth comes in: that all qualified sign-off tools are equal. Surely, all tools qualified by the foundry provide the same results, right? In theory, yes. In practice, not so much. The jobs we set for these tools are so complex that it is impossible to test all of the possible input scenarios during qualification. Even though the foundries invest a lot of time and effort to qualify the models and tools provided by EDA vendors, this qualification simply can’t be exhaustive, especially for a new process. This is why the foundries themselves rely on specific tools during process development, generally the tools that undergo the most extensive testing by the EDA supplier. Therefore, “qualified” means that a tool passes the foundry’s qualification suite, which is the best that it can do, but not that its results will be equal to those from all other such tools across all possible inputs.
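A small sketch can make the gap between “qualified” and “equivalent” concrete. The two checkers, the spacing rule, and the test cases below are all hypothetical and are not taken from any real tool or foundry deck. Both checkers agree on every case in a finite qualification suite, yet they disagree on an input the suite never exercised.

```python
import math

MIN_SPACE = 10  # hypothetical minimum-spacing rule, arbitrary units


def tool_a_ok(dx: float, dy: float) -> bool:
    """Hypothetical reference tool: uses Euclidean distance between shapes."""
    return math.hypot(dx, dy) >= MIN_SPACE


def tool_b_ok(dx: float, dy: float) -> bool:
    """Hypothetical second tool: uses the larger of the horizontal and vertical gaps."""
    return max(dx, dy) >= MIN_SPACE


# Hypothetical qualification suite: only edge-to-edge cases (one gap is zero),
# where the two distance definitions happen to coincide.
QUALIFICATION_SUITE = [(10, 0), (9, 0), (0, 12), (0, 5), (15, 0)]


def passes_qualification(tool, reference) -> bool:
    """A tool is 'qualified' if it matches the reference on every suite case."""
    return all(tool(dx, dy) == reference(dx, dy) for dx, dy in QUALIFICATION_SUITE)


if __name__ == "__main__":
    print("tool B qualified:", passes_qualification(tool_b_ok, tool_a_ok))
    # A corner-to-corner case that the suite does not cover.
    corner_case = (8, 8)
    print("tool A on corner case:", tool_a_ok(*corner_case))
    print("tool B on corner case:", tool_b_ok(*corner_case))
```

Adding the corner case to the suite would catch this particular difference, but the space of possible layouts is far too large for any suite to close every such gap.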

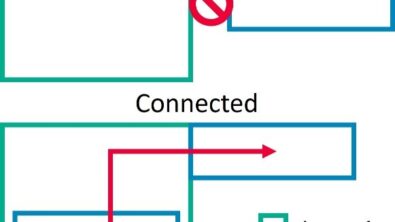

To get the results that most closely match those achieved by the foundry, especially for a newer technology, you really want to be using the same sign-off tools for your detailed modeling that the foundry uses to develop its qualification suite. The reality of today is that these sign-off tools come from different EDA vendors. Therefore, to get the matching sign-off tool for every model, you will have to use tools from different vendors and get them to work together. As every physical designer can tell you, it is hard enough to get tools from the same company to work together, let alone get tools from different vendors to work together smoothly. It can be done, and there are some robust interfaces between a variety of design and verification tools, but it is rare that the interface is smooth and productive.

This is one of the most practical impediments to “correct by construction”: it requires multiple tools from multiple vendors to work together in a way that is very difficult in today’s environment. The real impact of the lack of tight integration between EDA tools from different vendors goes far beyond killing a practical “correct by construction” approach; it affects the productivity of every chip designed today. Look at your design flows. How much “glue” did you have to put in to stitch the tools together? How much time could you have saved if the sign-off tool you needed was directly integrated with your design tool? Of course, the real problem isn’t that EDA companies can’t integrate third-party tools, but that they have no reason to do so. “Integration” doesn’t show up on a benchmark list, just as “usability” doesn’t. These attributes are hard to measure, and therefore don’t show up as evaluation criteria. However, they do have real value, and a real impact on the non-recurring engineering (NRE) costs for every chip. If design companies want to maximize productivity, features such as these must become part of the technical criteria used to inform their business decisions.

Author

Joseph Davis is Director of Product Marketing for Calibre interfaces at Mentor Graphics.

This article was originally published on www.eetimes.com