Going With The Flow – Overview

I recently had the opportunity to visit some of our customers in Europe to talk about their verification flow challenges for current and future projects. These were very interesting conversations, and I learned a lot about how design complexity has changed in recent years and about where the focus is today on verification economics and productivity. Both chip and systems design teams are still driven by a common theme – time to market – and so having a productive verification flow and predictable closure is ever more important.

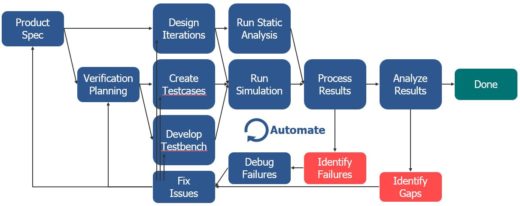

Our discussions always gravitated to the topic of the verification flow: how the various point tools and database formats integrate to form a repeatable regression pipeline. Such a flow is designed from the outset to meet the needs of the project, and is adaptable enough to add components, aspects and new data along the way as the project evolves from concept to architecture to RTL to gates to integration to tapeout. All of the orthogonal concerns (power, area, performance, reusability, manufacturability) are a given, but the flow needs to take them into account from the outset in order to ensure productivity. That means less time spent fighting against the tools, and more time spent running a well-oiled machine and doing the real work of designing, testing, debugging and assessing.

At Mentor we enable verification flows which are standards-based and interoperable; as fast as possible but flexible; easy to configure and set up but comprehensive in their support for automated regression, continuous integration and parallel execution – all the best software build practices of today. We have some industry-leading point tools, but equally importantly we ensure that they work together seamlessly as part of an automated flow. Our common denominators, such as the UCIS/UCDB standard for coverage data exchange, our common front end for simulation, static, formal and other tools, and our common debug solutions, help with both ease of use and productivity. How well integrated and automated is your verification flow?

Tune in to my recent webinar recording on Verification Academy for more discussion of the verification flow, best practices and the various iteration paths around the flow, with a particular look at speed of execution. We’ll also be talking more about this topic at DAC in a few weeks' time. Productivity is the goal, and so the flow, and the tools within it, have to be built for speed and tuned up so that you can get the most performance out of yourself and your team. How productive is your verification flow?

My next few blog entries will continue this topic, looking at particular aspects of the flow in more detail, starting with the inner regression loop and how to optimize it for maximum throughput. In the meantime, I would be interested to know where the bottlenecks and productivity gaps are in your own flow – feedback welcome.