When medical disinfection robots succeed: It’s more autonomy and more intelligence

System Simulation for medical device companies

Medical companies focus on specific devices such as ventilators, breathing bags, or electric syringes to optimize their sizing or adapt their initial designs to face new challenges. These challenges bring new scenarios (like several patients using the same system, …), and the System Simulation approach helps find interesting results very quickly: its efficient modeling and simulation process allows runs to execute in a few seconds.

Nowadays, the Medical Devices industry follows the general trend toward more autonomous systems. Medical disinfection robots are deployed to sterilize hospitals (waiting rooms, patient rooms), car parks, shopping malls, and other public places, with the strong advantage that humans are no longer exposed to the virus during disinfection.

So Siemens Simcenter Engineering and Consulting services started designing new dedicated Autonomous Mobile Robots (AMRs). They combine an autonomous, electrically driven platform with a disinfecting system on top. The disinfecting system typically uses liquid micro-spray nozzles that spray surfaces, or ultraviolet-C (UVC) lights that purify the air, with the goal of destroying all pathogenic micro-organisms in suspension. The disinfecting robots sanitize the rooms, and people can then use the rooms again for their regular activities.

Medical disinfection robots are autonomous mobile robots (AMRs)

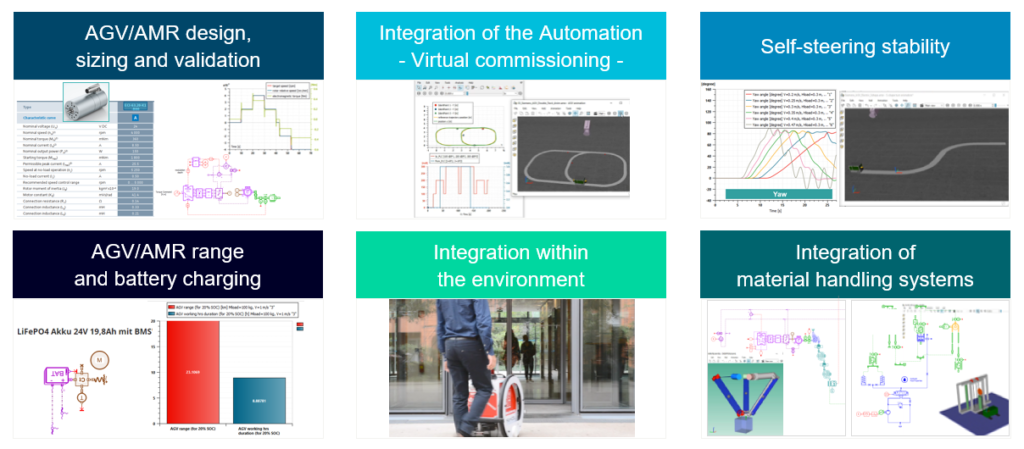

System Simulation can help shrink development time by reducing the number of expensive physical prototypes and test campaigns. One key factor in the product development cycle for medical disinfection robots is the ability to function and navigate autonomously in a new environment. This relies on a combination of machine vision (cameras, lidars, short-range radars, etc.), sensor fusion, control logic, and vehicle dynamics, so that the robots can operate easily in various situations and on various floor surfaces.

When physical interactions with the infected space are too dangerous for humans, the autonomous robot can do simple and repetitive tasks. Many solutions exist in robotics where the robot motion is directed by remote control. However, the autonomous disinfection robot can drive on its own to accomplish its functions. It’s based on machine vision (from several onboard sensors) and a typical sense-think-act algorithm, which provides the right commands to the actuators without human presence and therefore without risk of virus contamination.
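To make the sense-think-act idea concrete, here is a minimal sketch of one cycle. It is not the algorithm used in the project: the three range readings (left, center, right), the thresholds, the speeds, and the `half_track` value are all assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Sequence


@dataclass
class Command:
    """Actuator command: forward speed (m/s) and turn rate (rad/s, positive = left)."""
    speed: float
    turn_rate: float


def sense(raw_ranges: Sequence[float], max_range: float = 5.0) -> list[float]:
    """Sense: clip raw range readings (m) to the assumed valid sensor interval."""
    return [min(max(r, 0.0), max_range) for r in raw_ranges]


def think(ranges: Sequence[float], safe_distance: float = 0.8) -> Command:
    """Think: pick a command from left / center / right range readings."""
    left, center, right = ranges
    if center > safe_distance:
        # Path is clear: drive straight ahead.
        return Command(speed=0.5, turn_rate=0.0)
    # Obstacle ahead: stop advancing and turn toward the side with more room.
    turn = 0.6 if left >= right else -0.6
    return Command(speed=0.0, turn_rate=turn)


def act(command: Command, half_track: float = 0.2) -> dict[str, float]:
    """Act: convert the command into left/right wheel speed setpoints (m/s).

    half_track is an assumed half distance between the two driven wheels (m).
    """
    return {
        "left_wheel": command.speed - command.turn_rate * half_track,
        "right_wheel": command.speed + command.turn_rate * half_track,
    }


if __name__ == "__main__":
    readings = [2.0, 0.5, 1.0]  # obstacle 0.5 m ahead, more room on the left
    print(act(think(sense(readings))))
```

In a real robot, `sense` would be replaced by the sensor fusion stage and `act` by the motor controllers; the point here is only the separation into three stages that run once per control cycle.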

In this paper, we would like to give some insights into how the simulation-driven approach can support the development of autonomous disinfecting robots, from system sizing, through sensor design, to the verification and validation of the final control algorithms.

A simulation framework for autonomous systems

The simulation framework combines different Siemens tools already successfully deployed for various autonomous applications in multiple industries, such as self-driving cars in the automotive industry, drones and UAM (urban air mobility) in aeronautics, autonomous agricultural vehicles in heavy equipment, or even battle tanks in hostile environments in defense. Consequently, we applied the same simulation architecture to the emerging medical robot applications, which need to face slightly different requirements.

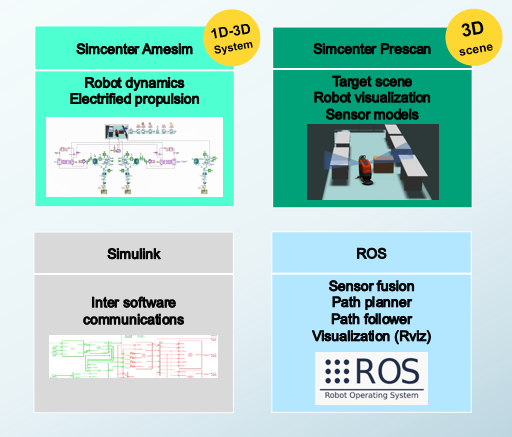

This framework couples several software tools performing time-domain simulations. An alternative workflow would consist of directly connecting Simcenter Amesim and Simcenter Prescan through FMI (Functional Mock-up Interface), which is now available in both tools. The work presented here, however, was created a few months ago, and we used:

- Simcenter Amesim® representing the vehicle dynamics and the electric-driven propulsion.

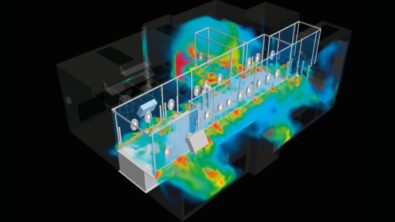

- Simcenter Prescan® representing the hospital environment and modeling the sensors that detect the presence of objects in the environment (cameras, lidars, short-range radars, etc.).

- Simulink® connecting both Simcenter Amesim and Simcenter Prescan.

In addition, we used ROS (Robot Operating System) for the sensor fusion and the control algorithms that provide the actuator commands to the Simcenter Amesim vehicle model.

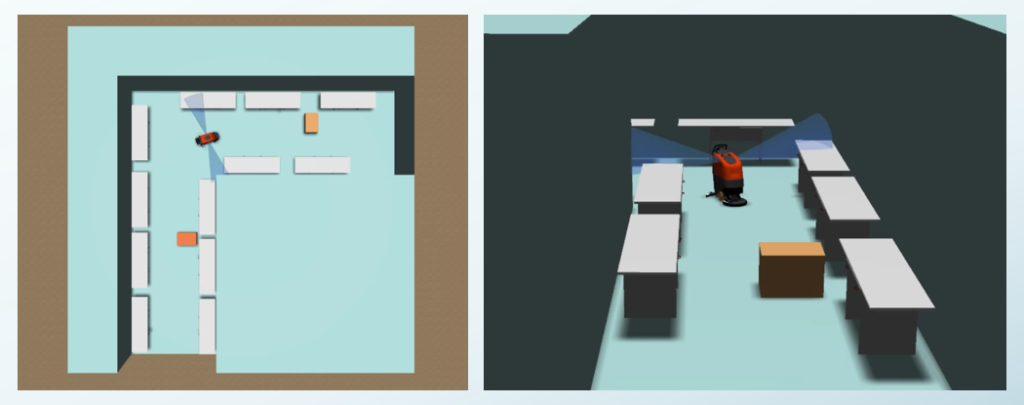

Data flow overview and customized 3D scenes

The state of the disinfecting robot is transferred from Simcenter Amesim to Simulink, which provides the robot’s updated position and orientation to Simcenter Prescan. Next, Simcenter Prescan provides the virtual sensor data to ROS through Simulink. Finally, the ROS control algorithm sends the updated actuator commands to the Simcenter Amesim model so that the robot follows the right path. The control loop is now closed, and the disinfecting robot can move by itself within the environment (the hospital room) while avoiding collisions with the detected obstacles.
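The exchange described above can be illustrated with a toy fixed-step loop. This is only a sketch under strong assumptions: the three classes are hypothetical pure-Python stand-ins for the vehicle model, the environment and sensor model, and the controller. They do not use any Simcenter Amesim, Simcenter Prescan, Simulink, or ROS API, and the robot is reduced to a 1D motion toward a wall.

```python
class VehicleModel:
    """Hypothetical stand-in for the vehicle dynamics: 1D position along a corridor."""

    def __init__(self) -> None:
        self.position = 0.0  # m
        self.speed = 0.0     # m/s

    def step(self, speed_command: float, dt: float) -> float:
        # First-order lag toward the commanded speed (assumed 0.5 s time constant).
        self.speed += (speed_command - self.speed) * dt / 0.5
        self.position += self.speed * dt
        return self.position


class RangeSensorModel:
    """Hypothetical stand-in for the environment and sensor: distance to a wall."""

    def __init__(self, wall_position: float) -> None:
        self.wall_position = wall_position

    def measure(self, robot_position: float) -> float:
        return max(self.wall_position - robot_position, 0.0)


class Controller:
    """Hypothetical stand-in for the control algorithm: slow down near the wall."""

    def __init__(self, stop_distance: float = 0.5, max_speed: float = 1.0) -> None:
        self.stop_distance = stop_distance
        self.max_speed = max_speed

    def command(self, distance: float) -> float:
        # Proportional law (unit gain), saturated between 0 and max_speed.
        return min(max(distance - self.stop_distance, 0.0), self.max_speed)


def run_closed_loop(duration: float = 20.0, dt: float = 0.01) -> float:
    """Run the loop and return the final distance to the wall (m)."""
    vehicle = VehicleModel()
    sensor = RangeSensorModel(wall_position=5.0)
    controller = Controller()
    position = vehicle.position
    for _ in range(int(duration / dt)):
        distance = sensor.measure(position)       # vehicle state -> sensor data
        speed_cmd = controller.command(distance)  # sensor data -> actuator command
        position = vehicle.step(speed_cmd, dt)    # actuator command -> vehicle state
    return sensor.measure(position)


if __name__ == "__main__":
    print(f"final distance to wall: {run_closed_loop():.2f} m")
```

Each pass through the loop mirrors one exchange cycle: the vehicle state feeds the sensor model, the sensor data feeds the controller, and the controller output feeds the vehicle model at the next step.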

When it comes to modeling hospital or warehouse environments, Simcenter Prescan allows importing custom objects typical of these applications as CAD files:

- various geometries of autonomous mobile robots (AMRs),

- geometries for room layouts, corridors, slopes, beds, and obstacles,

- harsh conditions implied by the natural environment (day & night, etc.).

So it’s possible to represent the actual configurations and operating conditions regularly encountered by medical disinfecting robots.

Medical disinfection robots: Modeling the disinfecting robot vehicle dynamics and its 3D environment

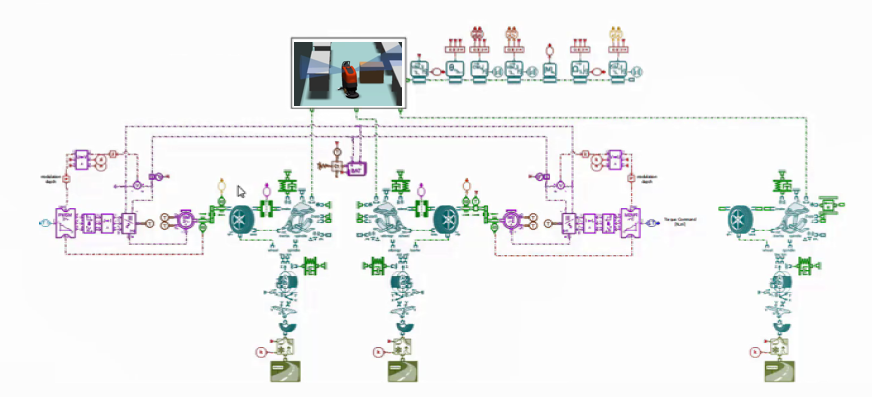

The Simcenter Amesim model predicts the physical behavior and interactions of the different subsystems within a 3-wheeled vehicle. The vehicle has a front drive, including the electric motors with their inverters, controllers, and the 24 V supply battery, and a passive rear wheel. The model also covers the vehicle dynamics, with the chassis, axles, and tires. We use this Simcenter Amesim digital twin to implement autonomous driving functions: we equip the vehicle with sensor models, and finally, we close the loop by linking the decision algorithm between the simulated sensor data and the vehicle model.
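As a much simpler illustration of how such a vehicle moves, the sketch below integrates a purely kinematic model. It assumes that the front drive consists of two independently driven wheels (a differential drive) and that the passive rear wheel follows freely; the text above does not confirm this layout, and the track width, time step, and function names are hypothetical. It ignores the motors, battery, tires, and all dynamic effects that the Simcenter Amesim model captures.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float = 0.0        # m
    y: float = 0.0        # m
    heading: float = 0.0  # rad, counter-clockwise from the x axis


def step_pose(pose: Pose, v_left: float, v_right: float,
              track_width: float, dt: float) -> Pose:
    """Advance the pose by one explicit Euler step of the kinematic model.

    v_left / v_right are the ground speeds (m/s) of the two driven wheels;
    the passive rear wheel is assumed to follow without constraint.
    """
    v = 0.5 * (v_left + v_right)              # forward speed of the axle midpoint
    omega = (v_right - v_left) / track_width  # yaw rate (rad/s)
    return Pose(
        x=pose.x + v * math.cos(pose.heading) * dt,
        y=pose.y + v * math.sin(pose.heading) * dt,
        heading=pose.heading + omega * dt,
    )


def simulate(v_left: float, v_right: float, duration: float,
             track_width: float = 0.4, dt: float = 0.001) -> Pose:
    """Integrate constant wheel speeds for the given duration, starting at the origin."""
    pose = Pose()
    for _ in range(int(round(duration / dt))):
        pose = step_pose(pose, v_left, v_right, track_width, dt)
    return pose


if __name__ == "__main__":
    print(simulate(0.5, 0.5, duration=2.0))   # equal speeds: straight line
    print(simulate(-0.1, 0.1, duration=1.0))  # opposite speeds: turn in place
```

Equal wheel speeds give straight-line motion, and opposite wheel speeds turn the robot in place, which is the behavior a controller would exploit to steer around obstacles.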

We can investigate multiple scenarios and see how the obstacle detection and evasion functions allow the disinfecting robot to drive itself in this unknown 3D environment. Displaying the 3D scene from different views helps in understanding the simulation results. For this, we use various camera orientations, as well as the sensor fusion from the ROS library for video processing.

It’s now clear that the combined Simcenter Amesim and Simcenter Prescan solution successfully supports the development and validation of autonomous functions in disinfecting robots.

Gibin Joe Zachariah and Sagar Milind Supe carried out the above application case as part of their investigation work on new advanced smart products at Siemens Digital Industries Software (DISW) in Michigan, United States. Both are part of the Siemens Simcenter Engineering and Consulting services team.