The electronics manufacturer’s guide to practical data analytics

Smart data as the building block of digitalization

Requirements for higher product quality and reliability are driving a growing need for meaningful analytics in manufacturing. An MIT study estimates that 70% of the data generated in high-tech manufacturing goes unused: either it was not collected properly, or there is no way to use it intelligently to benefit the business. When set up correctly, data collection and analysis systems can help manufacturers by acting as the front line of communications and control.

Data is the key that lets companies optimize their processes, reduce costs, and accurately measure ROI, all by making good decisions about products, services, employees, and strategy.

Data collection and analysis can help manufacturers:

- Detect and minimize the impact of problems on the final product or process with real-time corrective action

- Gather intelligence during escalation events, freeing engineering to focus on re-engineering that eliminates root causes

Accurate data is required to adjust processes and ensure quality over time. This is difficult because not all data comes in the same format, and not all sensors perform consistently over time. How do you identify the best data to collect, and how do you separate junk data from useful, or “smart,” data?
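As a rough illustration of separating junk data from usable data, the Python sketch below applies two simple checks: it rejects missing or physically implausible readings, and it flags a sensor whose recent values never change, which can indicate a frozen sensor. The sensor name and limit values are hypothetical placeholders; real plausibility rules would come from equipment specifications and process knowledge.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Reading:
    sensor_id: str
    value: Optional[float]  # None when the sensor reported nothing


# Hypothetical plausibility limits per sensor; real limits come from equipment specs.
LIMITS: Dict[str, Tuple[float, float]] = {
    "oven_zone1_temp_c": (20.0, 300.0),
}


def is_valid(reading: Reading) -> bool:
    """Reject missing values and values outside the sensor's plausible range."""
    if reading.value is None:
        return False
    # Sensors without configured limits are only checked for missing values.
    low, high = LIMITS.get(reading.sensor_id, (float("-inf"), float("inf")))
    return low <= reading.value <= high


def looks_stuck(values: List[float], window: int = 5) -> bool:
    """Flag a sensor whose last `window` values are all identical (possibly frozen)."""
    return len(values) >= window and len(set(values[-window:])) == 1


def filter_readings(readings: List[Reading]) -> List[Reading]:
    """Keep only readings that pass the plausibility checks."""
    return [r for r in readings if is_valid(r)]


readings = [
    Reading("oven_zone1_temp_c", 245.0),
    Reading("oven_zone1_temp_c", None),   # missing value: rejected
    Reading("oven_zone1_temp_c", 999.0),  # implausible value: rejected
]
print(len(filter_readings(readings)))  # prints: 1
```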

Roadblocks to electronics manufacturing data collection and analysis

Quality, usable data is integral to smart manufacturing. It enables companies to use data intelligently to drive informed business decisions. Not knowing the best way to read, understand, and apply data can cost your business. Those costs could result in lost revenue opportunities, lower efficiency and productivity, quality issues, and more. Manufacturers that fail to analyze the data from production equipment and adequately predict machine performance and downtime put their manufacturing capabilities at risk. Breakdowns happen suddenly and cause a massive hit to productivity.

Unfortunately, it is not easy for manufacturers to ensure that correct, usable data is being collected. In many instances, the interfaces to data collection sources are complex, and the data lacks standardization. Manufacturers often need vendor assistance to retrieve data from a machine and must then invest significant time and resources in normalizing the data so it can be used effectively in their manufacturing analytics.

The difference between data and analytics

For years, companies collected data in large volumes. The highly varied data from manufacturing operations arrives quickly and needs to be validated and prioritized by value so that it can be turned into something useful: transformed from big data into smart data. Twenty years ago, a PCB work order generated 100 data records (megabytes of data); today it generates 10 billion records (terabytes of data). The investment in collecting and storing data is high, and without a way to analyze it, the ROI is non-existent.

Analytics, however, are only as useful as the data they are built on. Data that is bad, unvalidated, or inappropriately prioritized is misleading and wastes time.

- High-quality data is normalized, formatted, and validated so it can be translated and read to provide insight and foresight. It is data that is understood at the point of consumption and can be acted on immediately.

- Data analytics is the process of examining data sets to draw conclusions about the information they contain. Manufacturing intelligence means taking big data, applying advanced analytics, and putting it back into manufacturing processes.

Electronics manufacturers leverage analytics in:

- Asset management for accurate, real-time utilization and overall equipment effectiveness (OEE)

- Traceability for capturing and investigating complete material and process traceability data for individual PCBs as well as full system assemblies, using high-availability big-data storage

- Operation and labor management to measure and analyze how resources are spent and to track work in progress (WIP) in real time

- Quality control to identify and analyze process and material failures and drive continuous improvement

- Design-to-manufacturing flow to detect factors affecting yield and point out areas for improvement
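Overall equipment effectiveness (OEE) is commonly computed as the product of availability, performance, and quality. The sketch below uses those widely used textbook definitions; the shift figures are invented for illustration, and a real system would derive them from machine event data rather than from hand-entered numbers.

```python
from typing import NamedTuple


class OEEResult(NamedTuple):
    availability: float
    performance: float
    quality: float
    oee: float


def compute_oee(planned_min: float, downtime_min: float, ideal_cycle_min: float,
                total_count: int, good_count: int) -> OEEResult:
    """Textbook OEE; assumes all inputs are positive and downtime < planned time."""
    run_min = planned_min - downtime_min
    availability = run_min / planned_min                      # share of planned time spent running
    performance = (ideal_cycle_min * total_count) / run_min   # actual output vs. ideal-speed output
    quality = good_count / total_count                        # share of units that were good
    return OEEResult(availability, performance, quality,
                     availability * performance * quality)


# Illustrative shift: 480 min planned, 48 min down, 0.5 min ideal cycle, 800 boards, 784 good.
result = compute_oee(480, 48, 0.5, 800, 784)
print(f"OEE = {result.oee:.1%}")  # prints: OEE = 81.7%
```

Because the three factors multiply, OEE reduces to good output at ideal speed divided by planned time, which is why it needs data from scheduling, machine events, and quality inspection at once.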

Creating usable data: normalizing, interpreting and exchanging

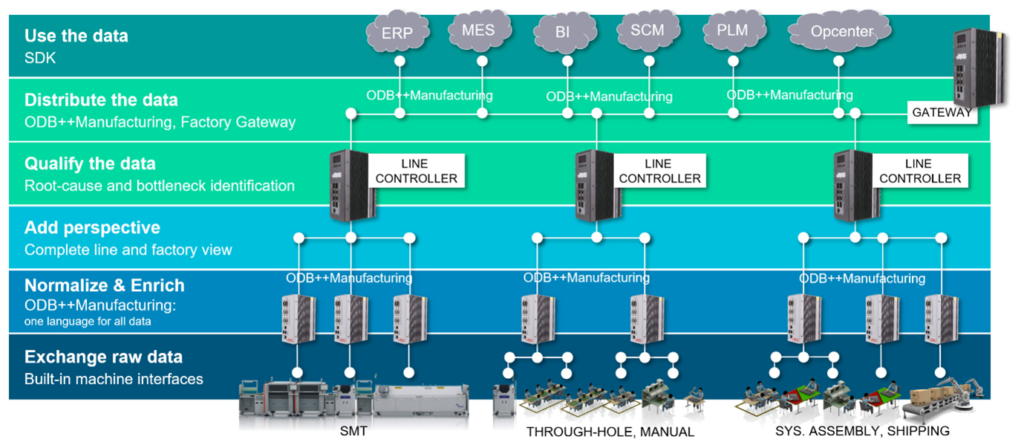

Beyond data collection, normalized data is required to integrate automated processes and computerize human decision-making.

Normalized data is expressed in a single language with a consistent meaning regardless of the source of the information.

To make the data usable from point to point and throughout the systems, it must be understood from machine to machine, hardware to software, and system to person. Normalization is the process of reducing measurements to a “neutral” or “standard” scale; its purpose is to make variables comparable to each other.
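A minimal sketch of the two ideas in this paragraph, assuming temperature readings from hypothetical vendors that report in different units: first translate every value into one agreed unit, then rescale with a z-score so that variables with different ranges can be compared. The z-score is one common choice of standard scale, not the only one; min-max scaling to [0, 1] is another.

```python
from statistics import mean, pstdev
from typing import Callable, Dict, List, Tuple

# Hypothetical: different vendors report the same quantity (temperature) in different units.
TO_CELSIUS: Dict[str, Callable[[float], float]] = {
    "C": lambda v: v,
    "F": lambda v: (v - 32.0) * 5.0 / 9.0,
    "K": lambda v: v - 273.15,
}


def to_standard_unit(value: float, unit: str) -> float:
    """Step 1: express every reading in one agreed unit (here degrees Celsius)."""
    return TO_CELSIUS[unit](value)


def standardize(values: List[float]) -> List[float]:
    """Step 2: z-score scaling, so variables with different ranges become comparable."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # constant series: no spread to scale by
    return [(v - mu) / sigma for v in values]


raw: List[Tuple[float, str]] = [(245.0, "C"), (473.0, "F"), (518.15, "K")]
print([round(to_standard_unit(v, u), 2) for v, u in raw])  # prints: [245.0, 245.0, 245.0]
```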

A fully normalized database allows its structure to be extended to accommodate new types of data with minimal changes to the existing structure. As a result, applications that interact with the database are minimally affected. Normalized relations, and the relationships between one normalized relation and another, mirror real-world concepts and their interrelationships.
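To illustrate how a normalized structure absorbs new kinds of data, the sketch below uses Python's built-in sqlite3 module with a hypothetical three-table schema. Because each measurement type is a row in its own table rather than a dedicated column, adding a new type (here an oxygen reading) is an INSERT, and the existing tables and queries stay untouched. Table, column, and machine names are illustrative assumptions.

```python
import sqlite3

# Hypothetical normalized schema: machines, measurement types, and measurements
# live in separate tables linked by keys.
SCHEMA = """
CREATE TABLE machine (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE measurement_type (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE,
    unit TEXT NOT NULL
);
CREATE TABLE measurement (
    machine_id INTEGER NOT NULL REFERENCES machine(id),
    type_id    INTEGER NOT NULL REFERENCES measurement_type(id),
    ts         TEXT NOT NULL,
    value      REAL NOT NULL
);
"""


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO machine (name) VALUES ('reflow_oven_1')")
    conn.execute("INSERT INTO measurement_type (name, unit) VALUES ('zone1_temp', 'C')")
    return conn


def add_measurement_type(conn: sqlite3.Connection, name: str, unit: str) -> int:
    """A new kind of data is a new row, not a new column: no table is altered."""
    cur = conn.execute("INSERT INTO measurement_type (name, unit) VALUES (?, ?)", (name, unit))
    return cur.lastrowid


def record(conn: sqlite3.Connection, machine: str, type_name: str, ts: str, value: float) -> None:
    """Store one measurement, resolving machine and type names to their keys."""
    conn.execute(
        """INSERT INTO measurement (machine_id, type_id, ts, value)
           SELECT m.id, t.id, ?, ? FROM machine m, measurement_type t
           WHERE m.name = ? AND t.name = ?""",
        (ts, value, machine, type_name),
    )


def readings_by_type(conn: sqlite3.Connection):
    return conn.execute(
        """SELECT t.name, t.unit, x.value
           FROM measurement x JOIN measurement_type t ON t.id = x.type_id
           ORDER BY t.name"""
    ).fetchall()


conn = build_db()
add_measurement_type(conn, "o2_ppm", "ppm")  # extend with a new data type
record(conn, "reflow_oven_1", "zone1_temp", "2024-01-01T08:00", 245.0)
record(conn, "reflow_oven_1", "o2_ppm", "2024-01-01T08:00", 800.0)
print(readings_by_type(conn))
```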

The ODB++Manufacturing (ODB++M) machine-language specification defines how information, including its format and content, is exchanged between automated and manual processes and systems. This ability to communicate is essential for a reliable and robust data-exchange environment. With a standardized format, a new machine or process introduced anywhere in the manufacturing operation can be integrated immediately, without rewriting software interfaces, algorithms, or data definitions. ODB++M is designed as part of a plug-and-play architecture covering all manufacturing events, such as machine events, material logistics, quality issues, and product flow control.
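The sketch below does not implement ODB++M; its record layout is a hypothetical stand-in. It shows the kind of per-vendor translation code that accumulates without a shared standard: each machine type needs its own adapter to map proprietary messages into one common event record. Both vendor message formats and all event codes are invented for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional


@dataclass(frozen=True)
class MachineEvent:
    """Hypothetical common event record; NOT the ODB++M schema."""
    machine_id: str
    event: str                # e.g. "board_completed", "error"
    board_id: Optional[str]


# Hypothetical proprietary event codes mapped to common event names.
EVENT_CODES_A = {"DONE": "board_completed", "ERR": "error"}
EVENT_CODES_B = {"FINISHED": "board_completed", "ALARM": "error"}


def from_vendor_a(msg: Dict[str, str]) -> MachineEvent:
    """Vendor A (hypothetical) sends key/value messages."""
    return MachineEvent(msg["mc"], EVENT_CODES_A[msg["evt"]], msg.get("pcb"))


def from_vendor_b(line: str) -> MachineEvent:
    """Vendor B (hypothetical) sends 'machine;code;board' text lines."""
    machine, code, board = line.split(";")
    return MachineEvent(machine, EVENT_CODES_B[code], board or None)


ADAPTERS: Dict[str, Callable[[Any], MachineEvent]] = {
    "vendor_a": from_vendor_a,
    "vendor_b": from_vendor_b,
}


def normalize(source: str, raw: Any) -> MachineEvent:
    """Route a raw message to the adapter registered for its source."""
    return ADAPTERS[source](raw)


print(normalize("vendor_a", {"mc": "P1", "evt": "DONE", "pcb": "SN123"}))
print(normalize("vendor_b", "PLACER-2;FINISHED;SN124"))
```

With a standardized exchange format, these adapters would be unnecessary, because every machine would already emit the common record.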

Collecting the right data – and using it to improve manufacturing

Improving the performance of a factory may look different to various manufacturing stakeholders. With the mix of different customers, products, factories, lines, and machines, there are hundreds of different KPIs to consider. Some measurements, such as overall equipment effectiveness, can be complex and require data from multiple processes. Measuring external factors such as equipment malfunctions, upstream waits, material shortages, and operator breaks is critical to providing a complete picture for identifying root causes and taking corrective action.

- Process-specific applications provide performance data about the status of equipment.

- Site-based constraints, such as the overall factory schedule, material availability, or upstream/downstream bottlenecks, can be used to qualify any process status.
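A sketch of how site-level context might qualify a raw machine status, assuming hypothetical status names and a simplified set of site signals. An idle machine is labeled with the most likely external cause, so that idle time is not all attributed to the machine itself. The order of the checks is a design choice made for this example; a real system would define its own precedence rules.

```python
from dataclasses import dataclass


@dataclass
class SiteContext:
    """Hypothetical site-level signals available from higher-level applications."""
    scheduled: bool              # is the line on the factory schedule right now?
    material_available: bool     # are the required components at the machine?
    upstream_has_boards: bool    # is WIP waiting at the machine's input?
    downstream_can_accept: bool  # can the next process take the finished board?


def qualify_status(machine_status: str, ctx: SiteContext) -> str:
    """Attach an external cause to an 'idle' status; other statuses pass through."""
    if machine_status != "idle":
        return machine_status
    if not ctx.scheduled:
        return "idle_not_scheduled"
    if not ctx.material_available:
        return "idle_material_shortage"
    if not ctx.upstream_has_boards:
        return "idle_waiting_upstream"
    if not ctx.downstream_can_accept:
        return "idle_blocked_downstream"
    return "idle_unexplained"  # no external constraint found: investigate the machine


ctx = SiteContext(scheduled=True, material_available=False,
                  upstream_has_boards=True, downstream_can_accept=True)
print(qualify_status("idle", ctx))  # prints: idle_material_shortage
```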

With information about process performance and the external constraints influencing production, many optimization opportunities are possible using an intelligence application. The process-specific layer would be able to optimize based on external knowledge from other processes and higher-level applications, while the site-application layer would benefit from detailed process information from each individual piece of equipment.

Examples of applying data in the factory include:

- Feedback: Closed-loop feedback uses data from one process to adjust the operation of another process automatically to maintain a consistent result.

- Finite planning: The SMT schedule can be significantly improved and optimized through automation and computerization.

- Lean material management: Using the rich, detailed information available in the smart factory, a lean material engine can bridge the gap between the ERP inventory and the shop floor to provide just-in-time (JIT) material logistics to the machines.

- Traceability: Detailed and consistent data can be gathered regardless of the equipment platform.
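Closed-loop feedback can be sketched as a proportional correction. The example assumes a hypothetical setup in which a downstream inspection step reports position offsets in micrometres and an upstream machine exposes an adjustable offset setting. The gain, deadband, and step limit are illustrative values: the deadband avoids reacting to measurement noise, and the step limit keeps any single update small.

```python
from typing import List


def feedback_correction(measured_offsets_um: List[float], current_setting_um: float,
                        gain: float = 0.5, deadband_um: float = 5.0,
                        max_step_um: float = 20.0) -> float:
    """Return a new upstream setting that moves against the mean downstream offset."""
    if not measured_offsets_um:
        return current_setting_um  # no new data: leave the setting alone
    mean_offset = sum(measured_offsets_um) / len(measured_offsets_um)
    if abs(mean_offset) <= deadband_um:
        return current_setting_um  # within tolerance: do not chase noise
    step = -gain * mean_offset     # proportional correction opposite to the error
    step = max(-max_step_um, min(max_step_um, step))  # cap how far one update can move
    return current_setting_um + step


# Downstream inspection (hypothetical) sees boards consistently ~10 um off target.
print(feedback_correction([12.0, 8.0, 10.0], current_setting_um=0.0))  # prints: -5.0
```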
