Engineering simulation part 2 – Productivity, Personas and Processes

Worldwide there are at least 8 million engineers who could benefit from the use of simulation to make better products, faster. Of those, only ~750 thousand use simulation in their product design processes [1]. The engineering simulation market is far from saturation and has been growing steadily at over 10% CAGR for many years.

Productivity

The investment of time and effort in deploying simulation in engineering design is rewarded with an increase in productivity. Productivity is in effect what you are buying from your simulation software vendor. But what is productivity and therefore how might it be further enhanced?

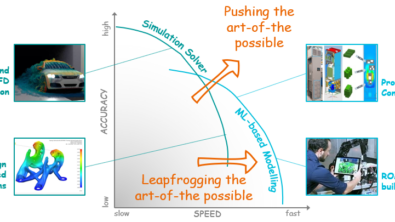

From an engineering simulation perspective, output is measured in terms of what is functionally possible, most often realised as the range of physical behaviours that can be predicted, be it thermal, fluid, structural, EM, optical or acoustic. The past four decades have seen massive advances in these capabilities and, although innovations continue in some simulation enclaves, the majority of common functional capabilities are now plateauing and becoming commoditised. For CFD, for example, one need look no further than the increasing use of open-source CAE tools.

Going forward, it’s a reduction in effort that offers the biggest potential for a continued roadmap of productivity enhancements. Not what a simulation tool can do, but how it does it: the so-called non-functional abilities, covered by a range of abstract nouns such as stability, usability, repeatability, dependability, discoverability, accessibility, rapidity etc.

Cloud Deployment, Automation, Vertical Apps and Conversational AIs

There are various ways in which effort might be minimised, thus increasing productivity. Barriers to entry start with software accessibility. Cloud deployment with browser GUI access removes the difficulties associated with local installation, licensing, data storage, burst compute power access etc. On its own, however, this cloud approach might be considered guilty of merely providing difficult-to-use software to a wider range of people.

Automation via scripting has gone in and out of fashion over the years. UDFs (user-defined functions) were always a way to circumvent deficiencies, or rather a lack of implemented abilities available through the GUI. In the last couple of years Python support has become standard in many software packages. Still, the need to script abilities is just a workaround for the fact that the software vendor has not implemented support for your specific workflow. The onus to decrease effort via automation is put on the user, as is the obligation to maintain that automation should the software vendor change anything in the underlying software architecture.
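To make the point concrete, here is a minimal sketch of the kind of automation script a user might end up writing and maintaining: a parametric sweep over dissipated power and airflow. Everything in it is an assumption for illustration. `Case`, `run_simulation` and `sweep` are hypothetical names, and `run_simulation` is a toy stand-in for a solver call, not any vendor's Python API.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Case:
    """One design variant to simulate (hypothetical parameters)."""
    power_w: float      # dissipated power in W
    airflow_m3h: float  # fan airflow in m^3/h


def run_simulation(case: Case, ambient_c: float = 25.0) -> float:
    """Hypothetical stand-in for a solver call; returns a peak temperature in degC.

    A real script would call the simulation tool's scripting interface here.
    This toy model simply scales the temperature rise with power and
    inversely with airflow.
    """
    return ambient_c + 40.0 * case.power_w / case.airflow_m3h


def sweep(powers, airflows):
    """Run every power/airflow combination and collect the results by case."""
    return {
        Case(p, q): run_simulation(Case(p, q))
        for p, q in product(powers, airflows)
    }


if __name__ == "__main__":
    results = sweep(powers=[5.0, 10.0], airflows=[20.0, 40.0])
    # List the variants from coolest to hottest.
    for case, temp_c in sorted(results.items(), key=lambda kv: kv[1]):
        print(f"{case.power_w:5.1f} W, {case.airflow_m3h:5.1f} m3/h -> {temp_c:.1f} degC")
```

Even in a sketch this small, the maintenance burden is visible: if the call hidden behind `run_simulation` changes between software releases, it is the user's script that breaks.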

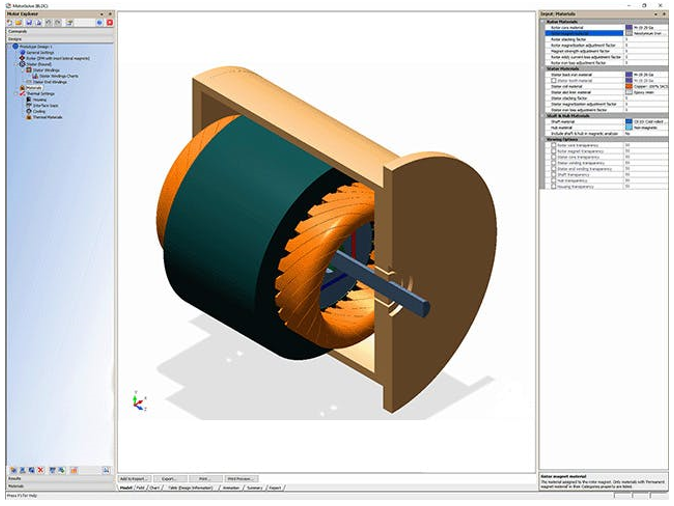

Vertical apps are designed to perform a limited range of simulations, targeted at a specific application. Implicit in that constrained ability is an increase in usability, a better assurance of stability and a reduced need for function discoverability. Sure, such apps are gated to the extent where they can only do one thing, but they therefore do that with ease. Be it tools such as Simcenter Motorsolve (for EM motor design), Simcenter Flotherm (for electronics cooling simulation) or templated apps that can be authored from a general-purpose simulation tool for non-expert usage, vertical apps continue to hold promise for democratisation.

CAE users have to be bilingual. They have to translate their desired intent, easily verbalised in their native language, into the language of the software: a series of button clicks and numeric value entries. To become proficient in such translation takes time and effort. Take this example of a Simcenter Flotherm user who wants to know which PCB components exceed a certain temperature threshold:

Twelve manual operations are required, along with pre-existing knowledge of where those functions are accessed and how. Considering that such a search query was simply not possible a few years ago, it is indeed a massive step forward in reducing effort compared to having to manually datamine the same information. However, it’s still a halfway house. Ultimately, you’d want to have your natural language request understood and acted on by your simulation software directly:

This shifts the obligation to translate your request into actions onto the software itself. Although the above was prototyped using a rules-based system, it is indicative of what natural language conversational interfaces might be capable of.
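As a rough illustration of what 'rules-based' can mean here, the sketch below matches a single hand-written pattern against a typed request and turns it into a temperature filter. It is not the prototype itself. The rule, the function name `answer` and the component temperatures are all made up for the example.

```python
import re
from typing import List, Mapping

# Illustrative component temperatures in degC (made-up values, not real results).
COMPONENT_TEMPS_C = {"U1": 92.5, "U2": 71.0, "Q3": 105.2, "C7": 48.3}

# A single hand-written rule: "... component(s) ... exceed/above/over/hotter than <number>".
THRESHOLD_RULE = re.compile(
    r"components?.*?(?:exceed(?:s|ing)?|above|over|hotter than)\s+(\d+(?:\.\d+)?)",
    re.IGNORECASE,
)


def answer(request: str, temps: Mapping[str, float]) -> List[str]:
    """Translate a natural-language request into a threshold filter.

    Returns the names of components hotter than the requested limit,
    sorted alphabetically. Raises ValueError if no rule matches.
    """
    match = THRESHOLD_RULE.search(request)
    if match is None:
        raise ValueError(f"No rule understands the request: {request!r}")
    limit_c = float(match.group(1))
    return sorted(name for name, temp_c in temps.items() if temp_c > limit_c)


if __name__ == "__main__":
    print(answer("Which PCB components exceed 90 degC?", COMPONENT_TEMPS_C))
```

The brittleness is the point: any phrasing the rule's author did not anticipate raises an error, which is exactly the gap a more general conversational interface would need to close.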

ChatGPT, since its release in November 2022, has revolutionised expectations as to the capabilities of Large Language Models (LLMs). LLMs are nothing new but appear to have reached a turning point, a final corner turned on the way to Turing Test compliance. They offer not only conversational access to information (up to the timestamp of the last piece of data used to train the LLM), but also the prospect of an agent, a personal assistant who might do your heavy or repetitive lifting. LLMs can be trained to produce code from a given request, which in turn might be executed on a given software. Here is a prototype Simcenter LLM demonstration, and this is a neat example of a ChatGPT for Blender:

No need to translate your request into a series of button presses yourself, just have your request understood and acted on directly. Despite some reported issues of repeatability and dependability with similar types of ChatGPT interfaces, it’s only a matter of time before the LLMs mature to provide an error-free user experience.

Personas

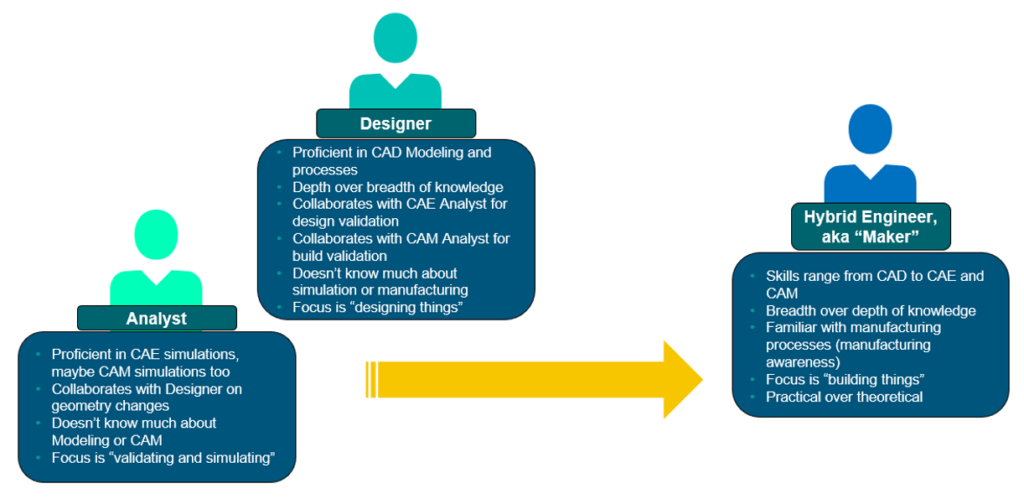

Designers vs. Analysts, it’s a trope and for good reason. The expertise required to unlock the functional capabilities of CAE simulation software as it started to come out of academia in the ’60s and ’70s was such that you had to at least have a degree in engineering or computational science, if not a PhD. Analysts, focussing on specific fields of physics, formed competence centres in larger organisations. A range of commercially available software sprang up to serve the needs of these specialists, which in turn enabled them to support the needs of the designers within their companies.

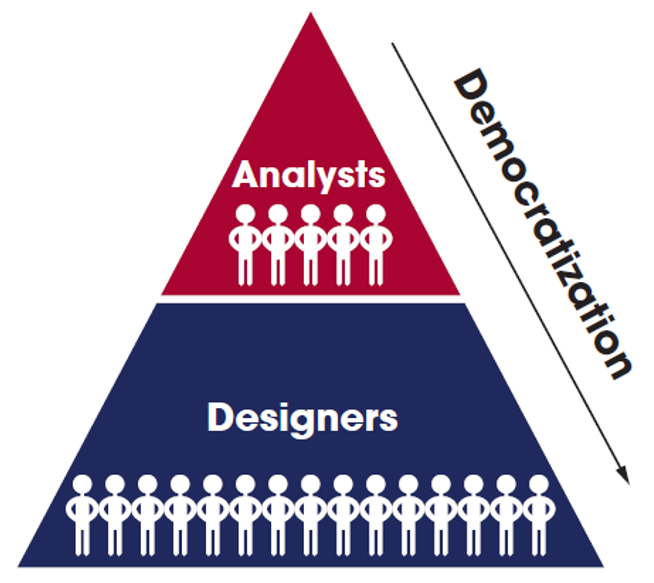

Democratization

‘The action of making something accessible to everyone’.

Whereas simulation analysts enable better products to be designed, they simply do not have the time or resource capability to serve the needs of all design departments to consider the physical ramifications of each and every product design concept variant. The risk being that some non-compliant designs make it through a gated process only to fail during physical prototyping. But what if designers could benefit from simulation, at least to the extent where go/no-go indication could be provided with little effort? This would liberate the specialists to focus on specific challenges that require their unique skills, not bog them down in day-to-day turning-the-handle type simulations.

Democratization of engineering simulation is a common phrase nowadays and implies the adoption of simulation throughout an organisation. Makes good sense for the productivity of that organisation, makes good commercial sense for the software vendors serving that organisation.

One need only look as far as the automotive industry to see a successful implementation of a democratization roadmap: from the specialist, extremely high-maintenance vehicles of 150+ years ago, through Henry Ford’s first affordable Model T, to modern level 4+ ‘eyes-off’ autonomy.

Whereas vehicles started off as the preserve of the well-funded specialists, today and more so in the near future, they will be accessible to all. There is no reason not to assume engineering simulation software should, and will, go the same way.

Today a user of an automobile is focused more than ever on the UX, the infotainment systems, the comfort, much less on the engine (motor) or suspension. In the same way, a simulation software user will in the future be more concerned about their ability to use the product than about what its functional capabilities are.

Makers?

Although large organisations can afford the investment to support a wide range of differing and dedicated engineering and design disciplines, SMEs are more constrained. In lieu of such siloed specialisation, a hybrid engineering role might be envisioned. A ‘Maker’ who has more knowledge breadth than depth and is focused on ‘making things’ as opposed to just designing or simulating or manufacturing them.

As the democratisation of manufacture (cf. Additive Desktop Manufacture) evolves in lockstep with that of democratized, simulation-enabled design, the role of the Maker will become more profound. A decentralization of competences that plays well into agile, time-critical industrial design practices.

Processes

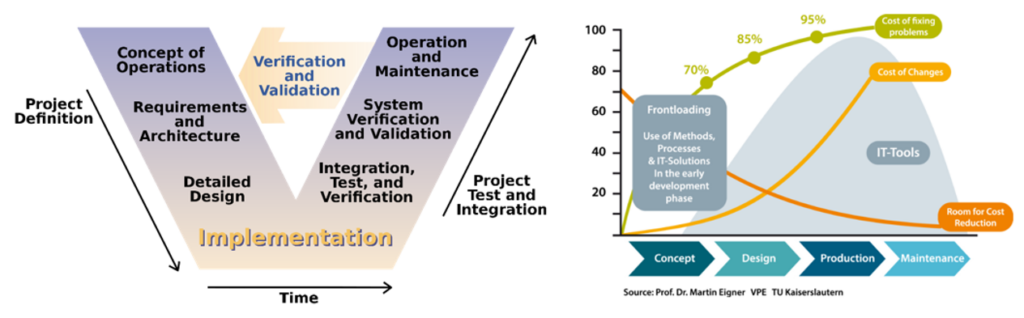

CAE is a toolset that can be applied throughout a product lifecycle. Despite a range of possible applications, from simulation-enabled conceptual design decision making, through physics-informed manufacturing process parameter optimisation, to in-operation health-monitoring executable digital twins, we still find that CAE is most commonly used for design verification. Once the design is detailed, simulation is then used to confirm its operational compliance. And if an issue is found at that stage? Well, it’s a bit late in the day to easily (read: cheaply) implement remedial solutions. Whether you call it ‘Shift-left’ or ‘Front-loading’, application of simulation as early as possible in the V-cycle has a very tangible ROI.

Does a product design process ever go smoothly? Are there never any unforeseen issues that are encountered that have to be addressed? I doubt that very much. Although the word ‘mistake’ is quite stark (an act or judgement that is misguided or wrong), nevertheless design mistakes are made. This shouldn’t be considered a deficiency, rather just a reality. The question then is how to minimise their frequency and mitigate their impact.

“Make mistakes early and often, just don’t make the same mistake twice.” (Anon.)

The Agile Manifesto was published back in 2001. Although originally targeted at software development practices, more recently it is being adopted as an engineering design paradigm. It recognises the need for agility, the ability to be able to respond quickly to changing conditions whether they be changing customer requirements or unforeseen engineering challenges.

Whereas product manufacture is bound by the cost of tooling, product development need not be, especially with virtual design where MCAD and CAE are employed in lieu of (or as an adjunct to) physical build and test. A waterfall development process is a Victorian-era legacy that has its roots in the cost of ‘mistakes’. Change requests to such a process were considered repellent as they led to time-consuming and costly re-tooling. It’s much cheaper now to run a new simulation than it is to build a new physical prototype, which in turn is much cheaper than it is to change a production line.

Embrace change as a fact of life; don’t consider it a ‘mistake’. To do so might give the impression that change is something you could design out of your processes, when it never will be.

But where does this leave simulation as an asset to be adopted by an agile product (or process) development? The opportunity is that simulation can be employed from the earliest inception of a concept idea all the way through to aiding in its manufacture, operation and ultimate retirement. Simulation models are cheap (compared to the alternative), often quicker (compared to the alternative) and play a central role in the digitalisation of human engineering endeavour.

[1] COFES 2015, Malcolm Panthaki https://www.youtube.com/watch?v=-P1jWjTX9I0

Further Reading

- Engineering Simulation Part 1 – All Models are Wrong – “all models are wrong; the practical question is how wrong do they have to be to not be useful.”

- Engineering Simulation Part 3 – Modelling – “Everything should be made as simple as possible, but not simpler.”

Disclaimer

This is a research exploration by the Simcenter Technology Innovation team. Our mission: to explore new technologies, to seek out new applications for simulation, and boldly demonstrate the art of the possible where no one has gone before. Therefore, this blog represents only potential product innovations and does not constitute a commitment for delivery. Questions? Contact us at Simcenter_ti.sisw@siemens.com.