Innovation Fair

Siemens PLM (Tecnomatix) held an Innovation Fair at our offices in Israel last month, a kind of extension of the 3D Party held here last year. This year’s event took us much further, letting us get familiar with and experience really innovative technologies that connect us to the ultra-modern digital manufacturing environment.

Touring around the 5 or so “booths” got us immersed in…

Simulation in Virtual Reality

Each participant was invited to put on a special visor and pick up hand-held controls (which resemble small black metal detector wands). This immediately immersed us in a ‘life-size’ surround animation. Using one of the hand controls, we could jump to different locations by directing a beam to the spot we wished to move to and then pressing the trigger to “beam” over. By turning around in the room, we experienced rotating 360 degrees in the virtual scene and could observe the view from any angle, as in real life.

When we used the hand controls to select annotation and text tools, the tools appeared in the foreground of our view, which allowed us to mark up the 3D scene. One of the most interesting possibilities is that of more than one person, working at different remote locations, all ‘entering’ the virtual animated factory and collaborating “in-person” in the scene.

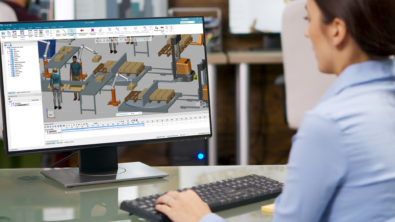

Digital Manufacturing Viewer

This viewer is a simple yet powerful way to see manufacturing information, and it could be a really useful way for OEMs to send data and information to their suppliers, for example. The OEM can post simulations with 3D capabilities like zooming, sectioning and viewing PMIs, and can even allow the supplier to add annotations.

This viewer can also display Work Instructions from Teamcenter Manufacturing disconnected from the server – EWI Offline – used by shop floor operators and suppliers.

CAM Viewer

One of the exhibits at the Innovation Fair demoed a new CAM Viewer, which is still under development. It could serve remotely located machining engineers, making it a breeze for them to collaborate on CNC tool path planning done in NX. One scenario demonstrates its powerful communication potential: an engineer who would like input on some questions about a tool path can share his or her current view of the path and send it in a message that opens with this view and the text that the engineer types in. The colleague receives a notification and opens the message (on his or her laptop or mobile device) to see the captured view and message. They can then respond with information, like a screen grab from their NX scene, or send their current view back with some text added.

To see the Factory – Intosite

The Innovation Fair had an exhibit demoing a Google Cardboard-based solution which interfaces Intosite (using Google Maps) with OpenGL. Holding up a smartphone encased in Google Cardboard in front of our eyes, we entered a panoramic (360-degree) picture that we could look around in by turning our heads. The Intosite view was loaded with layers of data, like info about room names, an inventory of the furniture there, access to user manuals, names and facts about the people in the place, what machines were in use there, and more. As we looked around the place, our vision would fall upon pre-located 360 placemark icons, and by fixing our gaze upon one for a few seconds, the current scene would give way to a new panoramic environment. Once again, we could explore the new ‘place’ by turning our heads to all angles, and discover much embedded information about the new scene.

HoloLens

At the HoloLens corner, two large plasma screens hung on the wall behind us. The organizers had two different demonstrations running there, and when we donned the oversized HoloLens visor to experience them, what we saw was transmitted at the same time to the screens.

In one demo there was a hologram of a Siemens controller, which seemed to be suspended in front of us. Wherever we focused our visor-ed gaze, from any side, or top and bottom, our perspective of the unit changed, and was displayed on the large screen behind us. We were moving around, crouching under and peering over the virtual object made visible to our eyes by the visor. But it wasn’t only about 3D vision: they gave us a sheet printed with simple commands, and by reading one aloud, we initiated a simulation of a simple assembly procedure, made it continue to the next stage, and had it run in reverse. We could also enlarge or shrink the view of the object by saying “bigger” or “smaller”, and have the hologram turn around by saying “rotate object”.

The second demo was a life-size 3D assembly animation, observed through the visor. We could move around it and issue simple voice commands to change the view, which appeared on the screen the whole time, exactly as we were viewing it.

And just to hammer home the fun in all of it, everyone who signed in and experienced all of the exhibits was treated to a present of… a Cardboard unit for our smartphones!