From ‘delve’ to neural operators: How AI is reshaping our writing and our CFD

How AI linguistic patterns mirror the rise of AI-Accelerated CFD

Tools that shape us

We shape our tools and thereafter our tools shape us.

– Father John Culkin, paraphrasing Marshall McLuhan (1964)

Last month, I caught myself writing “let’s delve into the pressure distribution” in a technical email. I stopped mid-sentence, startled. In twenty-five years of Computational Fluid Dynamics (CFD) work, I had never once used “delve” in professional correspondence. Yet there it was, flowing naturally from my fingertips.

The same phenomenon is playing out in engineering, where AI-Accelerated CFD is reshaping not just simulation workflows, but how engineers think about models, speed, and possibility.

McLuhan’s observation wasn’t about AI, but he might as well have been describing the current era. Large Language Models (LLMs) are reshaping how we write. Not through force or regulation, but through something more subtle: they’re making certain words and phrases feel right to us. They’re installing new linguistic reflexes.

Open almost any model announcement from 2023–2024 and the title ends with ‘…showcases’.

Scan 2024 academic papers and “delve” multiplies like bacteria in a petri dish. Read anything from early 2025, and dashes appear where commas once sufficed. Each AI generation leaves its fingerprints across millions of documents, and we, the writers, absorb these patterns unconsciously.

I started cataloging these shifts. What began as mild curiosity evolved into a taxonomy: the linguistic eras of AI. Distinct periods where specific vocabulary explodes across the internet, saturates technical discourse, then fades as the next model architecture introduces new verbal tics.

Then I noticed something unexpected. My field, Computational Fluid Dynamics (CFD), was experiencing identical waves. Not linguistic waves, but methodological ones.

Physics-Informed Neural Networks (PINNs) dominated every conference in 2020, before fading as their limitations became clear. By 2023, AI Surrogate Models had captured the spotlight. Today, Reduced Order Models appear in virtually every funding proposal and journal submission. Each technique brings its own vocabulary burst: “projection-based ROM,” “neural operators,” “manifold learning,” “hyper-reduction.”

The CFD side reveals the same dynamic: methodological eras transforming how we simulate. We built PINNs to solve fluid equations faster, and PINNs changed how we conceptualize the relationship between neural networks and physics. We built language models to assist our writing, and they’re rewiring our prose style. Tools shaping their shapers.

This post maps both territories: from the vocabulary quirks leaking into your emails to the Fourier Neural Operator that cut a building’s energy bill by 35%. The linguistic eras transforming how we communicate, and the methodological eras transforming how we simulate. Two revolutions, one principle: McLuhan was right.

The linguistic eras of AI

Each AI generation leaves measurable linguistic fingerprints across millions of documents:

| Era | Period | Viral token(s) | Trigger date | Measurable spike |
| --- | --- | --- | --- | --- |
| Prompt | 2021 – Oct 2022 | “As an expert…”, role-play prompts | GPT-3 API (mid-2021) | Prompt repos >10× |
| Hallucination | Nov 2022 – mid 2023 | “hallucination(s)” | ChatGPT (30 Nov 2022) | Google searches >50× |
| Showcase | 2023 – mid 2024 | “showcase(s)” plural | GPT-4 (14 Mar 2023) / Claude 3 (4 Mar 2024) | Default demo title |
| Delve | Sep 2024 – Feb 2025 | “delve / delves deeply” | o1-preview (12 Sep 2024) | 68× increase 2020-2024 in PubMed (Juzek & Ward, 2025; preprint 2024) |
| Dash | Jan 2025 – present | “—” visible separators | o3 (16 Apr 2025) / Claude 3.7 (24 Feb 2025) reasoning traces | 90% of answers |

(A quick note: when I say “era”, I’m not claiming the old vocabulary vanished. It simply stopped dominating the discourse, just as people still say “showcase” today, even though its peak has passed. The Prompt and Hallucination Eras represent a different mode of influence: rather than adopting AI’s style, we created vocabulary to interact with and describe AI. Yet this too is tools reshaping language. The medium generating its own discourse.)

The pattern is unmistakable. Each model release introduces vocabulary quirks that leak from training data and system prompts into human writing. We absorb these patterns unconsciously, then propagate them across technical forums, academic papers, and professional communications.

The Prompt Era established that careful phrasing improves AI outputs. The Hallucination Era taught us to verify everything. The Showcase Era normalized breathless demonstrations. The Delve Era made a fantasy-novel verb ubiquitous in technical writing. The Dash Era is teaching engineers who are not native English speakers punctuation they’d never used before.

The AI-Accelerated CFD revolution: five distinct phases

CFD underwent its own AI transformation, one measured not in words but in wall-clock time, mesh resolution, and parametric design space exploration.

Here too, the tools we built began shaping how we think about simulation.

Phase 1: The PINNs Era (2019-2022)

Physics-Informed Neural Networks (PINNs) burst onto the CFD scene with a compelling promise: embed the Navier-Stokes equations directly into neural network loss functions, reducing the need for massive labeled datasets.

The appeal was immediate. Traditional CFD required extensive mesh generation, iterative solver convergence, significant computational resources, and expert knowledge to set up simulations.

PINNs theoretically offered a shortcut: train a network to simultaneously satisfy governing equations and boundary conditions, then query solutions at arbitrary points in space and time.
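To make "embedding the equations in the loss" a little more concrete, a typical PINN objective for incompressible flow can be sketched as below. This is a generic form, not a specific published variant: the weights $\lambda_r, \lambda_c, \lambda_b$, the collocation sets of size $N_r$ and $N_b$, and the prescribed boundary data $\mathbf{u}_b$ are assumptions for illustration, and $(\mathbf{u}_\theta, p_\theta)$ denotes the network outputs.

```latex
\mathcal{L}(\theta) =
  \lambda_r \,\frac{1}{N_r}\sum_{i=1}^{N_r}
    \left\| \partial_t \mathbf{u}_\theta
          + (\mathbf{u}_\theta \cdot \nabla)\,\mathbf{u}_\theta
          + \frac{1}{\rho}\nabla p_\theta
          - \nu \nabla^2 \mathbf{u}_\theta \right\|^2_{(\mathbf{x}_i,\,t_i)}
  + \lambda_c \,\frac{1}{N_r}\sum_{i=1}^{N_r}
    \left| \nabla \cdot \mathbf{u}_\theta \right|^2_{(\mathbf{x}_i,\,t_i)}
  + \lambda_b \,\frac{1}{N_b}\sum_{j=1}^{N_b}
    \left\| \mathbf{u}_\theta(\mathbf{x}_j, t_j) - \mathbf{u}_b(\mathbf{x}_j, t_j) \right\|^2
```

The first two terms penalize violations of momentum and mass conservation at interior points, and the third enforces boundary and initial data. The derivatives are obtained by automatic differentiation of the network, which is why no mesh is needed.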

We shaped PINNs to solve fluid dynamics, and PINNs began shaping how we thought about the relationship between neural networks and physics.

Reality check: PINNs excelled in specific scenarios (simple geometries, well-posed problems, cases with limited experimental data). However, they struggled with complex, turbulent flows where traditional CFD already performed well, problems requiring fine-scale resolution of boundary layers, multi-physics coupling with disparate time scales, and generalization beyond training domains.

The era taught the CFD community an important lesson: physics constraints help, but they don’t eliminate the need for high-quality training data or appropriate inductive biases.

Phase 2: The Surrogate Model Era (2022-2024)

As the limitations of PINNs became apparent, attention shifted toward AI surrogate models: fast approximations that predict specific engineering quantities of interest (lift, drag, heat transfer coefficients) without resolving full spatial fields.

Surrogates accept the need for high-fidelity CFD data but aim to minimize the number of expensive simulations required to build a valid approximation across the design space.

Multiple approaches competed:

- Gaussian Process Regression offered excellent uncertainty quantification but was limited in practice to hundreds of design points, because exact inference scales cubically with the number of training samples.

- Polynomial Chaos Expansion provided fast evaluation but struggled with high-dimensional parameter spaces.

- Neural Networks were flexible but required careful architecture selection and regularization.
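As a rough illustration of the Gaussian Process option in the list above, here is a minimal NumPy sketch of a one-parameter surrogate with built-in uncertainty. The `expensive_cfd` function is a made-up stand-in for a real solver run, and the kernel length scale and noise level are assumed values rather than tuned ones.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.2, variance=1.0):
    """Squared-exponential kernel between two sets of 1-D design points."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length_scale) ** 2)

def gp_fit_predict(x_train, y_train, x_query, noise=1e-6):
    """Return the GP posterior mean and standard deviation at x_query."""
    K = rbf_kernel(x_train, x_train) + noise * np.eye(x_train.size)
    L = np.linalg.cholesky(K)                       # O(n^3) step that limits GP size
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    K_s = rbf_kernel(x_train, x_query)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = rbf_kernel(x_query, x_query).diagonal() - np.sum(v ** 2, axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

def expensive_cfd(design_param):
    """Hypothetical stand-in for a CFD run: a made-up smooth response curve."""
    return np.sin(2.0 * np.pi * design_param) + 0.5 * design_param

# Eight "simulations" spread over the design range [0, 1].
x_train = np.linspace(0.0, 1.0, 8)
y_train = expensive_cfd(x_train)

# Query one point inside the sampled range and one far outside it.
x_query = np.array([0.5, 3.0])
mean, std = gp_fit_predict(x_train, y_train, x_query)
```

Inside the sampled range the predicted standard deviation is small. Far outside it, the standard deviation returns to the prior value and the mean falls back toward zero, which is the surrogate's way of signalling that it is extrapolating.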

Some commercial CFD vendors integrated surrogate capabilities, promising “1000× speedup” for parametric studies. While marketing numbers varied, real applications demonstrated genuine value: an aerospace company could explore 10,000 airfoil variants in hours rather than months.

We built surrogates to accelerate design exploration, and surrogates changed how we conceived of design space optimization, no longer limited by computational budget.

The limitation: Surrogates were black boxes that needed careful construction. You could evaluate them quickly, but understanding why a design performed well, or safely extrapolating beyond training data, remained challenging.

Phase 3: The ROM renaissance (2024-Present)

By 2024, the limitations of surrogates, fast but blind to full-field physics, became clear. The next wave was inevitable: Reduced Order Models (ROMs). Unlike surrogates, ROMs compress the entire solution manifold rather than approximating isolated quantities of interest, enabling real-time predictions of complete flow fields. ROMs represent the current frontier, combining the best aspects of physics-based simulation and data-driven acceleration. A transient CFD simulation might generate terabytes of flow field data, but the essential dynamics often live on a much lower-dimensional manifold.

Key ROM techniques transforming CFD:

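- Proper Orthogonal Decomposition (POD): Identifies dominant spatial modes in flow fields through singular value decomposition. A thermal simulation of a battery pack with 50 million cells and 1000 time steps might be accurately represented by 20 POD modes, so each snapshot is described by 20 coefficients instead of 50 million values, a state reduction of 2,500,000 to 1. Storage for the full time series shrinks less dramatically, by roughly 50 to 1, because the 20 spatial modes themselves must still be stored.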

- Dynamic Mode Decomposition (DMD): Extracts spatio-temporal coherent structures with associated frequencies and growth rates. Perfect for oscillatory flows such as vortex shedding or combustion instabilities.

- Neural Operators: Unlike traditional neural networks that map finite-dimensional vectors, operators map entire functions to functions. Fourier Neural Operators (FNO) and Deep Operator Networks (DeepONet) learn solution operators for PDEs, so a trained model can be queried for new boundary conditions or parameters without retraining; how well this extends to unseen geometries depends on the architecture and the training data. This is the framework behind Simcenter Physics AI, now integrated into Simcenter STAR-CCM+ Design Manager (release 2602).

- Hyper-reduction: Techniques like the Discrete Empirical Interpolation Method (DEIM) reduce not only the solution space but also the computational cost of evaluating nonlinear terms, enabling real-time-capable models.

We shaped ROMs to compress physics, and ROMs are now shaping how we conceptualize the relationship between high-fidelity simulation and real-time prediction. The Chalmers HVAC example in the next section demonstrates this transformation in action.
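The POD idea from the list above fits in a few lines of NumPy. The sketch below uses synthetic snapshots (a handful of made-up spatial structures with oscillating amplitudes) in place of exported CFD fields, and all sizes are illustrative assumptions. It also separates two ratios that are easy to conflate: the reduction in state dimension per snapshot and the reduction in total storage.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells, n_steps, n_modes = 2000, 200, 5

# Synthetic snapshot matrix: one column per time step (stand-in for CFD output).
x = np.linspace(0.0, 1.0, n_cells)
t = np.linspace(0.0, 1.0, n_steps)
snapshots = sum(
    np.outer(np.sin((k + 1) * np.pi * x), np.cos(2.0 * np.pi * (k + 1) * t)) / (k + 1)
    for k in range(n_modes)
)
snapshots += 1e-6 * rng.standard_normal(snapshots.shape)  # small measurement-like noise

# POD via thin SVD of the mean-subtracted snapshots.
mean_field = snapshots.mean(axis=1, keepdims=True)
U, s, Vt = np.linalg.svd(snapshots - mean_field, full_matrices=False)
modes = U[:, :n_modes]                       # dominant spatial modes
coeffs = modes.T @ (snapshots - mean_field)  # temporal coefficients, (n_modes, n_steps)

# Reconstruct the fields from the reduced representation.
reconstruction = mean_field + modes @ coeffs
rel_error = np.linalg.norm(snapshots - reconstruction) / np.linalg.norm(snapshots)

# Two different "compression" figures.
state_ratio = n_cells / n_modes                            # values per snapshot
full_storage = n_cells * n_steps
rom_storage = n_cells * (n_modes + 1) + n_modes * n_steps  # modes + mean + coefficients
storage_ratio = full_storage / rom_storage
```

Because the synthetic data is low-rank by construction, five modes reproduce it almost exactly. Real flow fields decay more slowly, so the number of modes is usually chosen from the singular value spectrum `s` rather than fixed in advance.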

Phase 4: Active learning integration (today)

This cutting-edge approach combines ROMs with active learning. The active learning algorithm identifies regions of high uncertainty in the design space, automatically triggers new high-fidelity CFD runs there, and iteratively refines the model through a feedback loop.

This closes the gap between fast exploration and accurate validation, delivering semi-autonomous design optimization in real industrial projects. Active learning effectively acts as an automated, self-improving Design-of-Experiments process, guided by meta-models such as ROMs and surrogates, that decides where best to sample the design space. This principle is already at work in Simcenter HEEDS, whose SHERPA algorithm applies this logic today.
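The feedback loop described above can be sketched with a small Gaussian process as the meta-model. This is a generic uncertainty-sampling loop, not the SHERPA algorithm or any vendor implementation: `run_high_fidelity` is a hypothetical placeholder for launching a CFD run, and the kernel settings are assumed.

```python
import numpy as np

def rbf(a, b, length_scale=0.15):
    """Squared-exponential kernel between two sets of 1-D design points."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_cand, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_cand."""
    K = rbf(x_train, x_train) + noise * np.eye(x_train.size)
    K_s = rbf(x_train, x_cand)
    mean = K_s.T @ np.linalg.solve(K, y_train)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

def run_high_fidelity(x):
    """Hypothetical placeholder for a CFD run at design point x."""
    return np.sin(6.0 * x) * np.exp(-x)

candidates = np.linspace(0.0, 1.0, 201)   # design points we are allowed to sample
x_train = np.array([0.0, 1.0])            # start with the two extremes
y_train = run_high_fidelity(x_train)
history = []                              # worst-case uncertainty before each new run

for _ in range(10):
    _, std = gp_posterior(x_train, y_train, candidates)
    history.append(std.max())
    x_next = candidates[np.argmax(std)]   # sample where the model is least certain
    x_train = np.append(x_train, x_next)
    y_train = np.append(y_train, run_high_fidelity(x_next))

mean, std = gp_posterior(x_train, y_train, candidates)
max_error = np.max(np.abs(mean - run_high_fidelity(candidates)))
```

With fixed kernel settings, the posterior uncertainty depends only on where samples sit, so this loop behaves like an adaptive space-filling design. Practical tools usually blend uncertainty with the predicted objective so that sampling concentrates near promising designs.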

Phase 5: Digital twins that learn (emerging now)

We have been shipping digital twins for years, each generation capturing more complexity as data, models and compute improved.

What is new and rapidly scaling in 2026 is the ability of these twins to continuously retrain themselves on live sensor data and operational conditions without human intervention. This evolution turns a static replica into a genuinely predictive, self-evolving system. A digital twin of an aircraft engine, built on ROMs derived from high-fidelity CFD thermal and flow simulations, doesn’t just replicate design-point performance; it adapts based on real flight data, predicting maintenance needs before sensors detect anomalies.

Reduced Order Models (ROMs) transform CFD

Consider a real-world application from a 2025 master’s thesis at Chalmers University: intelligent HVAC control for building energy efficiency.

The traditional challenge: HVAC systems consume massive amounts of energy in buildings worldwide. Optimizing them requires understanding complex 3D airflow patterns, temperature distributions, and their interaction with control strategies. Real-time CFD simulation is computationally impossible for building control systems.

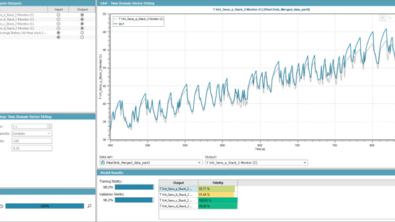

High-fidelity CFD approach: Researchers created 973 steady-state Simcenter STAR-CCM+ simulations covering realistic boundary conditions. Each simulation captured complete 3D temperature and velocity fields. Running these simulations in real time for adaptive control? Computationally infeasible.

ROM approach using Fourier Neural Operator (FNO): The team trained an FNO surrogate model on the CFD dataset. FNO is an open academic architecture (Li et al., 2021); Simcenter STAR-CCM+ provided the training data, not the solver. The results were remarkable. The neural operator reproduced full temperature and velocity fields in milliseconds, with >90% of points within 5% relative error. Median prediction errors: 0.0169°C for temperature, 0.0034 m/s for velocity.
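For readers who have not met the architecture, the core of a Fourier layer is short enough to sketch in NumPy. This is a simplified, single-channel, one-dimensional illustration with random untrained weights, not the thesis model: the real FNO uses many channels, multi-dimensional FFTs, and learned parameters, and the `tanh` activation here is an assumption chosen for brevity.

```python
import numpy as np

def spectral_conv_1d(u, weights):
    """Reweight the lowest Fourier modes of u and discard the rest.

    u       : real samples of a periodic function on a uniform grid
    weights : complex array, one multiplier per retained mode
    """
    n_modes = weights.size
    u_hat = np.fft.rfft(u)
    out_hat = np.zeros_like(u_hat)
    out_hat[:n_modes] = u_hat[:n_modes] * weights
    return np.fft.irfft(out_hat, n=u.size)

def fourier_layer(u, weights, w_local, bias):
    """One simplified Fourier layer: spectral path plus pointwise path."""
    return np.tanh(spectral_conv_1d(u, weights) + w_local * u + bias)

rng = np.random.default_rng(0)
weights = rng.standard_normal(4) + 1j * rng.standard_normal(4)  # random, untrained

def sample_input(n):
    """The same input function sampled on an n-point periodic grid."""
    x = np.arange(n) / n
    return np.sin(2.0 * np.pi * x) + 0.3 * np.cos(4.0 * np.pi * x)

# Apply the identical layer at two resolutions.
coarse = fourier_layer(sample_input(64), weights, w_local=0.5, bias=0.1)
fine = fourier_layer(sample_input(128), weights, w_local=0.5, bias=0.1)
```

Because the weights act on Fourier modes rather than grid points, the same layer gives matching values on the 64-point and 128-point grids wherever the grids coincide. That independence from the discretization is what "mapping functions to functions" means in practice.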

Real-world impact: With the fast FNO surrogate enabling real-time physics-informed predictions, a Soft Actor-Critic reinforcement learning controller achieved 35% energy cost reduction compared to conventional PID control. Annual consumption dropped from 7,460 kWh to 4,836 kWh. The savings persisted across all seasons, with thermal comfort deviations beyond ±2.5°C occurring only 2.3% of the time.
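The headline percentage can be checked directly from the two consumption figures quoted above. The short sketch below does that, and also shows how a comfort metric of the "share of time outside ±2.5°C" kind is computed. The deviation values are made up purely to demonstrate the calculation and are not data from the thesis.

```python
import numpy as np

baseline_kwh = 7460.0   # conventional PID control, from the text
rl_kwh = 4836.0         # reinforcement learning controller, from the text
saving_fraction = (baseline_kwh - rl_kwh) / baseline_kwh   # about 0.352

def fraction_outside_band(deviation, band=2.5):
    """Share of samples whose absolute deviation exceeds the comfort band."""
    deviation = np.asarray(deviation, dtype=float)
    return np.mean(np.abs(deviation) > band)

# Made-up temperature deviations in °C, only to illustrate the metric.
example_deviation = np.array([0.2, -1.1, 2.7, 0.4, -0.3, 1.9, -2.6, 0.0, 0.8, -0.5])
example_share = fraction_outside_band(example_deviation)
```

The consumption figures give a reduction of about 35.2%, consistent with the quoted 35%.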

(Source: https://blogs.sw.siemens.com/simcenter/whats-new-in-simcenter-reduced-order-modeling/)

This transformation, from computationally infeasible to real-time intelligent control, explains why ROMs and neural operators dominate current CFD research. The gap isn’t just about speed. It’s about enabling entirely new applications that were previously impossible.

The terminology is leaking everywhere. Open any 2025 CFD conference proceedings and you’ll find:

- “Projection-based ROM with hyper-reduction”

- “Non-intrusive reduced order modeling”

- “Manifold ROMs with physics constraints”

- “Neural operator learning for parameterized PDEs”

These phrases appear with the same ubiquity that em-dashes now mark AI-generated reasoning traces. We built the tools, and the tools are shaping our technical vocabulary.

Real-world impact in Simcenter STAR-CCM+

This evolution isn’t theoretical speculation. Modern CFD platforms are shipping AI and ROM capabilities:

Design Manager integration: Connects high-fidelity CFD with surrogate-based exploration, enabling engineers to navigate design spaces orders of magnitude faster than traditional parametric studies.

POD-ROM workflows: Transform gigabyte-scale transient simulations into megabyte-compressed models while maintaining accuracy for subsequent parametric variations.

Adjoint solvers: Provide the gradient information that feeds AI-driven active learning loops, enabling intelligent identification of high-value design points across massive parameter spaces.

Fidelity-adaptive control: Toggle between reduced-order speed and full-order accuracy based on confidence metrics, the CFD equivalent of reasoning models switching between fast and thorough analysis modes.

Simcenter PhysicsAI (2602): AI-powered predictions replace hours-long CFD loops during early design phases, letting engineers focus high-fidelity simulations only on the most promising concepts. Train models from existing simulation data directly within Design Manager, compare AI predictions side-by-side with full CFD, and iterate at the speed of design.

The tools exist today. The question is whether the CFD community will adopt them as rapidly as the internet adopted AI linguistic patterns.

Running with the red queen

Now, here, you see, it takes all the running you can do, to keep in the same place. If you want to get somewhere else, you must run at least twice as fast as that!

– Lewis Carroll, Through the Looking-Glass

The linguistic eras of AI, from prompts to hallucinations to showcases to delves to dashes, demonstrate McLuhan’s principle at internet scale. We built language models, and they’re reshaping how millions of people write.

The same pattern plays out in CFD. We built PINNs, surrogates, and ROMs to accelerate simulation, and these tools are fundamentally changing how we conceptualize the design process, the relationship between computation and experimentation, and the very nature of engineering analysis.

Engineers must now run to keep pace with evolving vocabulary, both linguistic and technical. Remember when we waited three days for a single design point? Remember when PINNs were going to replace traditional CFD?

The gap between AI eras shrinks: years for PINNs, months for surrogates, weeks for new linguistic patterns—days for the next weird punctuation habit (pun-dash intended).

Already, 2025 gave us “vibe coding”: an entire development philosophy named, adopted and debated within weeks, while 2026’s keyword is “agentic”: AI systems that don’t just generate text or predictions but act autonomously. The vocabulary never stops arriving.

Staying current requires constant learning, adaptation and willingness to let our tools reshape how we think.

The question isn’t whether this will continue. It’s whether we’ll run fast enough to shape the tools wisely before they shape us completely.

Join the conversation: Share your experiences with AI-Accelerated CFD, ROM implementations, or observations about AI linguistic evolution in the comments below. What vocabulary shift have you noticed in your own technical writing? What era are we entering next?

References

Juzek, T. S., & Ward, Z. B. (2024). Why Does ChatGPT “Delve” So Much? Exploring the Sources of Lexical Overrepresentation in Large Language Models. https://arxiv.org/abs/2412.11385

Venkatachalam, S., & Gopalakrishna, R. (2025). Dynamic HVAC Control Using Machine Learning and CFD. Master’s thesis, Chalmers University of Technology.

Amine-Eddine, G. (2024, April 29). What’s new in Simcenter Reduced Order Modeling? Siemens Blog. https://blogs.sw.siemens.com/simcenter/whats-new-in-simcenter-reduced-order-modeling/