Effective Embedded Software Development in the Midst of Exploding Complexity

Mechanical and electrical engineers have traditionally relied on engineering physics to design, build, and test novel devices and processes. They focus on combining various functions in innovative ways to solve a problem, much like assembling an intricate physical puzzle. Solving that puzzle can boost efficiency and performance in machines ranging from an automobile high-traction motor to an urban air taxi.

Smarter Products Need More Software

Today’s products and systems are increasingly designed to be smarter and more connected. Companies now pair that same automobile high-traction motor with a system that notifies the driver, and perhaps even the automaker, when it is time to service the vehicle. And that urban air taxi? Manufacturers now build in an electronic control system that reports on its operations and identifies problems before failures occur, enabling predictive maintenance.

Simply put, the amount of embedded software in today’s products, both in the consumer and industrial realms, is on the rise. In fact, 68% of respondents in Lifecycle Insights’ Engineering Executive Strategic Agenda Study reported that the complexity of on-board or embedded software is increasing or increasing greatly, the highest of any engineering domain. That’s why it is essential to understand some of the key advances in embedded software development that can mitigate the risks of this explosive growth.

Multi-Domain Systems Planning

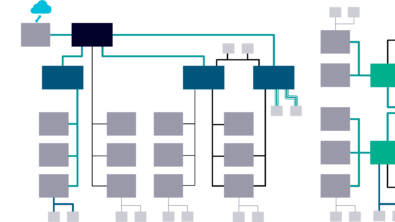

As products grow more complex, the components of the electronic/electrical (E/E) system must communicate in a tightly coordinated fashion to deliver intelligent features. That coordination depends on careful, planned integration of the embedded software, electronics, sensors, actuators, electrical distribution networks, and more.

Engineering teams often focus on which electronic control unit (ECU) will run which software modules. While you can shift functions and their associated modules from ECU to ECU, you can’t do so without considering other factors. For example, say you move a software-enabled function from one ECU to another. That change could overload the receiving ECU’s processor or the network’s bandwidth. A decision based on software considerations alone could have catastrophic consequences.

In contrast, three capabilities used in concert make a tangible difference.

- The ability to explore different architectures by moving functions and associated software modules around from ECU to ECU.

- The ability to get insight into the impact of such changes on performance measures like processor and network bandwidth utilization.

- The ability to frontload quality assurance to identify and resolve issues earlier in the software development process.
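The first two capabilities can be illustrated with a minimal Python sketch of the kind of budget check an architecture-exploration tool runs each time a function moves between ECUs. Everything here is hypothetical: the function names, ECU names, CPU percentages, signal rates, the 500 kbit/s bus, and the 70% CPU and 50% bus budgets are illustration values, and treating processor load as simply additive is a simplification that real timing analysis would replace.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Function:
    name: str
    cpu_pct: int  # estimated share of one ECU's processor time, in percent (hypothetical)


@dataclass(frozen=True)
class Signal:
    source: str      # function that produces the signal
    sink: str        # function that consumes it
    bits_per_s: int  # average network load the signal causes if it crosses ECUs


def cpu_by_ecu(allocation: dict[str, str], functions: list[Function]) -> dict[str, int]:
    """Sum the processor load of every function allocated to each ECU."""
    loads: dict[str, int] = {}
    for f in functions:
        ecu = allocation[f.name]
        loads[ecu] = loads.get(ecu, 0) + f.cpu_pct
    return loads


def bus_pct(allocation: dict[str, str], signals: list[Signal], bus_bps: int) -> float:
    """Percent bus utilization: only signals between functions on different ECUs use the network."""
    traffic = sum(s.bits_per_s for s in signals if allocation[s.source] != allocation[s.sink])
    return 100.0 * traffic / bus_bps


def check_allocation(allocation, functions, signals, bus_bps, cpu_limit=70, bus_limit=50.0):
    """Return human-readable budget violations for one candidate architecture."""
    problems = []
    for ecu, load in sorted(cpu_by_ecu(allocation, functions).items()):
        if load > cpu_limit:
            problems.append(f"{ecu}: CPU {load}% exceeds {cpu_limit}% budget")
    bus = bus_pct(allocation, signals, bus_bps)
    if bus > bus_limit:
        problems.append(f"bus: {bus:.1f}% exceeds {bus_limit:.1f}% budget")
    return problems


functions = [Function("motor_control", 60), Function("battery_monitor", 20), Function("service_alert", 15)]
signals = [
    Signal("motor_control", "service_alert", 120_000),
    Signal("battery_monitor", "service_alert", 40_000),
]

baseline = {"motor_control": "ECU_A", "battery_monitor": "ECU_B", "service_alert": "ECU_B"}
candidate = dict(baseline, service_alert="ECU_A")  # move one function to the other ECU

print(check_allocation(baseline, functions, signals, bus_bps=500_000))   # []
print(check_allocation(candidate, functions, signals, bus_bps=500_000))  # ['ECU_A: CPU 75% exceeds 70% budget']
```

In this toy example, moving `service_alert` cuts bus utilization from 24% to 8%, because only the battery signal still crosses the network, but it pushes ECU_A past its CPU budget. That is the kind of trade-off a software-only view would miss.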

Together, these capabilities let you make better-informed decisions about where to place software modules to optimize the system and software architecture as a whole. This approach is time well spent: it helps avoid integration problems during the prototyping and test phases. In the end, you save time, money, and resources, keeping projects on time and on budget.

Frictionless Handoff from Systems to Coding

Too often, electrical/electronic engineers and software developers work in silos. Requirements can be lost in translation as groups pass information around. The entire design team must understand which software module fulfills which requirements and functions. A mutual understanding of requirements acts as the contract between systems engineers, software architects, and developers and helps to avoid unnecessary friction during hand-off.

Development teams that take a higher-level approach to E/E systems and software architecture can ensure that all requirements are considered during design. But it’s not enough to define clearly and unambiguously the requirements and functions fulfilled by each software module. It’s also crucial to communicate those requirements just as clearly and unambiguously to the developers who will implement those modules.
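One way to make the requirements-to-module contract explicit is a traceability check that runs alongside the architecture. The minimal Python sketch below assumes a simple mapping from hypothetical module names to hypothetical requirement IDs. It flags requirements that no module fulfills and modules that trace to no requirement, and it produces the per-module list a developer would receive at handoff.

```python
from collections import defaultdict

# Hypothetical requirement IDs and texts, for illustration only.
requirements = {
    "REQ-101": "Notify the driver when the traction motor needs service",
    "REQ-102": "Report motor temperature to the service back end every 10 s",
    "REQ-103": "Log over-temperature events with a timestamp",
}

# Hypothetical allocation: which software module fulfills which requirements.
allocation = {
    "service_alert": ["REQ-101"],
    "telemetry": ["REQ-102"],
    "diagnostics": [],
}


def trace(requirements: dict[str, str], allocation: dict[str, list[str]]):
    """Return (requirements no module fulfills, modules that fulfill no requirement)."""
    covered = defaultdict(list)
    for module, req_ids in allocation.items():
        for req_id in req_ids:
            if req_id not in requirements:
                raise KeyError(f"{module} traces to unknown requirement {req_id}")
            covered[req_id].append(module)
    unallocated = sorted(r for r in requirements if r not in covered)
    orphans = sorted(m for m, req_ids in allocation.items() if not req_ids)
    return unallocated, orphans


def handoff_sheet(module: str, requirements: dict[str, str], allocation: dict[str, list[str]]) -> list[str]:
    """The per-module list of requirements a developer receives at handoff."""
    return [f"{r}: {requirements[r]}" for r in allocation[module]]


print(trace(requirements, allocation))  # (['REQ-103'], ['diagnostics'])
print(handoff_sheet("telemetry", requirements, allocation))
```

Either finding is a gap in the contract: an unallocated requirement will not be implemented by anyone, and an orphan module has no stated reason to exist.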

E/E systems are only becoming more complex. Managing that complexity requires careful planning of the data exchanged across software modules. For the system as a whole to work, the messages that sensors send to an electronic controller must arrive on time and in the proper format. Determining what data is communicated, and how often, between different electronics and software modules is another vital part of the development process. It’s critical to eliminate the potential for miscommunication and misinterpretation about how the system’s components, including software components, will work together to fulfill system requirements.
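The timing and format expectations for a message can be written down as an interface specification and checked against recorded or simulated traffic. In this minimal Python sketch, the message name, the 100 ms period, the 5 ms jitter allowance, and the single `temp_c` field with its valid range are all hypothetical; a real project would take them from its own interface definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MessageSpec:
    name: str
    period_ms: int   # nominal transmission period
    jitter_ms: int   # allowed lateness beyond the period
    fields: dict[str, tuple[float, float]]  # field name -> (min, max) valid range


def check_stream(spec: MessageSpec, messages: list[tuple[int, dict]]) -> list[str]:
    """Check (timestamp_ms, payload) pairs, in arrival order, against the spec."""
    issues = []
    prev_t = None
    for t, payload in messages:
        # Timing: the gap since the previous message must not exceed period + jitter.
        if prev_t is not None and t - prev_t > spec.period_ms + spec.jitter_ms:
            issues.append(f"t={t}: late by {t - prev_t - spec.period_ms} ms")
        prev_t = t
        # Format: exactly the expected fields, each within its valid range.
        if set(payload) != set(spec.fields):
            issues.append(f"t={t}: fields {sorted(payload)} != {sorted(spec.fields)}")
            continue
        for field, (low, high) in spec.fields.items():
            if not low <= payload[field] <= high:
                issues.append(f"t={t}: {field}={payload[field]} out of range [{low}, {high}]")
    return issues


motor_temp = MessageSpec("motor_temp", period_ms=100, jitter_ms=5, fields={"temp_c": (-40, 200)})
received = [
    (0, {"temp_c": 80}),
    (100, {"temp_c": 82}),
    (230, {"temp_c": 85}),   # arrives 30 ms late
    (330, {"temp_c": 250}),  # implausible value
    (430, {"rpm": 3000}),    # wrong payload layout
]
for issue in check_stream(motor_temp, received):
    print(issue)
```

Running it reports three issues: the late message at t=230, the out-of-range value at t=330, and the wrong field layout at t=430.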

Progressive Virtual Verification

Once requirements have been defined and communicated, the next step is to use progressive virtual verification to test the system at different levels of fidelity. This effort has three stages.

- First, verify the software model’s logic and performance with a Model-in-the-Loop (MiL) test, which connects the software model to a simulation of the rest of the product.

- Next comes the second stage of virtual verification, Software-in-the-Loop (SiL) testing. Here, you compile the software into binary code and run it on a virtual version of the integrated circuit (IC), an entire circuit board, or a network with multiple electronic endpoints. You can then test the compiled software against a 1D system simulation, verifying that everything still works as it should after the move from the model to compiled code.

- Finally, you can move on to Hardware-in-the-Loop (HiL) testing. This last stage of the virtual verification process runs the compiled software on prototype target electronics, with a real-time simulation standing in for the rest of the product.
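To show what connecting a software model to a simulation of the rest of the product means in practice, here is a minimal Model-in-the-Loop sketch in Python. The controller (a PI speed controller), the plant (a first-order motor lag stepped with forward Euler), every gain and time constant, and the requirement that speed settle within 2% of setpoint in under 1.5 s are hypothetical choices made for illustration.

```python
class PIController:
    """Stand-in for the software model under test: a hypothetical PI speed controller."""

    def __init__(self, kp: float, ki: float, dt: float):
        self.kp, self.ki, self.dt = kp, ki, dt
        self.integral = 0.0

    def step(self, setpoint: float, measured: float) -> float:
        error = setpoint - measured
        self.integral += error * self.dt
        return self.kp * error + self.ki * self.integral


class MotorPlant:
    """Simulation of the rest of the product: first-order lag d(speed)/dt = (gain*u - speed)/tau."""

    def __init__(self, gain: float, tau_s: float, dt: float):
        self.gain, self.tau_s, self.dt = gain, tau_s, dt
        self.speed = 0.0

    def step(self, command: float) -> float:
        # One forward-Euler integration step.
        self.speed += self.dt * (self.gain * command - self.speed) / self.tau_s
        return self.speed


def run_mil(controller, plant, setpoint: float, duration_s: float, dt: float):
    """Close the loop: controller output drives the plant, plant output feeds back."""
    trace = []
    measured = plant.speed
    for k in range(round(duration_s / dt)):
        command = controller.step(setpoint, measured)
        measured = plant.step(command)
        trace.append(((k + 1) * dt, measured))
    return trace


def settling_time(trace, setpoint: float, band: float = 0.02):
    """Time at which the output enters and stays within +/- band of setpoint (None if it never does)."""
    tol = band * abs(setpoint)
    settled_at = None
    for t, y in trace:
        if abs(y - setpoint) > tol:
            settled_at = None
        elif settled_at is None:
            settled_at = t
    return settled_at


dt = 0.001
trace = run_mil(PIController(kp=2.0, ki=4.0, dt=dt), MotorPlant(gain=1.0, tau_s=0.5, dt=dt),
                setpoint=100.0, duration_s=3.0, dt=dt)
t_settle = settling_time(trace, setpoint=100.0)
# Hypothetical requirement: settle within 2% of setpoint in under 1.5 s.
assert t_settle is not None and t_settle < 1.5, f"MiL test failed: settling time {t_settle}"
print(f"settled after {t_settle:.2f} s, final speed {trace[-1][1]:.2f}")
```

With these values, the ratio ki/kp equals 1/tau, so the controller zero cancels the plant lag and the closed loop behaves roughly like a first-order system with a 0.25 s time constant, settling in about one second. In a SiL setup, the same harness could drive the compiled controller in place of the Python class.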

By working through these three stages in sequence, you can verify that all systems work as intended before committing to costly physical prototyping. This progressive virtual verification process lets you optimize your system designs, catching potential problems before you move to a physical build. Forward-looking organizations automatically run a portion of these tests on periodic software builds wherever test cases and requirements are well defined. In this way, you can avoid costly integration issues and their associated delays. Just as importantly, engineers can test their designs quickly and easily, far earlier in the development process, when issues are easier to address.
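The step from model to compiled software is commonly checked with back-to-back testing: feed the same inputs to the model and to the implementation, then compare the outputs within a tolerance. Tests like this are easy to run automatically on every periodic build. The minimal Python sketch below assumes a hypothetical 12-bit temperature sensor with a linear scaling, an integer implementation that returns tenths of a degree, and a flag that emulates doing the multiply in a 16-bit variable, to show the sort of overflow such tests catch.

```python
def model_temp_c(counts: int) -> float:
    """Reference model: 12-bit ADC counts to degrees C (hypothetical linear scaling)."""
    return counts * 0.125 - 40.0


def wrap_int16(value: int) -> int:
    """Emulate two's-complement wraparound when a result is stored in a 16-bit integer."""
    return (value + 0x8000) % 0x10000 - 0x8000


def impl_temp_dc(counts: int, wide_math: bool = True) -> int:
    """Integer implementation returning tenths of a degree C, as target code might.

    wide_math=False emulates doing the multiply in a 16-bit variable (a hypothetical bug).
    """
    product = counts * 125
    if not wide_math:
        product = wrap_int16(product)
    return (product - 40_000) // 100  # Python floor division; result in 0.1 degree C steps


def back_to_back(impl, vectors, tol: float = 0.1) -> list[int]:
    """Return every input where implementation and model differ by more than tol degrees C."""
    return [c for c in vectors if abs(model_temp_c(c) - impl(c) / 10) > tol]


vectors = range(4096)  # exhaustive: every possible 12-bit input
print(back_to_back(impl_temp_dc, vectors))  # []
narrow = back_to_back(lambda c: impl_temp_dc(c, wide_math=False), vectors)
print(narrow[0])  # 263, the first input where counts * 125 no longer fits in 16 bits
```

Because a 12-bit input space is small, the sketch tests it exhaustively; for larger input spaces, teams typically sample test vectors instead.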

The use of embedded software in smart, connected products will only continue to grow and evolve. By thoroughly considering software modules during design and vetting them carefully during testing, you can help ensure the success of tomorrow’s connected systems.

Recap

- The amount of embedded software in today’s products is on the rise. This trend isn’t going to slow down, so companies will continue to call on engineers to add intelligent features into tomorrow’s designs.

- System complexity is also on the rise. Because of this, development teams need to engage in multi-domain systems planning from day one. Any decisions regarding E/E system-level designs can impact every networked device or object in your system. It is critical to explore candidate software architectures while gaining insight into the impact of design decisions.

- To ensure a frictionless handoff from systems engineering to software development, it is critical to:

  - clearly define the requirements for functions fulfilled by each software module, and

  - unambiguously communicate these requirements to the developers who will program the modules.

- After you have completed your design, but before you commit to a release, it is important to test the software’s logic and performance using progressive virtual verification. This helps avoid many rounds of prototyping and testing. It also shifts some QA load earlier in development, where engineers can fix issues quickly and easily. Doing so can help you avoid common integration pitfalls and save time, money, and resources.

This is part three of three guest posts by Chad Jackson, who is the Chief Analyst and CEO of Lifecycle Insights. He leads the company’s research and thought leadership programs, attends and speaks at industry events, and reviews emerging technology solutions. Chad’s twenty-five-year career has focused on improving executives’ ability to reap value from technology-led engineering initiatives during the industry’s transition to smart, connected products.

You might also be interested in:

- Part 1 of blog series: An Advanced Approach for E/E Systems Development

- Part 2 of blog series: Generative Engineering: Automation for Electrical Distribution Systems