Safety-Critical Verification in DO-254

I recently read a novel that involved the investigation of the crash of Marine One, the U.S. President’s helicopter. The main character, a former Marine helicopter pilot who was investigating the accident, described a helicopter as “60,000 parts flying in formation yearning to be free of each other.” The same could be said of airplanes, rockets, and any other flying system. Of course, our job is to make sure that the formation holds together, because if it doesn’t, really bad things could happen.

In our most recent Wilson Research industry survey, we discovered that 78% of FPGA design projects had bugs that escaped to production, including a truly alarming 75% of safety-critical designs. We also found that only 28% of safety-critical ASIC designs achieve first-pass silicon success. Clearly, the verification process for safety-critical designs needs improvement. The DO-254 Standard attempts to establish “design assurance” guidelines to achieve this.

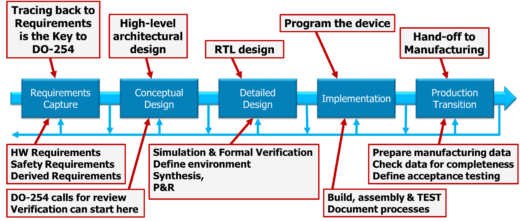

DO-254 breaks the hardware design life cycle down into five separate steps:

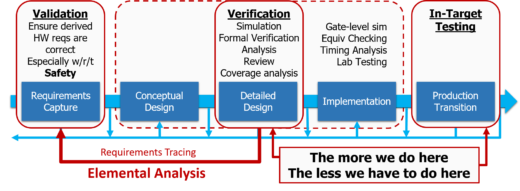

The “design assurance” activities applied at different parts of the flow fall into three separate categories:

- Review: a manual process of reading code or examining documentation (block diagrams, schematics, etc.) to determine correctness.

- Analysis: a partially or fully automated process in which software tools are applied to examine the functionality, performance, and/or safety implications of a hardware item and its relationship to other functions, providing evidence that a requirement is correctly implemented.

- Test: a manual or automated process to confirm that the hardware item correctly responds to stimuli in its intended operational environment.

The key to improving verification is to apply “analysis” to as much of the design flow as possible. Reviews depend on how closely the participants are paying attention, so they can hardly be used as proof that the design is correct. (The possible exception is one engineer I worked with many years ago, who achieved first-pass success on a complicated design without using simulation, but he is clearly an outlier.) Certain things still have to be tested in the final hardware system, such as pinout connectivity and register access, to validate that the hardware system was built properly. The analysis process helps us ensure that the right thing was built.

The requirements are really the heart of the whole process. The validation of requirements is still essentially a manual review process, but it’s critical that we have a tool like ReqTracer that allows us to correlate all verification activities against those requirements. Most verification will be done in the Detailed Design step, mostly on the RTL design but also including gate-level simulation and lab testing, and the more we can do at this stage, the less we have to worry about during in-target testing. The way to improve safety-critical verification is to apply advanced tools, methodology, and automation as much as possible. At the same time, we need a clear correlation from every piece of the design to a set of tests and back to the initial requirements, so that we can continuously monitor our progress throughout the process.
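To make the idea of correlating verification activities against requirements a little more concrete, here is a minimal sketch of a traceability check in Python. It is not how ReqTracer works and does not use its API; the requirement IDs, activity names, and the `trace` and `summarize` helpers are all hypothetical, chosen only to illustrate the bookkeeping involved.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Requirement:
    req_id: str   # hypothetical ID scheme, e.g. "REQ-001"
    text: str


@dataclass
class VerificationActivity:
    name: str
    method: str        # "review", "analysis", or "test"
    covers: List[str]  # requirement IDs this activity claims to verify
    passed: bool


def trace(requirements: List[Requirement],
          activities: List[VerificationActivity]
          ) -> Tuple[Dict[str, List[VerificationActivity]], List[Tuple[str, str]]]:
    """Map each requirement ID to the activities that cover it.

    Also returns "orphans": (activity name, requirement ID) pairs where an
    activity points at a requirement that does not exist.
    """
    matrix: Dict[str, List[VerificationActivity]] = {r.req_id: [] for r in requirements}
    orphans: List[Tuple[str, str]] = []
    for act in activities:
        for rid in act.covers:
            if rid in matrix:
                matrix[rid].append(act)
            else:
                orphans.append((act.name, rid))
    return matrix, orphans


def summarize(matrix: Dict[str, List[VerificationActivity]]
              ) -> Tuple[List[str], List[str]]:
    """Return (uncovered requirement IDs, requirement IDs with a failing activity)."""
    uncovered = sorted(rid for rid, acts in matrix.items() if not acts)
    failing = sorted(rid for rid, acts in matrix.items()
                     if any(not a.passed for a in acts))
    return uncovered, failing


# Hypothetical example data, for illustration only.
requirements = [
    Requirement("REQ-001", "Outputs shall be held inactive during reset."),
    Requirement("REQ-002", "Status register shall be readable over the bus."),
    Requirement("REQ-003", "Watchdog shall assert a fault after a timeout."),
]
activities = [
    VerificationActivity("sim_reset_sequence", "analysis", ["REQ-001"], True),
    VerificationActivity("lab_pinout_check", "test", ["REQ-002"], False),
    VerificationActivity("code_review_fsm", "review", ["REQ-001", "REQ-009"], True),
]

matrix, orphans = trace(requirements, activities)
uncovered, failing = summarize(matrix)

print("Uncovered requirements:", uncovered)
print("Requirements with failing activities:", failing)
print("Activities citing unknown requirements:", orphans)
```

Even a toy report like this surfaces the three questions that matter for monitoring progress: which requirements have no verification activity at all, which have one that is currently failing, and which activities do not trace back to any known requirement.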

I’d love to hear from you about the safety-critical verification challenges you are facing, so please comment below or join me online, Thursday November 2nd, for a webinar where I’ll provide “An Introduction to DO-254 and Advanced Verification.” For more information and to register for the webinar, please go here. I hope you’ll be able to join us.
