Data Excellence Through Data-Centric AI

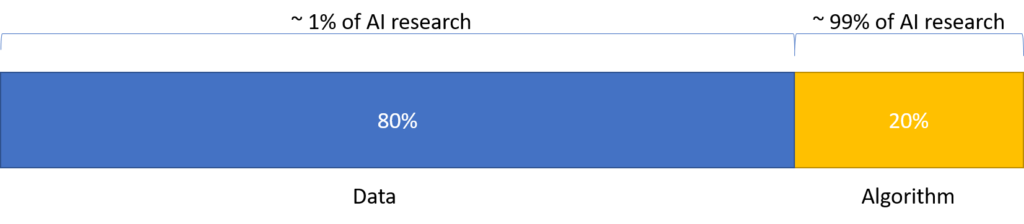

In the last 6 to 9 months, a new phenomenon grabbed a lot of attention in the AI community, thanks (again) to Dr. Andrew Ng, called Data-Centric AI. As we know, data and algorithms are paramount for building an AI system. Machine learning algorithms feed on data. Also, working with data is a significant part (~ 80%) of a machine learning project compared to the modeling work (~ 20%). Still, a study that looked at recent research work revealed that 99% of the research focuses on improvements to the modeling aspect in machine learning. With merely 1 % of research on the data aspect, there is a need to draw the attention of the AI community to fill this immense void.

Data Scarcity

Another trigger point for the need to pay special attention to the data is an urge to apply AI to the industry. Unlike big internet companies, the datasets needed to solve the industry use case are non-existent or small. As AI practitioners for industrial AI, we often face data scarcity, and squeezing more out of the scarce resources is probably the only way around.

Model-Centric vs. Data-Centric

In a typical machine learning project lifecycle, after the initial data preparation and exploration, the attention is on model training and parameter tuning most of the time. The data is held fixed in such a “model-centric” setting, and iterations are performed over model code/algorithms to improve performance. Although we can not undermine the importance of innovative modeling architectures responsible for the tremendous success in AI in recent years, the returns in terms of performance improvements start to diminish after a certain point. Dr. Ng bats for a data-centric mindset while executing your machine learning project.

Hold the code/model/algorithm fixed and iterate over data to improve the performance.

Dr. Andrew Ng

The data-centric mindset can be stimulated by questions like

- How do I define and collect high quality data?

- How to modify data instead of code for robust performance?

- How to monitor the data and its critical subsets to mitigate the data/concept drifts?

- How do I ensure consistent, high-quality data throughout the lifecycle of a machine learning project?

Commoditization of AI

The availability of state-of-the-art, push-button models (TF Hub, PyTorch Hub, Hugging Face, etc.), low-code machine learning frameworks (Ludwig, Overton, etc.), and commoditized architectures like Transformers (for both vision as well as language) have made this feasible more than ever. The excitement around data-centric AI is also gaining momentum due to the realization that compared to tweaking model architecture and parameters, more lifts in performance are coming from a deep understanding of the data. Also, due to the AI skills shortage, custom model architectures are difficult to maintain and extend in a production environment.

Compared to tweaking models, more gains are coming from deep understanding of the data.

Data-Centric AI is an effort to systematize a sustainable way of putting machine learning to work on real use-cases by helping AI/ML practitioners understand, program, and iterate on data instead of endlessly tweaking models. So, instead of asking “how do we construct the best model,” we probably need to ask, “how do we feed best (quality) data to these models.”

If you think about it, these concepts have been around for quite some time but practiced in an ad-hoc manner. The excitement around data-centric AI should galvanize the researchers and companies to focus more attention on building data-centric tools and frameworks, enabling a systematic and principled approach towards data excellence by improving data quality throughout the lifecycle of a machine learning project.