The application of Model-Based Systems Engineering – ep. 5 Transcript

In this fifth and last episode of the model-based systems engineering (MBSE) podcast series, I am joined again by Tim Kinman, Vice President of Trending Solutions and Global Program Lead for Systems Digitalization at Siemens Digital Industries Software. We are also continuing to speak with Matt Bromley, Vice President, Product Strategy and Technology, and Mark Malinowski, MBSE Solutions Director – Siemens EDA.

The experts today discuss the challenges that can be solved by automating tasks within the design process. We will also learn about the benefits of continuous verification which impacts product design adaptability. Lastly, we discuss the future of MBSE and the role that increased complexity will play in the world of electronics.

Read the transcript (below) or listen to the audio podcast.

Nick Finberg: Welcome to our fifth podcast in our series on Model-based Systems Engineering or MBSE. We are again interviewing Tim Kinman, Vice President of Trending Solutions & Global Program Lead for Systems Digitalization at Siemens Digital Industries Software. And we are continuing to talk with Matt Bromley and Mark Malinowski, also of Siemens, discussing the impact of MBSE in the aerospace and automotive industries.

All right. So, you must digitalize, and you have to make sure everything’s accountable. Are you guys working towards making it automatable as well? I know many other domains are trying to figure out ways to make certain structures without having to devote any engineering time to it. I’m sure with having billions of transistors, you have some amount of that. But can you talk a little bit to it?

Matt Bromley: I can talk a little bit to that in the board space. And I think there are a couple of levels of automation which are necessary as we move forward. I think we should start off small and talk about automation around verification, which is very common in the software world. Being able to take a set of requirements that match the architectural breakdown, and that have been parameterized in a way that lets us automate their verification, would be a big step forward. We’re close in a few areas, but we still have a lot of humans in the loop in that process. At the end of the day, when I come back and say, “I verified this board,” that’s really reliant on a group of specialized individuals saying, “Yes, it’s going to meet those requirements.”

We need to move that to being more automated for multiple reasons. Firstly, those are generally very expensive resources, and they are kind of scarce. The more we can automate, the more we can make sure that that is guaranteed up front. Secondly, there is design automation and how we can maybe bring in some new technologies around AI and ML; that’s a little bit of a different approach. But we’re certainly looking at it and how that can be leveraged both to increase designer productivity and to increase simulation accuracy. So, how can I see what I need to simulate rather than looking at an entire design and saying, “Hey, let me just try and simulate the entire thing,” which is maybe a little bit more practical with the regularity of the IC side than on the board side? Also, how can I use AI to work out what I need to simulate, based on the areas that have proven to need simulation in prior examples?

Nick Finberg: So, you are almost using it to find the edge cases.

Matt Bromley: Yes, exactly. And then kind of the holy grail of how can you start looking at it to do generative design? How from a set of requirements can we start moving into generative design? But of course, that’s a little bit further away.

Mark Malinowski: Clearly, there’s a spectrum of automation based on efficiency improvements. If we just look at things in the near term that we think we can attack as we are refining architecture, as Tim keeps highlighting, what are the attributes of elements of those architectures that will enable the specification and constraint of the design of those elements and implementation of those elements in such a way that it facilitates verification? That’s an area that needs more work. We’re starting to scratch the surface on that in enhancing the architecting tools so that, number one, we just highlight to the author, the architect doing the refinement, that those attributes are important and necessary, and how they need to be put in there to leverage and semi-automate their use. So, that’s an area that we’re going to tackle first as we try to build this commonality across the electronics domains of architecture.

Nick Finberg: Almost like you’re using autopilot for verification, in that you have your end state that you know you need to hit. So, how do I get there?

Mark Malinowski: Certainly, you have the conditions of the end state. And, if I can’t see the runway, I better make sure my instrumentation knows where it is. That kind of stuff. So, if I have a particular element that I’m going to have to drive a design with, I’m going to have some attribute of bandwidth, or power, or latency, or CPU utilization, or some aspect that’s going to be a refinement of something such as, “Don’t crash the plane or the car.” Right?
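
[Illustration, not part of the recorded conversation: a minimal sketch of what "parameterized" requirements on attributes such as power, bandwidth, and latency could look like, so that a tool rather than a person reports pass or fail. The `Requirement` class, the `verify` function, the requirement IDs, and all numbers are hypothetical and are not taken from any Siemens product.]

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Requirement:
    """A requirement expressed as a bound on one named attribute (hypothetical schema)."""
    req_id: str
    parameter: str
    minimum: Optional[float] = None
    maximum: Optional[float] = None


def verify(requirements, measured):
    """Map each requirement id to 'pass', 'fail', or 'unverified'."""
    results = {}
    for req in requirements:
        value = measured.get(req.parameter)
        if value is None:
            # No simulation or measurement result exists for this attribute yet.
            results[req.req_id] = "unverified"
            continue
        above_min = req.minimum is None or value >= req.minimum
        below_max = req.maximum is None or value <= req.maximum
        results[req.req_id] = "pass" if (above_min and below_max) else "fail"
    return results


# Made-up requirements and simulation results for one board element.
requirements = [
    Requirement("REQ-PWR-1", "power_w", maximum=5.0),
    Requirement("REQ-BW-1", "bandwidth_gbps", minimum=10.0),
    Requirement("REQ-LAT-1", "latency_us", maximum=2.0),
]
simulated = {"power_w": 4.2, "bandwidth_gbps": 8.0}

results = verify(requirements, simulated)
for req_id, status in results.items():
    print(req_id, status)
```

Reporting "unverified" separately from "fail" is a deliberate choice in this sketch: it makes visible which requirements still depend on a person's judgment because no result has been produced for them.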

Nick Finberg: So you are ensuring upfront that you have a somewhat reasonable path to get to a verified state, which you’re verifying at the end. With each addition of technology, whether you’re adding a new component to the board, rerouting a trace on the board, or switching to a new package, are those initiating verification points within the entire development process, or are you doing that continuously?

Matt Bromley: That would be a good end state. I think maybe it’s worth cycling back. Clearly, it’s a good end-state, Nick. Every time you make a change, you need to verify that change against a set of requirements – a continuous verification goal. And there’s plenty of examples of how that works in software. There are many challenges along the way. The architectural decomposition from a system into multi-domain electronics hasn’t been well solved in the electronics domain today. So, you still see that most of the simple architectural breakdown and a digital thread of that architectural breakdown is broken. Consequently, you get a lot of PowerPoint and Visio in the middle of that process, and this breaks the digital thread, creates misinterpretation, and prevents having architectural continuity. Because what you’re really doing is taking this system-level breakdown that somebody came up with, passing it across to a hardware architect, then putting their interpretation on it, and then re-entering the information they gave in the system capture. So, in electronics, that would be schematic capture. But there is no electronic digital thread that’s even linking that architectural decomposition process. And we see that across the industry today where we don’t see that piece of the problem being well solved. So, this is prerequisite number one; and we think we can solve that and help the industry solve it. But that’s the first step in where we want to go.

Tim Kinman: And from that standpoint, it’s not just about having traceability of the requirements to engineering, but it’s about traceability of the analysis of impact regardless of where the change is occurring. Whether I have a requirement change, a system change, or interface change; I need to be able to have that traceability all the way down to the board level to understand the impact.

Matt Bromley: Yes, that’s right. And both ways. One is, if the requirement changes, what implemented that requirement, so I can deal with that change impact? And the other is, I had to change this component out because it went end-of-life. This would be a supply chain issue, which is very common with today’s supply chain challenges. You can’t get the silicon that you need, so you change it out for something else. Then, what requirement do I need to re-verify that against? Because that may have a slightly different spec. So, even that bi-directional connection of requirements, so you can do change analysis, is critical.
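
[Illustration, not part of the recorded conversation: a minimal sketch of the bi-directional link between requirements and components, answering both questions raised here. The `TraceGraph` class, the requirement IDs, and the component names are hypothetical.]

```python
from collections import defaultdict


class TraceGraph:
    """Keeps requirement-to-component links queryable in both directions."""

    def __init__(self):
        self._implemented_by = defaultdict(set)  # requirement -> components
        self._satisfies = defaultdict(set)       # component -> requirements

    def link(self, requirement, component):
        # Store the link on both sides so neither query needs a full scan.
        self._implemented_by[requirement].add(component)
        self._satisfies[component].add(requirement)

    def components_for(self, requirement):
        """Requirement changed: which components implement it?"""
        return sorted(self._implemented_by.get(requirement, ()))

    def requirements_for(self, component):
        """Component swapped (e.g. end-of-life): which requirements need re-verification?"""
        return sorted(self._satisfies.get(component, ()))


# Made-up trace links for a small board.
trace = TraceGraph()
trace.link("REQ-PWR-1", "U7 regulator")
trace.link("REQ-PWR-1", "U3 processor")
trace.link("REQ-LAT-1", "U3 processor")

print(trace.components_for("REQ-PWR-1"))       # impact of a requirement change
print(trace.requirements_for("U3 processor"))  # impact of a part substitution
```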

Tim Kinman: Well, you mentioned supply chain, and this is having a huge impact not only on the OEMs or the engineering partners, but on the manufacturing supply chain as well. Imagine if you’ve missed a requirement, or if you failed on integration and spent a lot of money in the manufacturing process only to find out that it doesn’t work. So, is the supply chain changing the way they work, too? How are they evolving? As this complexity increases more and more, how’s the supply chain evolving? In general, I think our expectations are that everybody is being forced to change. And it’s just a matter of what degree of change is being forced upon you. Therefore, some of the traditional companies that had long lead times to do fabrication are under more pressure to ensure what they build. This is shown in today’s market by the limitations on silicon for chip manufacturing and shortages of chips, whether it’s in automotive or everywhere. So, it is becoming a scarce commodity. Subsequently, people don’t want to have situations where they have failure late in the manufacturing process.

Matt Bromley: If you just take the example you went through, Tim, about the silicon supply chain of chips. I mean, there’s plenty of news certainly in the automotive industry, like Toyota cut production by some percentage that’s equivalent to several billion dollars of market opportunity. So, bringing the supply chain resilience into the design process is going to be critical moving forward. There is no point designing if you can’t manufacture. Having visibility into that supply chain is going to be critical as well. And, the future availability of silicon that is being manufactured is one aspect of that. The other, of course, is where is that supply chain being consumed? And are you going to be able to get access to the elements of the supply chain that are required to meet your manufacturing demand? So, I think it’s related in terms of being able to verify an overall architecture and then take that down into implementation. Therefore, what we’re going to see is a requirement to have more visibility into the supply chain and design for the supply chain resilience moving forward.

Mark Malinowski: And maybe a more indirect answer to your question, Tim, is this effect of “90 percent yield, 50 percent success once it gets to the customer.” That, oftentimes, is ambiguity in the requirements or specifications. So, the supplier manufacturer can demonstrate that what they’re shipping to their customer meets the specification, but it doesn’t work in the customer’s system. So, what you see as the indirect effect of that is the customer and end-user putting more pressure on the supplier to say, “I need more insight into how you’re refining your requirements and your verification of them, so you can help me help us both to close this gap so that 90 percent yield translates much closer to 90 percent success rate.”

Tim Kinman: And I would expect in that supply chain, if they’re not adopting these model-based principles and working that way, the main product manufacturer is going to bring that in-house, saying, “Okay, I can’t afford to have errors late in the game. I’m either going to do it myself with some custom chip type of technology and development, or I’m going to change suppliers.”

Mark Malinowski: Yes, and I think we see that exact behavior coming with some of the thought leaders at the supplier level, and at level two and level three suppliers, for example, in the DOD ecosystem. The DOD and the branches are demanding more system models be delivered earlier in the design cycle so they can try to attack this problem. And the suppliers are saying, “These models that they’re asking for aren’t going to help us close that gap.” So, we see industry commonization on the customers’ way to express system models and consume refinements of that system model to embrace the needs of the supplier and their design flows, especially electronics design and manufacturing flows, so that those seams in between the two are closed.

Nick Finberg: Well, that sounds like a very good vision for the future of electronics and MBSE. Before we wrap up, do you guys have any other things that you’re hoping for in the next five to 10 years? What do you see as plotting the course for EDA with MBSE?

Mark Malinowski: One of the areas we’re focusing on is this idea of how do we hybridize a digital twin? Digital twins don’t just magically show up. They’re developed in parallel while the subsystem or the system is being developed. And we need to shift left and get visibility sooner that we’re building the right thing across the supplier boundary. We do that by looking at various techniques of modeling and simulation that may not be accurate, but they’re close enough to tell us that we’re in the right range of performance. So, in many cases, 10 percent inaccuracy might be a showstopper. But when you’re early in your estimation of one architecture trade-off against another, a 10 percent inaccuracy is still good enough to eliminate architectures that are going to be too high a risk of either not succeeding initially or not being able to sustain any kind of growth in capability through the deployment cycle.

Nick Finberg: Because even 50 percent accuracy is better than zero if you didn’t do anything.

Mark Malinowski: No visibility and guesswork, correct.
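
[Illustration, not part of the recorded conversation: a minimal sketch of how a coarse estimate with a known error band can still rule architectures in or out early. The `screen` function, the 100-microsecond latency limit, the candidate names, and the estimates are all made up; only the 10 percent figure comes from the discussion.]

```python
def screen(candidates, limit, rel_error=0.10):
    """Classify estimates (lower is better) against an upper limit.

    Each estimate is assumed to be within +/- rel_error of the true value.
    """
    verdicts = {}
    for name, estimate in candidates.items():
        best_case = estimate * (1 - rel_error)
        worst_case = estimate * (1 + rel_error)
        if best_case > limit:
            # Even the most optimistic reading misses the limit.
            verdicts[name] = "eliminate"
        elif worst_case <= limit:
            # Even the most pessimistic reading meets the limit.
            verdicts[name] = "keep"
        else:
            # The error band straddles the limit, so the coarse model cannot decide.
            verdicts[name] = "needs higher-fidelity model"
    return verdicts


# Made-up latency estimates in microseconds for three candidate architectures.
estimates = {"arch_a": 60.0, "arch_b": 95.0, "arch_c": 130.0}
verdicts = screen(estimates, limit=100.0)
for name, verdict in verdicts.items():
    print(name, verdict)
```

Only the candidate whose error band straddles the limit needs the more expensive, more accurate simulation; the other two are decided by the coarse model alone.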

Matt Bromley: We talked about complexity at the start. And even though we sort of hear that, in the pure IC space, Moore’s Law may be starting to become a limitation, the complexity of electronics continues to grow at the rate it’s grown. We see a significant emphasis on packaging and 3D IC as a potential way of introducing more capability onto a single package. And we don’t see any slowdown in the overall complexity increase in electronics, where it’s being used, and what the requirements are around electronics that are going to continue to drive that complexity. So, we’re witnessing this complexity curve continue, and our customers are looking for help managing these complexities. So, this is a problem that we’re going to focus on and help our customers solve. It is certainly starting to come to the forefront of much of the thinking that customers are bringing to us.

Tim Kinman: So, although you started the call with a little bit of a history lesson there, Matt, I think it’s almost like “Hang on, because you ain’t seen nothing yet.” It’s going to go faster. Right?

Matt Bromley: Exactly. I mean, if you look at that complexity curve, all the history, it’s continued to accelerate, and I see that that’s going to continue moving forward. Although I imagine your gas pump is going to stay pretty much the same.

Nick Finberg: All right, thank you all. It’s been an awesome conversation. Thank you.

Matt Bromley: Thanks, Nick.

Tim Kinman: Fantastic. Thanks all.

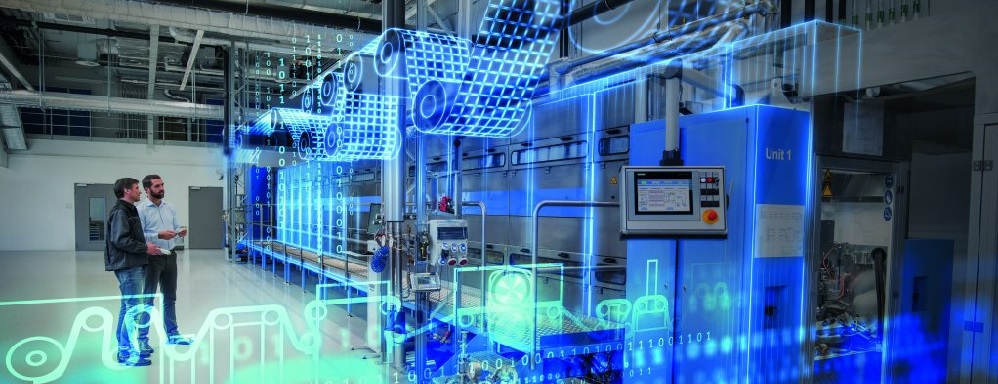

Siemens Digital Industries Software is driving transformation to enable a digital enterprise where engineering, manufacturing and electronics design meet tomorrow.

Xcelerator, the comprehensive and integrated portfolio of software and services from Siemens Digital Industries Software, helps companies of all sizes create and leverage a comprehensive digital twin that provides organizations with new insights, opportunities and levels of automation to drive innovation.

For more information on Siemens Digital Industries Software products and services, visit siemens.com/software or follow us on LinkedIn, Twitter, Facebook and Instagram. Siemens Digital Industries Software – Where today meets tomorrow