All Detailed Thermal IC Package Models are Wrong… Probably

‘Rubbish In -> Rubbish Out’ is a well-accepted fact in the world of simulation. Regardless of the technical capabilities of your thermal simulation tool, the accuracy of its predictions will always be tightly coupled to the accuracy of the input data. The most accurate thermal IC package model is a so-called ‘detailed’ model, in which all the internal construction is represented explicitly in terms of geometric sizes and material properties. Certain parts of the package construction are easily measurable and well characterised. Other parts are much less so, especially highly resistive thermal interface layers. Unfortunately, such layers are the largest thermal bottleneck to heat flow in the package, so the operating junction temperature (and its prediction) is most sensitive to them.

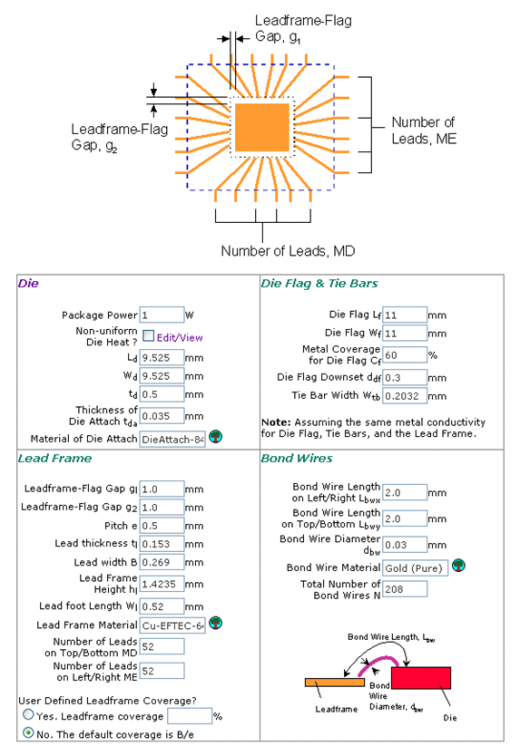

Tools such as FloTHERM PACK, a pre-processor for FloTHERM and FloTHERM PCB, automate thermal IC package model creation. The parametric definition of such models requires such things as die attach bond line thickness (BLT) and material properties to be defined; for spreaders, knowledge of the density and specific heat of the metal is a prerequisite for subsequent transient simulation studies. The question then is: to what level of accuracy is that data known? Manufacturing quality, or quality assurance of the manufacturing process, will assure knowledge of, say, a TIM's BLT (assuming no die attach voiding), but what about its thermal conductivity? What about determining the specific heat of a novel plastic encapsulant material?
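
To see why an uncertain conductivity matters even when the BLT is well known, here is a minimal sketch using the one-dimensional conduction formula R = t / (k · A). The die size, bond line thickness and conductivity range are hypothetical values chosen for illustration, not data from any real package or from FloTHERM PACK.

```python
def layer_resistance(thickness_m, conductivity_w_mk, area_m2):
    """1-D conduction resistance of a planar layer, R = t / (k * A), in K/W."""
    return thickness_m / (conductivity_w_mk * area_m2)


# Hypothetical die attach: 25 um bond line under a 5 mm x 5 mm die.
blt = 25e-6          # bond line thickness, m (assumed well known)
area = 5e-3 * 5e-3   # die footprint, m^2

# Assumed spread of uncertainty in the die attach conductivity, W/(m K).
for k in (1.0, 2.0, 4.0):
    r = layer_resistance(blt, k, area)
    print(f"k = {k:.1f} W/(m K) -> R = {r:.2f} K/W")
```

With the thickness held fixed, the layer resistance scales inversely with conductivity, so a factor of two of uncertainty in k is a factor of two of uncertainty in that layer's contribution to the junction temperature rise.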

Short of dissecting a physical sample of the package, studying it with an SEM and characterising the material properties of its constituent parts (if you have enough of each material to achieve even that!), what choices do you have?

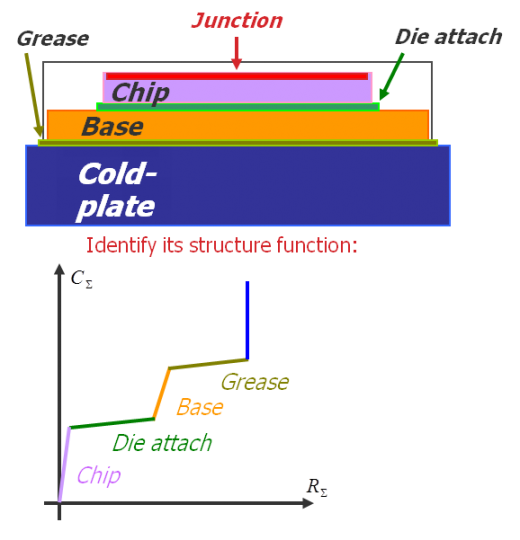

Structure functions of an IC package, showing the thermal resistances and thermal capacitances inside the package, can be derived from experimental measurements using our T3Ster system, simply by changing the power dissipation of a package and measuring the resulting change in junction temperature over time. Think of it as a thermal CAT-scan.

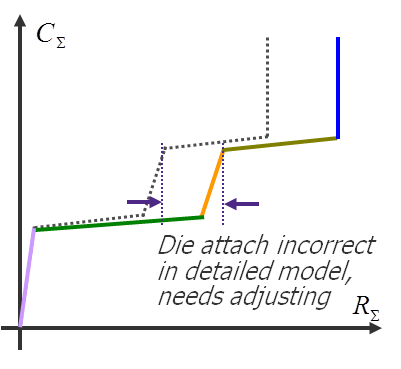

The structure function is plotted as a graph of the thermal resistance vs. the thermal capacitance that the heat encounters as it travels from the junction (the origin) to the surrounding ambient.
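
As an illustration of what such a curve contains, the sketch below builds the points of a cumulative structure function from a one-dimensional RC ladder (a Cauer-type network), summing resistance and capacitance stage by stage from the junction outwards. The stage values and layer names are hypothetical, and this is not how T3Ster derives the curve from a measurement; it only shows what the axes mean.

```python
from itertools import accumulate


def cumulative_structure_function(stages):
    """Return (cumulative R, cumulative C) points for an RC ladder.

    `stages` is a list of (R, C) pairs ordered from junction to ambient,
    with R in K/W and C in J/K.
    """
    r_cum = list(accumulate(r for r, _ in stages))
    c_cum = list(accumulate(c for _, c in stages))
    return list(zip(r_cum, c_cum))


# Hypothetical heat flow path, junction first.
ladder = [
    (0.05, 0.002),  # die
    (1.00, 0.001),  # die attach: high resistance, little capacitance
    (0.20, 0.500),  # spreader: low resistance, large capacitance
    (2.00, 5.000),  # board to ambient
]

for r, c in cumulative_structure_function(ladder):
    print(f"R_cum = {r:5.2f} K/W, C_cum = {c:7.3f} J/K")
```

A resistive, low-mass layer such as the die attach shows up as a long run in resistance with almost no gain in capacitance, while a metal spreader shows up as a jump in capacitance over a short span of resistance.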

Now consider doing the same thing numerically in FloTHERM. Take your detailed IC package model, simulate its thermal response to a change in its power dissipation and derive a numerical structure function. Compare that with the ‘true’ experimentally derived curve and you can identify exactly where the numerical model is erroneous … and by how much.
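
The comparison can be sketched as follows, assuming both curves are available as lists of (cumulative R, cumulative C) points with strictly increasing capacitance. The function names, the interpolation on a logarithmic capacitance axis and the sample data are all assumptions made for illustration; they are not part of FloTHERM or T3Ster.

```python
import math


def resistance_at(curve, c_query):
    """Interpolate cumulative R at cumulative C = c_query, linearly in log(C).

    `curve` is a list of (R_cum, C_cum) points with strictly increasing C_cum.
    """
    for (r0, c0), (r1, c1) in zip(curve, curve[1:]):
        if c0 <= c_query <= c1:
            w = (math.log(c_query) - math.log(c0)) / (math.log(c1) - math.log(c0))
            return r0 + w * (r1 - r0)
    raise ValueError("c_query lies outside the range of the curve")


def deviation(measured, simulated):
    """Return (C_cum, R_simulated - R_measured) at each measured point
    that falls inside the capacitance range of the simulated curve."""
    c_min, c_max = simulated[0][1], simulated[-1][1]
    return [(c, resistance_at(simulated, c) - r)
            for r, c in measured if c_min <= c <= c_max]


# Hypothetical curves: the simulated model underestimates the die attach
# resistance by 0.5 K/W and is otherwise identical to the measurement.
measured = [(0.05, 0.002), (1.05, 0.003), (1.25, 0.503)]
simulated = [(0.05, 0.002), (0.55, 0.003), (0.75, 0.503)]

for c, dr in deviation(measured, simulated):
    print(f"C_cum = {c:.3f} J/K: simulated - measured = {dr:+.2f} K/W")
```

The point along the capacitance axis where the deviation first appears indicates which layer is mis-modelled, and the size of the offset indicates by how much.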

The process is then one of calibration: the numerical model is adjusted, the structure function is re-derived and compared again, iteratively hunting down all the numerical model errors. At the end of that process the numerical model is certified for subsequent steady-state and transient simulation purposes with unrivalled accuracy.
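
A minimal sketch of one such calibration loop is shown below, reduced to a single unknown: the die attach conductivity. The closed-form `model_resistance` function is a hypothetical stand-in for re-running the full simulation, and bisection is just one simple search strategy chosen for illustration; all numbers are assumed.

```python
def model_resistance(k_die_attach, blt=25e-6, area=25e-6, r_other=0.25):
    """Stand-in for a full simulation: junction-to-spreader resistance, K/W.

    blt (m), area (m^2) and r_other (K/W) are assumed, hypothetical values.
    """
    return r_other + blt / (k_die_attach * area)


def calibrate(target_r, k_low=0.1, k_high=100.0, tol=1e-6, max_iter=200):
    """Bisect on conductivity until the model resistance matches target_r.

    Assumes the model resistance falls monotonically as conductivity rises
    and that the target lies between the resistances at k_low and k_high.
    """
    k_mid = 0.5 * (k_low + k_high)
    for _ in range(max_iter):
        k_mid = 0.5 * (k_low + k_high)
        error = model_resistance(k_mid) - target_r
        if abs(error) < tol:
            break
        if error > 0:
            k_low = k_mid    # model too resistive: conductivity must be higher
        else:
            k_high = k_mid   # model too conductive: conductivity must be lower
    return k_mid


# Hypothetical measured resistance read off the experimental structure function.
k_fit = calibrate(target_r=0.75)
print(f"calibrated die attach conductivity: {k_fit:.3f} W/(m K)")
```

In practice each evaluation would be a fresh transient simulation and several parameters would be tuned, typically working outwards from the junction so that each layer is fixed before the next is adjusted.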

Of course, this detailed model calibration process wouldn’t be required if you knew all the input data to an acceptable level of accuracy. Package suppliers would be much closer to this end point than end users, who (unless their vendor provides a detailed model) would have no other course of action than to attempt to reverse engineer such a calibrated model themselves. Either way, both sides of the supply chain would benefit from such a calibration methodology.

If this isn’t done there is increased risk of: inaccurate input data to a simulation, inaccurate temperature prediction, bad thermal design decisions and costly business failures. No one wants that.

20th October 2011. Ross-on-Wye