Neural Networks – Artificial Experts or Simple Pattern Matching?

In my last blog, I introduced some of the technology challenges of realizing machine learning, specifically when it comes to using deep neural networks (NNs) in autonomous vehicles. The hype surrounding this topic is huge, with an almost unfathomable number of papers, startups and university departments focusing on ‘deep learning’ as the solution to whatever application is currently popular. So what is driving this trend towards deep learning?

Research on neural networks started in the 1940s but proceeded slowly for decades. If we go back 20-30 years, neural networks were still very simple tools. Computational power limited the size of the input vector and the number of neurons/layers within the network, while the availability of training data limited the number of examples a network could be exposed to. As a result, networks were little more than highly effective, yet highly specific, pattern matching tools. However, there has always been a search for ‘generality’ in order to make NNs more ‘expert’.

This search for generality led to the use of larger training data sets with more variation in example classes, which in turn led to methodologies for deriving machine-useful features in the input data vector (see the use of convolutional layers). Finally, it has resulted in the need for large numbers of neurons/layers in order to map the complex relationship between the large variety of input vectors and their associated classes. However, the goal of imbuing neural networks with ‘common sense’ remains elusive despite massive investment, including by some of the world’s largest and most powerful tech companies. Indeed, many of the same challenges identified decades ago remain more or less unsolved today.

Consider this thought experiment: take your coffee cup full of your favorite morning brew and place it right at the edge of your desk. Now try to ignore that cup while you work. It’s quite an uncomfortable feeling, right? You likely recognize that if the coffee cup falls (however unlikely), it may well break and splatter liquid, and that cleaning up will take effort, especially if your desk sits on carpet. That is, your common sense tells you that something isn’t right about leaving the cup there. It is extremely difficult, if not impossible, to ‘teach’ an NN these second- and third-order cost/reward considerations that most of us would call ‘common sense’.

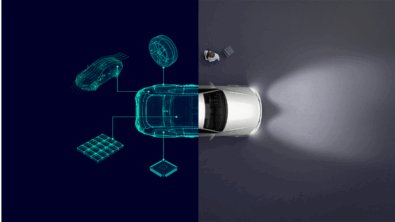

The DRS360 system (see video) under development at Mentor Graphics builds these current limitations of neural networks into our design concept for object classification. Specifically, we go back to the original and somewhat simple concept underpinning neural networks. In its simplest form, a neural network is a connectionist system that derives the optimal numerical model relating a pre-clustered set of input vectors to their appropriate labels. Each new and unknown input is essentially a test of how well the new candidate fits the user-defined cluster that the model represents. As such, the simplest artificial neural network (ANN) can be thought of merely as a highly specific yet highly efficient pattern recognition tool.
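To make the ‘pattern matching’ view concrete, here is a minimal sketch in Python. It is not the DRS360 implementation: it fits a single logistic neuron to two pre-clustered sets of made-up 2-D input vectors. The data, the function names (`train_neuron`, `fit_score`) and the hyperparameters are all assumptions chosen purely for illustration.

```python
import math
import random


def sigmoid(z):
    # Clamp the pre-activation so math.exp can never overflow.
    z = max(-60.0, min(60.0, z))
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(samples, labels, epochs=200, lr=0.5):
    """Fit one logistic neuron with plain stochastic gradient descent."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            err = p - y  # gradient of the log-loss w.r.t. the pre-activation
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def fit_score(weights, bias, x):
    """How well does candidate x fit the cluster labelled 1 (0.0 to 1.0)?"""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Two hand-made clusters of hypothetical feature vectors (assumed data).
random.seed(0)
cluster_a = [(random.gauss(0.0, 0.3), random.gauss(0.0, 0.3)) for _ in range(50)]
cluster_b = [(random.gauss(2.0, 0.3), random.gauss(2.0, 0.3)) for _ in range(50)]
samples = cluster_a + cluster_b
labels = [0] * 50 + [1] * 50

weights, bias = train_neuron(samples, labels)
near_b = fit_score(weights, bias, (2.1, 1.9))   # candidate close to cluster B
near_a = fit_score(weights, bias, (0.1, -0.2))  # candidate close to cluster A
print(f"fit to cluster B: near_b={near_b:.3f}, near_a={near_a:.3f}")
```

A candidate near the second cluster should score well above 0.5 and one near the first cluster well below it. The neuron has learned nothing beyond how well a point fits the clusters it was shown.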

The DRS360 solution is to design very simple, very shallow classifiers that are highly effective at a specific task. These simple classifiers are organized into a cascading tree architecture, where each node asks how confidently a selected object fits into a specific class (e.g. vehicle, then vehicle from rear/front/side; pedestrian; push-bike; etc.). Different branches represent different types of objects, while different levels in the architecture represent different levels of fidelity. Each node is trained independently, using data that is specific to that task.
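The cascading tree can be sketched roughly as follows. This is an illustration under stated assumptions, not the DRS360 code: the `ClassifierNode` type, the feature names (`width_m`, `height_m`, `taillight_score`), the hand-written scoring functions standing in for trained classifiers, and the descent rule (follow the most confident child, stop when confidence drops below a threshold) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Features = Dict[str, float]


@dataclass
class ClassifierNode:
    label: str
    score: Callable[[Features], float]  # confidence in [0, 1]
    threshold: float = 0.5
    children: List["ClassifierNode"] = field(default_factory=list)


def classify(roots: List[ClassifierNode], features: Features) -> List[str]:
    """Descend the cascade and return the labels from coarse to fine."""
    path: List[str] = []
    candidates = roots
    while candidates:
        scored = [(node.score(features), node) for node in candidates]
        best_score, best = max(scored, key=lambda pair: pair[0])
        if best_score < best.threshold:
            break  # no node at this level is confident enough
        path.append(best.label)
        candidates = best.children  # next level = finer fidelity
    return path


# Stand-ins for independently trained shallow classifiers (assumed logic).
def vehicle_score(f: Features) -> float:
    return min(1.0, f["width_m"] / 1.8)


def pedestrian_score(f: Features) -> float:
    return 1.0 if f["width_m"] < 0.8 and f["height_m"] > 1.2 else 0.1


def rear_view_score(f: Features) -> float:
    return f["taillight_score"]


def side_view_score(f: Features) -> float:
    return 1.0 - f["taillight_score"]


ROOTS = [
    ClassifierNode("vehicle", vehicle_score, children=[
        ClassifierNode("vehicle/rear", rear_view_score),
        ClassifierNode("vehicle/side", side_view_score),
    ]),
    ClassifierNode("pedestrian", pedestrian_score),
]

car = {"width_m": 1.9, "height_m": 1.5, "taillight_score": 0.9}
person = {"width_m": 0.5, "height_m": 1.7, "taillight_score": 0.0}
debris = {"width_m": 0.5, "height_m": 0.5, "taillight_score": 0.0}

print(classify(ROOTS, car))     # coarse label, then the finer view label
print(classify(ROOTS, person))
print(classify(ROOTS, debris))  # empty path: nothing fits confidently
```

Because each node only answers one narrow question, a node can be retrained or replaced without touching the rest of the tree.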

Common sense is built into the system in two steps. Firstly, the driving scenario dictates which classification algorithms are selected. Only the classifiers that provide context to the scene at hand are selected, which greatly reduces the volume of computation needed to classify a target effectively. Secondly, the training data is selected to include both explicit labels (e.g. features that are hand selected) and implicit features that are specific to that training task, for example, selecting only vehicles on the highway for highway classification, or selecting vehicle images/data where the sky, road and background are visible. That is, both the branch and the level are chosen according to the driving task. For instance, it will never be necessary to classify vehicles from a side-on view on the highway. Even if one exists, it is already classified as a hazard because the sensor fusion system detects it as a ‘stationary’ object. Thus, common sense is built into the training data selection and labelling process, where both explicit labels (e.g. this object is a vehicle, this object is a pedestrian) and implicit labels (e.g. the car is usually on the ground) allow the network to have ‘common sense’ without knowing it.
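The first step, letting the driving scenario decide which classifiers run at all, might look something like the sketch below. The scenario names, the classifier lists and the `plan_classification` function are assumptions made for illustration; only the highway rule (no side-on vehicle classifier, stationary objects already flagged as hazards by sensor fusion) comes from the description above.

```python
from typing import List, Optional, Tuple

# Hypothetical mapping from driving scenario to the classifiers worth running.
CLASSIFIERS_BY_SCENARIO = {
    "highway": ["vehicle_rear", "vehicle_front"],
    "urban": ["vehicle_rear", "vehicle_front", "vehicle_side",
              "pedestrian", "push_bike"],
}


def plan_classification(scenario: str,
                        is_stationary: bool) -> Tuple[Optional[str], List[str]]:
    """Return (label already known, classifiers still to run) for one object."""
    if scenario == "highway" and is_stationary:
        # Sensor fusion has already flagged the object; no classifier is needed.
        return "hazard", []
    return None, list(CLASSIFIERS_BY_SCENARIO[scenario])


moving_on_highway = plan_classification("highway", is_stationary=False)
stopped_on_highway = plan_classification("highway", is_stationary=True)
moving_in_town = plan_classification("urban", is_stationary=False)

print(moving_on_highway)
print(stopped_on_highway)
print(len(moving_in_town[1]), "classifiers considered in the urban scenario")
```

In this toy setup the highway scenario runs two classifiers instead of five, which is the kind of reduction in computation the scenario-driven selection is meant to deliver.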

The principal outcome of this work will be presented at the 27th Aachen Colloquium Automobile and Engine Technology in October. Our presentation will outline the process of building a cascaded, multi-level architecture of classifiers and how each classifier/ANN is taught with a focus on expert knowledge. Furthermore, we will outline this system using data collected on the road, with automotive-grade sensors on our autonomous driving test vehicle.

Comments



Nice article!! I remembered the Radial basis function network, multilayer training algorithms, etc… AI has a great future ahead !!

This article is very helpful for those who are learning about neural networks. Thank you.
