Application Development and Quality Assurance

This is part six of a seven-part series on the growing importance of automotive software. Click here to read part one. You can also download our whitepaper, or visit siemens.com/aes to learn more.

Large-scale trends in the automotive industry are making embedded software development more fundamental to vehicle development overall. Vehicle electrification, connectivity, automation, and shared mobility are driving a need for remarkably sophisticated software to enable features like advanced driver assistance systems (ADAS), battery management, vehicle-to-everything (V2X) communication, and more.

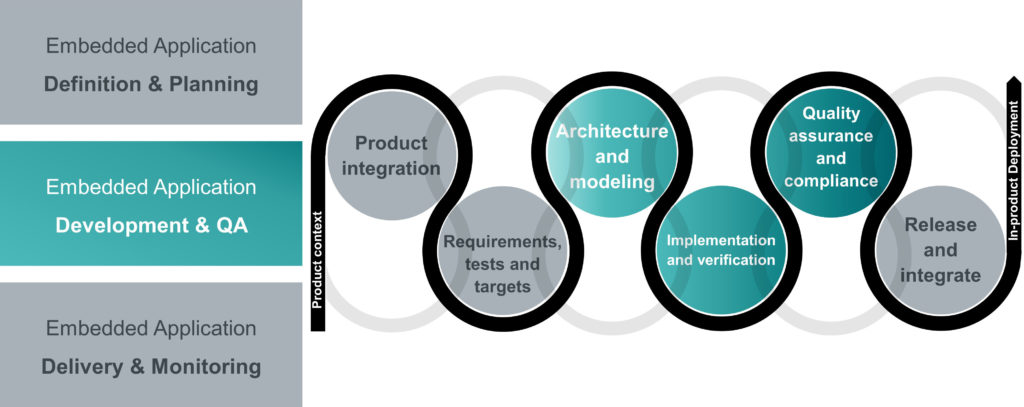

In part six, we continue through the automotive embedded application development process with a look at application development and quality assurance (figure 1).

Software engineers are already deeply involved in the development process as they start to evaluate software architectures and construct software models to prove out the functionality required by the system level definition. Many OEMs and suppliers have adopted a model-driven software development approach for their application development. The architecting and modeling processes are intended to verify and validate the software’s functionality before any code is written or modified.

As models are constructed and evaluated, software engineering teams begin sprints and assign coding tasks to implement the models. It is imperative that software engineers ensure consistent modeling practices across the software-component architecture. Consistent models ensure that teams can perform accurate trade-off analyses to optimize the architecture for the vehicle or platform-level needs.

Process Inconsistencies

OEMs usually use vehicle milestones to track system-level vehicle features, changes, and updates. Meanwhile, software development is accomplished through fast-paced AGILE and hybrid AGILE flows. The discrepancy between these development methodologies can create checkpoint issues that hinder progress.

Additionally, conflicting development methodologies complicate the tracing and visibility of information between teams. During testing, especially hardware-in-the-loop (HiL) or driver-in-the-loop (DiL) testing, it is critical that software teams know what they are testing for and, more importantly, why. This includes knowing what changes have been made to which data artifacts, what triggered each change (requirements, specifications, risk mitigations, etc.), which software builds to use, and which hardware abstraction levels are required for specific test methods. In the other direction, the teams responsible for implementation need visibility into test results so they can make updates and resolve issues uncovered in testing.

Application Development & QA with a Unified Platform for Application Development

Fortunately, solutions are available today that help track and manage the complex and concurrent tasks involved in the application development and quality assurance process. When it comes to task management, these modern solutions can be configured to automatically assign new work items to team members based on a set of conditions specified on a project basis.
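
As a rough illustration of condition-based assignment, the sketch below routes a work item to the first rule whose condition matches. It is not the API of any particular product: the `WorkItem` fields, the rule set, and the role names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class WorkItem:
    title: str
    component: str  # illustrative labels such as "ACC" or "AEB"
    kind: str       # illustrative labels such as "code_change" or "test"
    assignee: Optional[str] = None


@dataclass
class AssignmentRule:
    condition: Callable[[WorkItem], bool]
    assignee: str


def assign(item: WorkItem, rules: list[AssignmentRule], default: str = "triage") -> WorkItem:
    """Assign the item to the first rule whose condition matches, else to `default`."""
    for rule in rules:
        if rule.condition(item):
            item.assignee = rule.assignee
            return item
    item.assignee = default
    return item


# Hypothetical per-project rules; order matters because the first match wins.
PROJECT_RULES = [
    AssignmentRule(lambda i: i.kind == "test", "test_engineer"),
    AssignmentRule(lambda i: i.component in {"ACC", "AEB"}, "adas_developer"),
]

item = assign(WorkItem("Merge AEB logic into ACCM", "AEB", "code_change"), PROJECT_RULES)
print(item.assignee)
```

Keeping the rules as plain data per project means the conditions can change without touching the assignment logic.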

These solutions also include iterative planning tools that can estimate task completion based on empirical data. Team leaders or application owners can define the conditions for a completed workflow. For example, a workflow might only be marked complete once its documentation is submitted. Users can also attach supporting documentation, such as component and design requirement specifications, to ensure functional and quality consistency.
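
The article does not say how such tools compute their estimates. One common approach is velocity-based forecasting, which divides the remaining work by the average work completed in past sprints. The sketch below assumes that approach; the function name and the story-point unit are illustrative.

```python
import math
from statistics import mean


def sprints_remaining(remaining_points: float, past_velocities: list[float]) -> int:
    """Estimate the sprints needed, rounding up to whole sprints.

    Assumes work is sized in story points and that past velocity
    is a reasonable predictor of future velocity.
    """
    if not past_velocities:
        raise ValueError("need at least one completed sprint to estimate")
    velocity = mean(past_velocities)
    if velocity <= 0:
        raise ValueError("average velocity must be positive")
    return math.ceil(remaining_points / velocity)


# 45 points left, with an average of 20 points per sprint over three sprints.
print(sprints_remaining(45, [18, 22, 20]))  # prints 3
```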

Accountability for the performance of development tasks and change implementation is also assured. Teams can ensure that a software release or build has all of the planned updates, with full traceability for each source code modification to the relevant change request.
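
A minimal sketch of such a traceability check follows. It assumes a convention in which every commit message cites a change request as `CR-<number>`; that convention, the `Commit` type, and the sample data are assumptions made for illustration, not something the platform prescribes.

```python
import re
from dataclasses import dataclass

# Assumed convention: commit messages cite change requests as "CR-<number>".
CR_PATTERN = re.compile(r"\bCR-\d+\b")


@dataclass(frozen=True)
class Commit:
    sha: str
    message: str


def audit_build(commits: list[Commit], planned_crs: set[str]) -> tuple[list[str], list[str]]:
    """Return (commits that cite no change request, planned CRs no commit cites)."""
    untraced: list[str] = []
    covered: set[str] = set()
    for commit in commits:
        refs = set(CR_PATTERN.findall(commit.message))
        if not refs:
            untraced.append(commit.sha)
        covered |= refs
    missing = sorted(planned_crs - covered)
    return untraced, missing


commits = [
    Commit("a1", "CR-101: merge AEB logic into ACCM"),
    Commit("b2", "tidy whitespace"),
    Commit("c3", "Adjust signal timing (CR-102, CR-101)"),
]
untraced, missing = audit_build(commits, {"CR-101", "CR-102", "CR-103"})
print(untraced, missing)  # prints ['b2'] ['CR-103']
```

The check runs in both directions: it flags modifications with no change request behind them, and planned changes that never reached the build.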

Quality assurance and compliance certification are typically continuous processes throughout development. Test cases, test plans, and test vectors for both virtual and physical testing are defined while requirements, architectures, and application code are developed and matured. With a unified platform for application development and orchestration, testing is driven directly from the requirements to ensure the quality of the delivered application binary and associated files. Requirements-driven testing can also begin earlier in development to prevent costly late-stage defects.
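
One way to picture requirements-driven testing is as a mapping from requirement IDs to the test cases that verify them, which makes coverage gaps visible as soon as requirements are written. The sketch below assumes such a mapping; the `VerificationCase` type and the ID formats are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationCase:
    case_id: str
    verifies: frozenset[str]  # IDs of the requirements this case verifies
    level: str                # illustrative labels such as "SiL" or "HiL"


def uncovered_requirements(requirement_ids: list[str], cases: list[VerificationCase]) -> list[str]:
    """Return the requirements that no test case verifies."""
    covered: set[str] = set()
    for case in cases:
        covered |= case.verifies
    return sorted(set(requirement_ids) - covered)


def cases_for_requirement(requirement_id: str, cases: list[VerificationCase]) -> list[str]:
    """Return the IDs of the cases to run when a given requirement changes."""
    return [c.case_id for c in cases if requirement_id in c.verifies]


cases = [
    VerificationCase("TC-1", frozenset({"REQ-1"}), "SiL"),
    VerificationCase("TC-2", frozenset({"REQ-1", "REQ-2"}), "HiL"),
]
print(uncovered_requirements(["REQ-1", "REQ-2", "REQ-3"], cases))  # prints ['REQ-3']
print(cases_for_requirement("REQ-1", cases))  # prints ['TC-1', 'TC-2']
```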

Example: Implementing a System Level Change

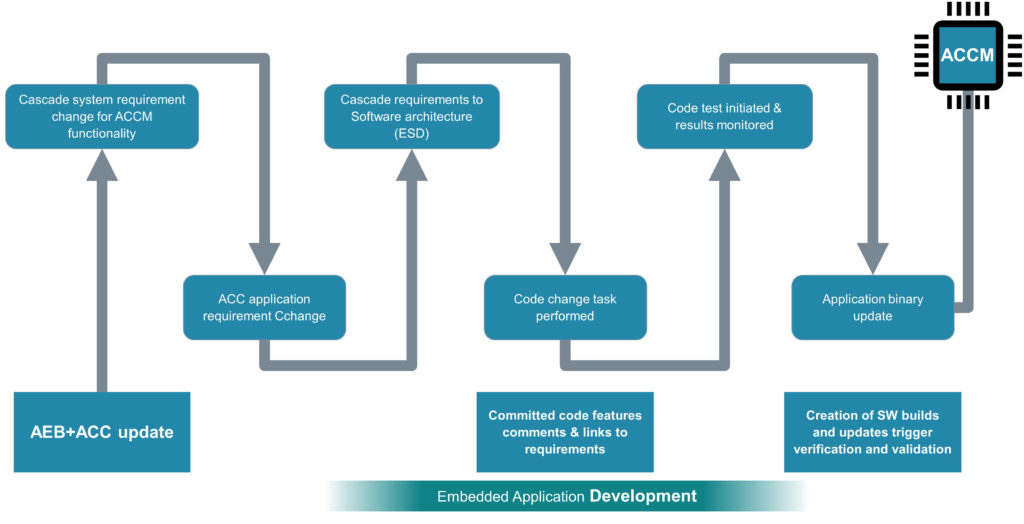

In part five, we introduced the example of implementing a system-level change that combines the automatic emergency braking (AEB) and adaptive cruise control (ACC) features on the adaptive cruise control module (ACCM). The process continues as software architects, developers, and test engineers execute and verify code level changes (figure 2).

The changes made to the software architecture in Embedded Software Designer (ESD), or an equivalent solution, prompt a sprint to change the software code. Progress on the sprint is traced all the way back to the engineering change notice (ECN) in the PLM solution, and to the system level vehicle milestones tracking the readiness of the combined AEB and ACC feature. The software architect can also assign code level changes with C-shell exports describing the new functional implementation.

The software developer uses the updated software architecture to identify code changes that need to be made. Before the developer implements the changes, they can verify them against the system level definition through links between the application development platform and System Modelling Workbench (SMW). This connection enables the software developer to understand the system implication before committing changes to the code.

Once the code changes are committed, the application engineering platform prompts the software test engineer to conduct software-in-the-loop (SiL) testing. This notification includes all the relevant requirements and test cases. When the SiL test results come back positive, the platform triggers a hardware-in-the-loop (HiL) test on an emulated ECU. The HiL test flags a failed input parameter on the CAN signal, caused by a delay in the CAN signal timing. The issue is resolved with an update to the CAN signal sampling interval. The application engineering platform tracks the discovery and resolution of this issue to demonstrate the quality of the delivered application.
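
The gating described above, where HiL runs only after SiL passes and every outcome is recorded, can be sketched as a small staged pipeline. Everything here is a stand-in: the runners are stubs rather than calls to real SiL or HiL tooling, and the configuration key, its values, and the stub's pass threshold are arbitrary. The article does not say how the sampling interval was changed, so the direction of the fix in this sketch is also an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StageResult:
    stage: str
    passed: bool
    note: str = ""


@dataclass
class VerificationPipeline:
    # Ordered (name, runner) pairs; each runner returns (passed, note).
    stages: list[tuple[str, Callable[[], tuple[bool, str]]]]
    history: list[StageResult] = field(default_factory=list)

    def run(self) -> bool:
        """Run stages in order and stop at the first failure; log every result."""
        for name, runner in self.stages:
            passed, note = runner()
            self.history.append(StageResult(name, passed, note))
            if not passed:
                return False
        return True


# Hypothetical configuration value, used only by the stub below.
config = {"can_sampling_interval_ms": 20}


def run_sil() -> tuple[bool, str]:
    return True, "all SiL cases passed"


def run_hil() -> tuple[bool, str]:
    # Arbitrary stub threshold standing in for the timing issue in the example.
    if config["can_sampling_interval_ms"] > 10:
        return False, "CAN input parameter failed: signal timing delay"
    return True, "all HiL cases passed"


pipeline = VerificationPipeline([("SiL", run_sil), ("HiL", run_hil)])
first_run = pipeline.run()              # HiL fails with the initial setting
config["can_sampling_interval_ms"] = 10
second_run = pipeline.run()             # passes after the interval update
for result in pipeline.history:
    print(result.stage, result.passed, result.note)
```

Because `history` keeps the failed HiL run alongside the later passing one, the same record that gates the pipeline also documents how the issue was found and resolved.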

The embedded application engineering platform supports the use of AGILE processes for updating software code despite the use of milestone-based development at the product level. As developers engage in sprints, the application engineering platform ensures that changes are made in the context of system level constraints. The platform also ensures that the software test engineers know exactly what to test and why they are performing the tests. Facilitating SiL and HiL tests enables the engineers to catch issues early in development, reducing the cost required for resolution.

Conclusion

The software component architecture and modeling tasks verify and validate that component interactions achieve the desired functionality. As models become more robust and complete with verification and validation, code changes and updates can be completed and tested with software-in-the-loop (SiL) testing. Engineers can then perform model-level updates and test again to ensure consistency, compatibility, and overall accountability, with the reports and audit trails needed to demonstrate them.

Working from software models not only speeds up the process but also makes it possible to apply methods such as SOTIF (Safety Of The Intended Functionality), which help ensure that the software works as intended and that hazards are prevented by design. Incorporating SOTIF methods complements standard functional safety approaches that mitigate risks by employing safety goals that assume faults will occur. This combination produces exceptionally robust automotive embedded software applications.

A unified software engineering platform ensures data consistency despite constant changes, keeping all the parties in the development process continuously involved in delivering quality software applications that are compatible with the full range of vehicle variability. Such a platform achieves this data coherency through robust integrations with the various tools used in application development, and powerful change management capabilities.

You can continue reading about application development and quality assurance processes in our whitepaper. The final part of our blog series will discuss the delivery, monitoring, and maintenance of embedded applications. To read the previous blog on application definition and planning, click here.

To learn how a unified application development platform can help companies meet functional safety standards, like ISO 26262, please register for our upcoming webinar, Increase automotive functional safety with ISO 26262 compliance.

About the author: Piyush Karkare is the Director of Global Automotive Industry Solutions at Siemens Digital Industries Software. Over a 25-year career, Piyush has built a proven history of improving product development and engineering processes in the electrical and in-vehicle software domains. His specialties include integrating processes, methods, and tools as well as mentoring product development teams, determining product strategy, and facilitating innovation.
