Can autonomous vehicles predict the future?

Car manufacturers use technology to drive sales. As proof, try this little experiment. Sit down for a couple of hours in front of your TV watching a channel that has commercials. Take note of each car commercial that features some sort of technology to sell you on the vehicle. It might be some fancy and futuristic feature or a safety solution built with electronics and software.

Many times, the featured technology is a precursor to autonomous driving technology, such as:

- Automatic parking

- Lane departure warning

- Collision avoidance

- Automatic braking

- Adaptive cruise control

- Over-the-air software updates

Almost always, these whiz-bang features are labeled “available,” which is a polite way of saying that you will pay extra for them. This is genius. The car companies catch your attention with a futuristic feature that might compel you to buy the car. Then, they make you pay extra for the feature. All the while, they get to use you and others as a test bed for technology that will be employed in future autonomous vehicles.

It is clear that AI-driven object recognition is the key ingredient for autonomous vehicles. These systems learn from a huge database of objects, and they connect to mechanical systems in the vehicle in order to react correctly to driving scenarios. What happens if these systems do not recognize the scenario? What if there were a new way to build these types of AI systems? Perhaps we should look at how humans deal with driving scenarios.

It is my theory that humans remember unusual situations best. We store them away in case they arise again so that we can react correctly the next time we see them. For example, when I was 15, I was on the road with a neighbor who was teaching me to drive. I suddenly slowed the car down when I recognized a scenario from all my years of riding shotgun as a kid. I stopped, and my instructor asked what I was doing. I predicted that the car in front of us was going to stop and then back into a parking spot on our right, parallel parking style. That turned out to be exactly what the other driver did. My instructor asked how I knew that was going to happen. I had no answer at the time. But I now believe that I had stored this unusual situation in my memory and pulled it up in a fraction of a second because I saw it as a potential hazard.

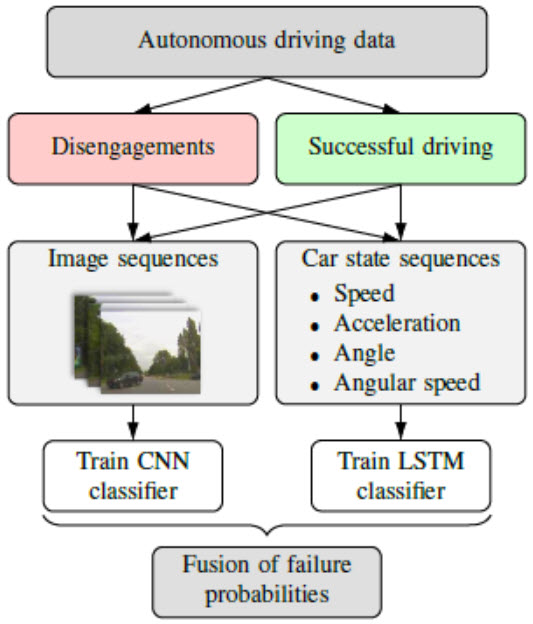

These “bad” driving scenarios are what a research team at the Technical University of Munich (partnering with BMW) exploited in their AI system, which provides an early warning of hazardous situations. Instead of training a system to recognize every object on the road, they trained their system on thousands of real-world traffic situations that BMW’s automated cars in the field had been unable to handle. They focused on unusual situations like the one I remembered from my past.

Their system employs cameras and sensors to monitor conditions around the vehicle, and it keeps track of visibility, speed, weather conditions, and the current steering wheel position. A recurrent neural network recognizes patterns in the current driving situation that match the bad driving scenarios it was trained on.
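To make the idea a little more concrete, here is a minimal sketch of how a recurrent model with late fusion might combine vehicle state and camera information into one warning score. This is not the researchers' implementation. The one-unit recurrent cell, the feature names, the hand-picked weights, and the equal-weight averaging are all assumptions chosen for illustration; a real system would learn its weights from recorded driving data.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def recurrent_score(sequence, w_in, w_rec, bias, w_out=4.0):
    """Run a one-unit Elman-style recurrent cell over a sequence of
    feature vectors and map the final hidden state to a score in (0, 1)."""
    h = 0.0
    for features in sequence:
        pre = sum(w * x for w, x in zip(w_in, features)) + w_rec * h + bias
        h = math.tanh(pre)  # hidden state carries memory of earlier frames
    return sigmoid(w_out * h)

def late_fusion(state_score, camera_score, state_weight=0.5):
    """Late fusion: each branch scores the scene on its own, and the two
    scores are combined only at the end (here, a weighted average)."""
    return state_weight * state_score + (1.0 - state_weight) * camera_score

# Hypothetical, untrained weights picked by hand for this example.
STATE_W = dict(w_in=[1.5, 2.0, -1.0], w_rec=0.5, bias=-1.0)
CAMERA_W = dict(w_in=[2.0], w_rec=0.5, bias=-1.0)

def failure_risk(state_seq, camera_seq):
    return late_fusion(
        recurrent_score(state_seq, **STATE_W),
        recurrent_score(camera_seq, **CAMERA_W),
    )

# State features per frame (assumed): [speed, steering magnitude, visibility],
# each scaled to the range 0..1.
calm_state = [[0.3, 0.0, 1.0]] * 3
risky_state = [[0.9, 0.6, 0.3]] * 3

# Camera feature per frame (assumed): a single "scene difficulty" value
# that an image model would produce.
calm_camera = [[0.1]] * 3
risky_camera = [[0.9]] * 3

calm_risk = failure_risk(calm_state, calm_camera)
risky_risk = failure_risk(risky_state, risky_camera)
print(f"calm: {calm_risk:.3f}, risky: {risky_risk:.3f}")
```

With these made-up weights, the fast, hard-steering, low-visibility sequence scores far higher than the calm one. The point of late fusion is that either branch can be swapped out or retrained without touching the other.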

BMW states that this system can predict critical driving situations with 85 percent accuracy. But the big story is that these predictions come up to seven seconds before the situation occurs. In bad situations, humans often have less than a second to react. Seven seconds is an eternity. This is technology that has the potential to save lives.
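A little arithmetic shows how much room seven seconds buys. The sketch below compares the distance a car covers during a seven-second warning with the distance covered in one second. The 100 km/h speed is my own assumption for illustration; it does not come from the research.

```python
def distance_travelled_m(speed_kmh, seconds):
    """Distance in meters covered at a constant speed (km/h) over a time span."""
    return speed_kmh / 3.6 * seconds  # 1 m/s equals 3.6 km/h

speed_kmh = 100.0  # assumed highway speed, not a figure from the study

warning_distance = distance_travelled_m(speed_kmh, 7.0)   # about 194 m
reaction_distance = distance_travelled_m(speed_kmh, 1.0)  # about 28 m

print(f"7 s of warning: {warning_distance:.0f} m")
print(f"1 s to react:   {reaction_distance:.0f} m")
```

At that speed, the early warning arrives while the hazard is still nearly two football fields away, instead of a few car lengths.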

Just like humans, if AI systems can store bad driving memories, they can quickly predict what will happen next and direct the vehicle to perform the appropriate action. I like this method much better than a system that just looks for objects and reacts. It is more human.

To get all the details on this new AI idea, search for the journal article entitled “Introspective Failure Prediction for Autonomous Driving Using Late Fusion of State and Camera Information.”