The rise of AI Factories: accelerating the design and operations of next-gen data centers

AI infrastructure is entering a new era, one where data centers are no longer just storing information, but actively producing intelligence. These data centers, also known as “AI factories,” introduce a new level of complexity, driven by three forces: the need to build faster, operate more efficiently under tight power constraints, and engineer and validate hardware that doesn’t yet exist.

In this blog, we’ll break down the core challenges shaping AI factory development, the emerging metrics that will define success, and why traditional approaches are no longer enough. We’ll also explore how a new generation of tools, like Siemens’ Digital Twin Composer, helps teams design, build, and operate these facilities with greater speed, coordination, and confidence.

Like any factory, AI infrastructure has a fundamental constraint: power. Today, the world cannot generate enough electricity to meet the growing demand for AI. That single fact is reshaping how these facilities are designed, built, and operated, and why a new class of software is urgently needed.


A moment unlike any other in data center infrastructure

Data centers are critical infrastructure that drives digitalization across sectors. As operations scale up, traditional setups are reaching their limits. A traditional data center was, at its core, a building full of servers. The new AI factory is three things at once: a small process-industry plant (because of its liquid cooling demands), a power utility in its own right (because of its enormous electricity draw), and an IT environment. These three worlds have historically never had to understand each other deeply. Now they are forced to co-exist and co-optimize in the same building, on the same compressed timeline.

“We call them AI factories because these future-oriented data centers are actually manufacturing the building blocks of knowledge, the token.” – John DeBoer, Head of Data Center Vertical, Siemens North America.

Several kinds of players are involved in AI factory development. The hyperscalers (Microsoft, Google, Amazon, and Meta) are investing heavily to build out AI factories. Neo-cloud companies are racing to build and hand over the keys even faster. Co-location providers, Engineering, Procurement and Construction (EPC) firms, and a sprawling ecosystem of 150+ technology partners are all scrambling to play a role. The demand is extraordinary, and the physics are unlike anything traditional infrastructure teams have faced before.

Then there is the power problem. It is not a distant concern; it is the central constraint of the entire industry today. The US, and much of the world, simply cannot generate enough electricity to power all the AI factories that companies want to build at the scale they want to build them. That scarcity makes every design decision consequential. Wasted power in one part of the system is not just inefficiency; it is capacity that cannot be recovered.

This is the context in which this article, and the solution it introduces, must be understood. The challenges are real, urgent, and compounding. But so is the progress. This piece explores what designing and operating an AI factory actually demands, who the key players are, and how Siemens’ newly released Digital Twin Composer is helping teams build these factories faster, smarter, and with far greater certainty than was possible before.

The complexity of designing and operating AI factories

As AI infrastructure scales, building what some now call “AI factories” has become one of the most complex engineering challenges in the world. Across conversations with customers, builders, and technology partners, the same three challenges keep emerging. They’re deeply interconnected, and solving one in isolation often makes the others worse.

The first is speed, or “time to token,” how quickly a team can go from concept to a fully operational facility. Every delay means lost output. While reference designs help, real-world constraints like land, building differences, and retrofits make each project unique. What’s needed isn’t just faster design, but an integrated process that moves efficiently from concept through construction to operation.

The second challenge is efficiency, captured by the idea of “tokens per watt.” Power is no longer just a cost; it’s the limiting factor. In many parts of the world, there simply isn’t enough electricity to meet the growing demand for AI compute. That shifts the question from “how do we reduce power costs?” to “how do we maximize output from the power we have?” Answering it requires thinking beyond individual components. Even if each piece of a system is efficient on its own, the overall system can still perform poorly if it isn’t designed holistically.
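To make the system-level point concrete, here is a minimal Python sketch of how losses compound along a power delivery chain. The stage names and efficiency figures are illustrative assumptions, not measurements from any real facility, and the model deliberately ignores cooling overhead and load-dependent effects.

```python
from math import prod

# Hypothetical power-delivery stages and their individual efficiencies.
# These values are assumptions chosen only to illustrate the arithmetic.
STAGES = {
    "transformer": 0.98,
    "ups": 0.95,
    "distribution": 0.97,
    "rack_power_supply": 0.94,
}


def delivered_fraction(stage_efficiencies):
    """Return the fraction of input power left after every stage in series."""
    return prod(stage_efficiencies)


fraction = delivered_fraction(STAGES.values())
print(f"Worst single stage: {min(STAGES.values()):.2f}")
print(f"End-to-end fraction delivered: {fraction:.3f}")  # about 0.849
```

Every stage in this sketch is at least 94% efficient, yet only about 85% of the input power reaches the load. Under these assumptions, the combined chain is less efficient than any single stage in it.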

The third challenge is perhaps the most difficult: planning for hardware that doesn’t exist yet. Unlike most industries, where improvements are incremental, advances in compute can be exponential. A new generation of chips might be 10x, or even 10,000x, more powerful, with completely different power and cooling requirements. At the same time, physical infrastructure takes years to build, while hardware evolves every 12–18 months. This creates a fundamental mismatch. The only viable way to manage it is through simulation, designing systems that can adapt to future, unknown technologies.
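The mismatch can be put in rough numbers. The sketch below assumes a hypothetical 36-month build (the text only says "years") and uses the 12 to 18 month hardware cadence mentioned above. It is back-of-the-envelope arithmetic, not a planning model.

```python
def generations_during_build(build_months, cadence_months):
    """Number of hardware generations released while a facility is being built."""
    return build_months / cadence_months


BUILD_MONTHS = 36  # assumed build duration, for illustration only

for cadence in (12, 18):
    count = generations_during_build(BUILD_MONTHS, cadence)
    print(f"{cadence}-month cadence: {count:.1f} hardware generations during the build")
```

Under these assumptions, a facility designed today would open two to three hardware generations later, which is why the design has to be validated against hardware that is not yet available.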

As a result, new metrics are emerging. Traditional measures like power usage effectiveness (PUE) are no longer sufficient. Instead, the industry is converging on time to token and tokens per watt, benchmarks that directly reflect speed and output efficiency and will likely define competitive advantage going forward.
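A short Python sketch shows why PUE alone can miss the point. PUE is the ratio of total facility power to IT equipment power. The article does not define "tokens per watt" precisely, so the sketch assumes it means token throughput (tokens per second) divided by total facility power in watts. Both sites and all their figures are hypothetical.

```python
def pue(total_facility_watts, it_equipment_watts):
    """Power usage effectiveness: total facility power over IT equipment power."""
    return total_facility_watts / it_equipment_watts


def tokens_per_watt(tokens_per_second, total_facility_watts):
    """Assumed definition: token throughput per watt of total facility power."""
    return tokens_per_second / total_facility_watts


# Two hypothetical sites with identical power figures but different output.
site_a = {"total_w": 12_000_000, "it_w": 10_000_000, "tokens_per_s": 6_000_000}
site_b = {"total_w": 12_000_000, "it_w": 10_000_000, "tokens_per_s": 9_000_000}

for name, site in (("A", site_a), ("B", site_b)):
    print(
        f"Site {name}: PUE = {pue(site['total_w'], site['it_w']):.2f}, "
        f"tokens per watt = {tokens_per_watt(site['tokens_per_s'], site['total_w']):.2f}"
    )
```

Both sites report the same PUE of 1.20, yet site B produces 50% more output from the same power. PUE measures overhead, not what the IT load actually produces.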

Introducing Siemens Digital Twin Composer for AI Factories

Digital Twin Composer is a newly released solution from Siemens that connects design, simulation, real-time data, and AI into one unified environment, creating a continuous digital thread from the earliest design decisions all the way through to day-to-day operations.

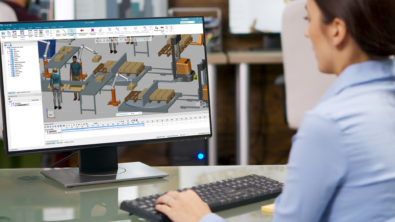

The core idea is deceptively simple but powerful: build twice. Build first in the virtual world, with advanced simulation, multi-discipline validation, and rapid iteration. Then build in reality, with the speed, certainty, and insight that only come from having already stress-tested every major decision in a digital environment. Design problems are solved early, before concrete is poured or infrastructure is purchased. Sizing and layout are optimized against real physics models, not assumptions. And the digital model used to design the AI factory isn’t archived once construction begins. It carries forward into virtual commissioning, so teams can validate the facility’s behavior before a single system goes live.

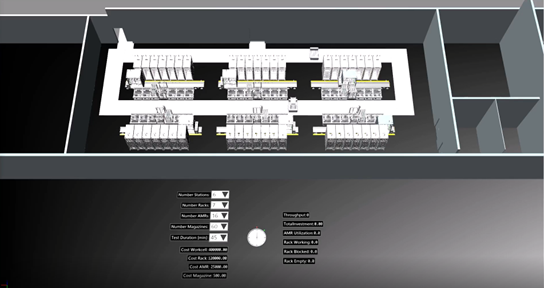

With Digital Twin Composer, you can design and test complex scenarios (thermal, power, layouts, logistics) before build-out to reduce deployment risk and time-to-compute. In operation, the executable twin enables ongoing optimization, predictive analytics, and scenario planning for resilient capacity. Together, these capabilities form the foundation of this next-generation experience and make up the industrial metaverse built on Siemens digital twin technology.

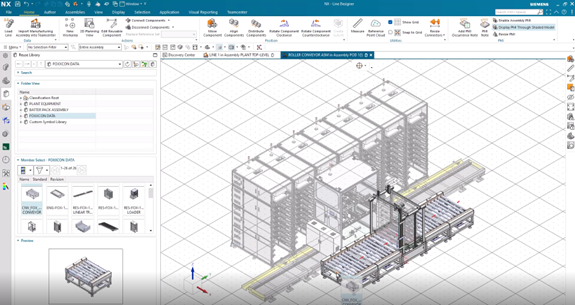

Today’s AI factory projects predominantly use solutions like Simcenter STAR-CCM+, Simcenter Amesim, NX Line Designer, and Simcenter HEEDS. With Digital Twin Composer, it is possible to use all of the solutions listed below, extending your design-to-simulation environment even further and making your data foundation even more robust.

Digital Twin Composer draws on the following underlying technologies from the Siemens Xcelerator portfolio:

- Tecnomatix Plant Simulation: Perform system-level simulations to validate process robustness while experimenting with various system configurations to optimize performance and equipment utilization.

- Tecnomatix Process Simulate: Perform dynamic simulations that directly connect to automation control equipment (PLCs) to validate both equipment performance and control logic functionality.

- Simcenter HEEDS: Perform AI-driven design-space exploration and optimization.

- Simcenter STAR-CCM+ and Simcenter Amesim: Perform physics-based simulations to model liquid and air cooling.

- NX Line Designer: Develop a complete system design with a Teamcenter-managed Bill of Equipment (BOE) while evaluating layout alternatives to ensure production requirements are met.

- Teamcenter: Achieve seamless data and lifecycle management for the complete factory digital twin.

The Digital Twin Composer leads by going beyond 3D visualization and static models. It composes multiple domains, including product, production, facility, infrastructure and operations, into one executable digital twin that doesn’t just visualize, but drives decisions and connects to live operations. It enables real-time collaboration in system context, supports scenario evaluation for industrial decisions and is built on open, ecosystem-ready principles aligned with Siemens Xcelerator. It combines Siemens’ unique strengths in software, simulation, automation and operational technology to connect virtual models to real industrial outcomes. That continuity, from concept through commissioning through operations, is what makes Digital Twin Composer a genuinely new kind of tool for a genuinely new kind of infrastructure challenge.

Who’s in the room? A complex ecosystem of stakeholders

One of the most striking things about the AI factory build-out is how many different types of organizations and disciplines need to collaborate, often on compressed timelines and often without deep prior experience working together. Building an AI factory isn’t the work of a single organization. Hyperscalers, construction firms, engineers, equipment manufacturers, and property developers all play critical roles, each contributing components and expertise. The challenge is that these groups don’t naturally speak the same language. That’s where Digital Twin Composer comes in, bringing together building infrastructure, production systems, and operational intelligence within a single, connected digital thread. It gives people from different backgrounds a common baseline in one unified environment.

For example, a power systems engineer, a thermal cooling specialist, and a real estate developer may all be working toward the same goal of designing and building an AI factory, but from entirely different perspectives. This creates a coordination problem as significant as the technical ones. Success doesn’t just depend on better technology; it depends on better integration across disciplines. In the end, building AI factories is a balancing act. Speed, efficiency, and future-readiness must all be optimized at once, while a complex ecosystem of stakeholders works in sync. The companies that figure out how to manage all three won’t just build faster or cheaper; they’ll define the next era of AI infrastructure.

Digital Twin Composer improves cross-functional collaboration in scenarios like this by giving engineering, simulation, automation, operations, and business teams a shared digital twin for decision-making. It helps users understand and act on complex industrial data more effectively. It accelerates digitalization by replacing fragmented reviews and handoffs with model-based workflows. And it addresses key customer needs around complexity, speed, transparency, and lifecycle integration.

Why advanced simulation is the only path forward for designing and building AI factories

In mature industries such as power plants, process manufacturing, and aerospace, companies have 50 years of accumulated simulation expertise. Specialist engineers use specialist tools. The body of knowledge took decades to build.

AI factories don’t have that luxury. The industry is moving too fast. The disciplines involved are too diverse. And crucially, the hardware being designed is still being invented. Tim Schenk, Principal Key Expert, FT RPD Simulation & Digital Twin at Siemens, frames the mandate clearly:

“The only way to safely design, engineer, commission, and operate these things is to use simulation and the digital twin – because of two things: you can go very fast with simulations, and the complexity and the multidisciplinary nature of what we’re dealing with demands it.”

Bridging these worlds requires more than any single simulation tool can provide. It requires a platform that can bring results from multiple disciplines into a single, comprehensible view, one that an engineer can understand even if they’re not an expert in the underlying simulation domain.

The implications extend beyond design. The same technologies used to validate an AI factory design can, on the same path, enable digital twin-driven operations, monitoring, optimization, and adaptation over the facility’s operational life. The design-to-operations journey is one continuous thread.

“How do I plan a factory 18 months from now to work with the next generation compute and make sure all the physics are right and that thing behaves properly? But that computer isn’t even fully invented yet today. Well, I have to do it in the digital world. I have to simulate it,” explains DeBoer.

The AI factory represents a genuinely new category of infrastructure, one that combines the demands of industrial process engineering, power utilities, and IT at a pace the industry has never seen. The KPIs are forming. The ecosystem of stakeholders is vast and diverse. And the complexity of multi-physics design makes traditional, siloed engineering approaches untenable.

In our next post, we’ll dive into the technical details: what does the actual workflow look like for an engineer using Digital Twin Composer on an AI factory project? What simulation tools are involved, how do they connect, and what tangible business outcomes can customers realistically expect?

Stay tuned to learn how Digital Twin Composer is accelerating the design and building of data centers – from simulation workflows and underlying technologies to the business outcomes AI factory customers can expect.
