Top 10 FloTHERM V10 Features – #7: Super-fast Parallel CFD Solver

I’m sure in the (far) future, product design and manufacture will just involve a big box that you can ask to make things for you. “A leg driven transport device with communication, health monitoring and illumination functions that will last me the week. Please Mr. Box” (or ‘Bob the making box’ as I’d call mine). Out it would pop. Think of the Star Trek: The Next Generation replicator, extrapolated. In the meantime, product design is far more manual, time consuming and, let’s face it, FUN! Time, however, is money, and no matter how much fun it is, it has to be profitable. Waiting around for your FloTHERM thermal simulation to complete is both time consuming and not fun. Maybe not as much now though…

The CFD solver is the core, the nucleus, of any CFD software. Everything upstream of its deployment is intended to serve it (boundary conditions, geometry, mesh etc.) and nothing downstream can happen (results inspection) until it’s done its business. Just like a watched kettle, the CFD solver can appear to take a long time to finish, perhaps mostly because of the feeling of helplessness: all you can do is watch those residuals come down.

I’ve been working in the application and product management side of CFD for all my professional career. I know enough about the maths to be dangerous, not enough to be useful. For FloTHERM V10 we have substantially reworked the CFD solver, especially its parallel performance. Using nothing more than words I’ll attempt to g**k porn a description of what we’ve done…

The main aim of this project was to improve the parallel scalability of the CFD solver. Our experience is that, with a shared-memory approach, care has to be taken to achieve positive scaling above 4 cores. Key to this is focussing on load balancing between the cores. To that end we:

- Modified our data structures to distribute the computational work across the cores more uniformly.

- Modified our linear solvers to take advantage of the better load balancing, including the introduction of a new, more scalable preconditioner.

- Modified our memory management to take advantage of the NUMA (Non-Uniform Memory Access) architecture of modern processors.

- Enhanced the memory layout of our data structures to optimize memory accesses for modern cache-based processors.
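To give a feel for what "distributing the work more uniformly" means, here is a minimal sketch of one textbook load-balancing heuristic (greedy, largest block first). It is not the scheme used inside FloTHERM, which isn't described here; the names `balance_blocks`, `imbalance` and the idea of a per-block "cost" (say, a cell count) are assumptions made purely for illustration.

```python
import heapq


def balance_blocks(block_costs, n_cores):
    """Assign work blocks to cores using a greedy 'largest first' heuristic.

    block_costs: hypothetical cost per block (e.g. number of cells).
    Returns a list with one list of block indices per core.
    """
    # Min-heap of (current load, core index): the least-loaded core is on top.
    heap = [(0, core) for core in range(n_cores)]
    heapq.heapify(heap)
    assignment = [[] for _ in range(n_cores)]

    # Place the most expensive blocks first so the small ones fill the gaps.
    order = sorted(range(len(block_costs)),
                   key=lambda b: block_costs[b], reverse=True)
    for block in order:
        load, core = heapq.heappop(heap)
        assignment[core].append(block)
        heapq.heappush(heap, (load + block_costs[block], core))
    return assignment


def imbalance(block_costs, assignment):
    """Busiest core's load divided by the average load (1.0 is perfect)."""
    loads = [sum(block_costs[b] for b in blocks) for blocks in assignment]
    return max(loads) / (sum(loads) / len(loads))


if __name__ == "__main__":
    costs = [8, 7, 6, 5, 4, 3, 2, 1]  # made-up block sizes
    plan = balance_blocks(costs, n_cores=4)
    print(plan, imbalance(costs, plan))
```

In a shared-memory solver every core has to wait for the slowest one at each synchronisation point, so the closer that imbalance figure is to 1.0, the less time cores spend idle.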

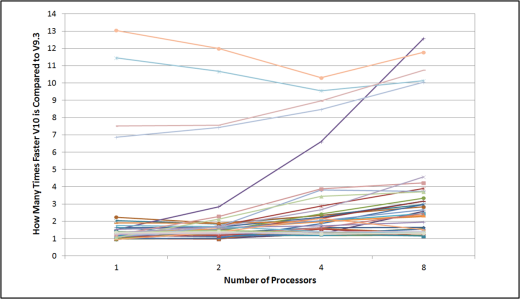

There are many ways to present the performance of parallel CFD solvers. I’ve seen enough graphs showing maximum linear scaling (using N cores results in an N times speed up) that I started questioning their integrity long ago. We decided to focus on the improvements a FloTHERM user would expect to see when moving from V9 to V10. The following graph shows how many times faster V10 is compared to V9, for a range of different models, on 1, 2, 4 and 8 cores.

This being the real world, we found that the relative performance improvement is case specific. The improvement does get better the more cores you use but (surprisingly) the performance is not affected by mesh topology (i.e. the number and layout of localized grid spaces). Some of the changes we made for scalability also had a dramatic effect even when running on a single core!

We tested all models on up to 8 cores and some on up to 32 cores. Continued positive scaling was observed.
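For anyone wanting to produce the same kind of numbers from their own runs, the quantities discussed above are simple ratios of wall-clock times. The sketch below is illustrative only: the function names are my own, and Amdahl’s law is included just as the standard textbook reason why perfectly linear scaling is rarely seen in practice, not as a model of the FloTHERM solver.

```python
def relative_speedup(t_old, t_new):
    """How many times faster the new run is (e.g. V9 time / V10 time)."""
    return t_old / t_new


def parallel_efficiency(t_1core, t_ncores, n_cores):
    """Fraction of ideal linear scaling achieved on n_cores (1.0 is ideal)."""
    return t_1core / (n_cores * t_ncores)


def amdahl_speedup(parallel_fraction, n_cores):
    """Amdahl's law: best-case speedup when only part of the work
    (parallel_fraction, between 0 and 1) can be spread across cores."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_cores)


if __name__ == "__main__":
    # Placeholder timings in seconds, NOT measured FloTHERM results.
    print(relative_speedup(t_old=100.0, t_new=10.0))
    print(parallel_efficiency(t_1core=80.0, t_ncores=20.0, n_cores=8))
    print(amdahl_speedup(parallel_fraction=0.5, n_cores=2))
```

Note that a version-to-version ratio such as `relative_speedup` folds together both better parallel scaling and any single-core gains, which is why it can be a more honest picture of what a user will see than a pure scaling curve.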

Being twice as fast, having to wait half the time, is nothing to be sniffed at. 10 times faster is quite remarkable. Less time to read SF and speculate about the future, more time to get back to the real work of designing good (thermally compliant) product.

18th July 2014, Ross-on-Wye