Efficient training of AI Vision for factory automation part 2

This three-part series examines some of the challenges of training AI for industrial robotics and explores emerging training methods that address those challenges. This post, the second part, examines the two most popular types of algorithm used in factory automation. In the first part we looked at some of the challenges with training. In part 3 we’ll examine emerging techniques that achieve the same or superior results in less time and with fewer resources, both human and capital equipment. To read part one, click here.

Part 2: Major industrial AI algorithms

Endowing robots with human-like motor skills and the ability to perform tasks in a natural way is one of the important goals of robotics, with huge potential to boost industrial automation. A promising way to achieve this is by equipping robots with the ability to learn new skills by themselves, similar to how a human would learn. However, acquiring new motor skills is not a simple task. For robots, the continuous exploration space is massive – a robot can be at any given position at any given time and interact with its environment in infinite ways.

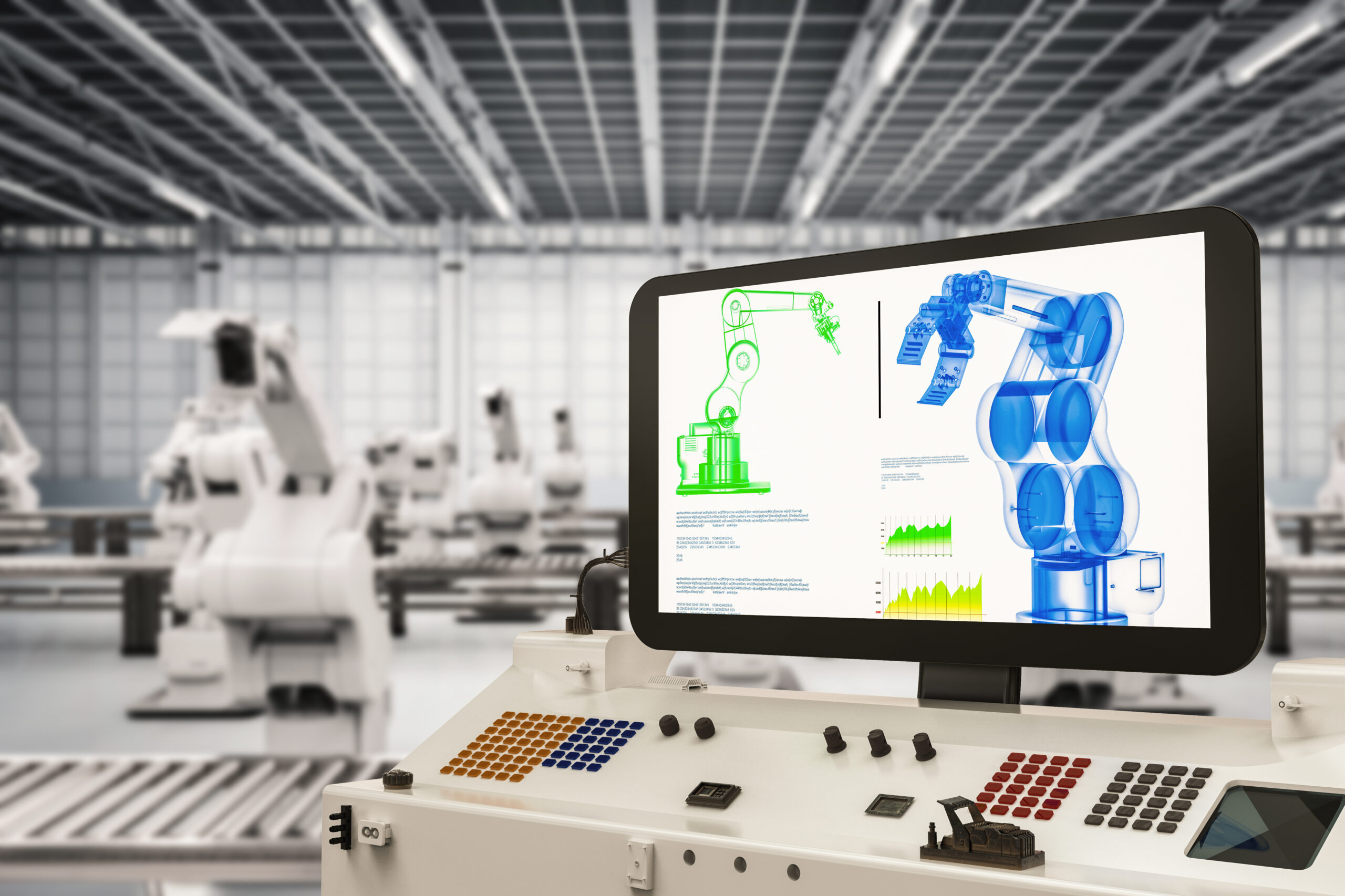

In part 1 of this series, we took a look at the challenges of training Artificial Intelligence (AI) and Machine Learning (ML) algorithms for factory use. Today we’ll be looking at the different types of algorithms that are commonly employed in the factory. The term AI/ML algorithm is a blanket one that covers all the different algorithm types, but in the context of factories, the most popular are Supervised Learning (SL) and Reinforcement Learning (RL). Now let’s take a closer look at them.

First off is supervised learning, an ML approach in which the training data consists of inputs, often images, paired with the correct outputs, such as bounding boxes, descriptive text labels, object color and so on. Training data is gathered with a camera that captures pictures of the parts the robot needs to identify. The test cases must be set up manually by placing parts in many different configurations, and the resulting images must be manually annotated before they can be used for training. The algorithm is then trained to detect the position, dimensions, or other characteristics of the parts in the images. This is a time- and labor-intensive process that must be repeated over many iterations (hundreds, thousands, or even tens of thousands of times) before the ML algorithm becomes robust enough to deploy to a factory.
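To make the idea of labeled input/output pairs concrete, here is a minimal sketch in Python. It is not a production vision pipeline: the feature values (a part's width and height in millimetres, standing in for measurements extracted from an image), the labels "bolt" and "washer", and the nearest-centroid classifier are all assumptions chosen for illustration.

```python
from collections import defaultdict
from math import dist

# Hypothetical hand-annotated training set: (features, label).
# The features stand in for measurements extracted from a camera image,
# here (width_mm, height_mm); the labels are the manual annotations.
TRAINING_DATA = [
    ((8.0, 30.0), "bolt"),
    ((8.5, 32.0), "bolt"),
    ((7.5, 29.0), "bolt"),
    ((16.0, 2.0), "washer"),
    ((15.0, 1.5), "washer"),
    ((17.0, 2.5), "washer"),
]


def train(examples):
    """Compute one centroid (mean feature vector) per label."""
    grouped = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: tuple(sum(axis) / len(axis) for axis in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


centroids = train(TRAINING_DATA)
print(predict(centroids, (8.2, 31.0)))   # an unseen, bolt-like part
print(predict(centroids, (16.5, 2.0)))   # an unseen, washer-like part
```

Every row in `TRAINING_DATA` represents one manually staged and annotated example, which is why the effort grows so quickly as more part types and configurations are added.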

Supervised learning works best for well-defined, simple tasks such as detecting objects in images. While SL is widely used today, it falls behind other solutions on more complex tasks or those that require a high degree of adaptability. For applications that are not so simple or well-defined, it’s time to turn to RL.

Unlike SL, RL doesn’t require labeled input/output pairs or manually annotated images. Instead, RL learns by trial and error, analogous to the way infants learn. Under the control of an RL algorithm, the robot explores its environment, trying different actions that result in different outcomes. The goal in RL is specified by a reward function that rewards or penalizes the robot depending on its performance with respect to the desired goal.
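The trial-and-error loop described above can be sketched with tabular Q-learning, one common RL method. This is a toy illustration, not the method used on any particular robot: the six-position linear axis, the goal position, the reward values, and the hyperparameters are all assumptions.

```python
import random

N_POSITIONS = 6      # positions 0..5 along a hypothetical linear axis
GOAL = 5             # target position for the gripper
ACTIONS = (-1, +1)   # move one step left or one step right


def reward(position):
    """Reward function: +1 at the goal, a small penalty for any other step."""
    return 1.0 if position == GOAL else -0.01


def step(position, action):
    """Apply an action, clamping the position to the axis limits."""
    new_position = min(max(position + action, 0), N_POSITIONS - 1)
    return new_position, reward(new_position)


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by repeated trial and error."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_POSITIONS) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the length of each episode
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore a random action
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
            next_state, r = step(state, action)
            # The goal is terminal, so it contributes no future value.
            best_next = 0.0 if next_state == GOAL else max(
                q[(next_state, a)] for a in ACTIONS
            )
            q[(state, action)] += alpha * (r + gamma * best_next - q[(state, action)])
            state = next_state
            if state == GOAL:
                break
    return q


q_table = train()
# Greedy policy: the best-valued action at each non-goal position.
policy = {s: max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in range(GOAL)}
print(policy)
```

No labeled examples appear anywhere in this sketch. The only guidance the agent receives is the number returned by `reward`, and the learned policy emerges from the attempts themselves.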

Reinforcement learning addresses a wider range of complex problems with less human work in the training process than SL. And in the event of a process change, RL can learn the new process more quickly by building upon existing training to reach a new solution. RL’s adaptability and compatibility with retraining are especially important for visual sensing where the robot must identify any number of arbitrary shapes from a camera image to keep up with iterations in part design or changes in the product the robot is handling. The ability to learn shapes is vital in the advancing field of human-like and self-learning robotics, granting a robot freedom and adaptability akin to human workers in its ability to deal with design changes and unexpected situations.

Over the past few years, RL has become increasingly popular due to its success in addressing challenging sequential decision-making problems. Several of these achievements are due to the combination of RL with deep learning techniques. However, deep RL algorithms often require millions of attempts before learning to solve a task, so applying this approach to training robots in the real world presents several challenges, some shared with SL, which also requires a vast quantity of data. Firstly, and common to both types of algorithm, generating such a large volume of sample data is costly and time-consuming. Secondly, it can take months or even years to fully train an RL algorithm, likely causing damage to the robot, equipment, and product in the process. Finally, expensive robots are offline during training, so they cannot be used in production.
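A rough back-of-the-envelope calculation shows how millions of attempts turn into months of robot time. The figures below (two million attempts at ten seconds each) are assumptions for illustration only, not measurements from a real cell.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def training_days(attempts, seconds_per_attempt, robots=1):
    """Wall-clock days needed if attempts are split evenly across robots."""
    return attempts * seconds_per_attempt / robots / SECONDS_PER_DAY


# Assumed figures: 2 million attempts, 10 seconds per attempt.
print(round(training_days(2_000_000, 10), 1))            # 231.5 days on one robot
print(round(training_days(2_000_000, 10, robots=4), 1))  # 57.9 days on four robots
```

Even with four robots running around the clock, this assumed workload keeps each of them out of production for nearly two months.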

SL and RL excel in different areas, presenting various benefits for different applications, but both share many of the same challenges in training and implementation. To truly enable the next generation of artificial intelligence to control robots with human-like precision, better training and validation tools are required. In the next part of this series, we’ll look at exactly those emerging tools for better, faster, and smarter robotics.

To read more about SL and RL algorithms, check out these blogs: Part 1, Part 2.

Siemens Digital Industries Software is driving transformation to enable a digital enterprise where engineering, manufacturing and electronics design meet tomorrow. Xcelerator, the comprehensive and integrated portfolio of software and services from Siemens Digital Industries Software, helps companies of all sizes create and leverage a comprehensive digital twin that provides organizations with new insights, opportunities and levels of automation to drive innovation.

For more information on Siemens Digital Industries Software products and services, visit siemens.com/software or follow us on LinkedIn, Twitter, Facebook and Instagram.

Siemens Digital Industries Software – Where today meets tomorrow.