A new artificial intelligence model revealed using MRI technology

For years, researchers have been probing and scanning the human brain to learn how to model artificial intelligence networks. The hope is that learning what is going on in the brain will spark an idea that leads to artificial models that think like a human. For the last few years, scientists have turned to a fantastic invention called functional magnetic resonance imaging (fMRI) to study the brain in real time. An fMRI machine measures the changes in blood flow that happen when the brain is actively thinking about something.

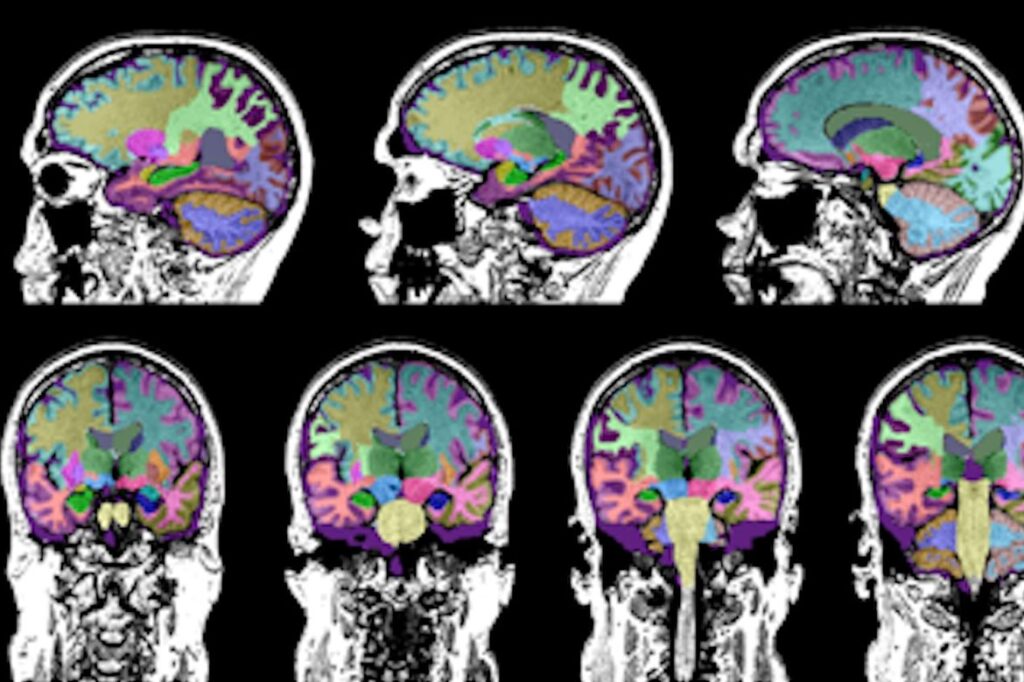

fMRI machines are typically used to research and diagnose brain disorders. But fMRI technology is also great for understanding which areas of the brain “light up” in response to a given stimulus. That is why you see stories claiming that area “XYZ” of the brain is used to detect odors, for example. Unfortunately, it is not that simple: researchers have found that even the simplest human tasks can stimulate a complex set of neurons that touch multiple areas of the brain. And the role of memory within this process is even less understood.

I came across a paper from a team in Canada that reports on a novel method of using MRI data to construct brain connectivity patterns in an artificial neural network (ANN). The ANN was trained to perform a task that required memory access. Instead of using fMRI technology, the team relied on MRI technology, which has less fidelity. I found this curious because many folks in the AI community seem to think that a new brain scanning technique will be required to achieve the fidelity needed to see actual neural networks.

The team accessed 66 MRI scans of young, healthy adults, acquired using a Siemens Healthineers MRI machine at a university. Our colleagues at Siemens Healthineers have several fascinating solutions in this space. The participants were not asked to think about any tasks during the scan. It turns out that the scans, which used diffusion-weighted imaging techniques, held enough information for the team to identify more than 1,000 connection nodes. They then used a connectome mapping toolkit to build a model of the structural connectivity between regions of the brain, and created an algorithm to model the intrinsic network for a memory task. The goal was to determine how the “wiring” of the brain's neural network enables specific cognitive skills involving memory, and to apply that knowledge to their ANN. After running tests and experiments, they determined that their ANN was more flexible and efficient on cognitive memory tasks than the other architectures in their benchmark. In that sense, linking human brain structure to the ANN was a success.
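To make the idea of testing “wiring” on a memory task a little more concrete, here is a minimal sketch. It is not the team's code. It assumes an echo state network (reservoir computing) as the architecture, which this post does not confirm, and it uses a random symmetric matrix as a stand-in for a real connectome. Every name in it (`make_reservoir`, `run_reservoir`, `memory_score`) is hypothetical. The wiring stays fixed, and only a linear readout is trained to recall an input the network saw a few steps earlier.

```python
import numpy as np


def make_reservoir(n_nodes=100, density=0.1, spectral_radius=0.9, seed=0):
    """Random symmetric wiring; a stand-in (assumption) for a real connectome."""
    rng = np.random.default_rng(seed)
    mask = rng.random((n_nodes, n_nodes)) < density
    weights = rng.normal(size=(n_nodes, n_nodes)) * mask
    # Structural connectivity is undirected, so make the matrix symmetric.
    weights = (weights + weights.T) / 2
    # Rescale so the largest absolute eigenvalue equals spectral_radius.
    radius = np.max(np.abs(np.linalg.eigvalsh(weights)))
    return weights * (spectral_radius / radius)


def run_reservoir(weights, w_in, signal):
    """Drive the fixed network with a 1-D signal and record every state."""
    state = np.zeros(weights.shape[0])
    states = np.zeros((len(signal), weights.shape[0]))
    for t, u in enumerate(signal):
        state = np.tanh(weights @ state + w_in * u)
        states[t] = state
    return states


def memory_score(states, signal, delay, washout=100, train_fraction=0.7,
                 ridge=1e-6):
    """Squared correlation between the readout and the input `delay` steps ago.

    Requires delay <= washout so the delayed target exists for every sample.
    """
    features = states[washout:]
    targets = signal[washout - delay:len(signal) - delay]
    split = int(train_fraction * len(features))
    x_train, y_train = features[:split], targets[:split]
    x_test, y_test = features[split:], targets[split:]
    # Ridge regression: the readout is the only trained part of the model.
    gram = x_train.T @ x_train + ridge * np.eye(x_train.shape[1])
    readout = np.linalg.solve(gram, x_train.T @ y_train)
    prediction = x_test @ readout
    return float(np.corrcoef(prediction, y_test)[0, 1] ** 2)


if __name__ == "__main__":
    rng = np.random.default_rng(42)
    wiring = make_reservoir()
    signal = rng.uniform(-1, 1, 2000)
    w_in = rng.uniform(-0.1, 0.1, wiring.shape[0])
    states = run_reservoir(wiring, w_in, signal)
    for delay in (1, 5, 30):
        print(delay, memory_score(states, signal, delay))
```

Swapping the random matrix for a connectivity matrix derived from real scans would be the step that ties a sketch like this to brain data. Comparing scores across different wirings is one simple way to ask whether a particular structure helps with memory.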

If you could take a peek at one of my bookshelves, you would see an eclectic mix of books covering neuroscience, artificial intelligence, and science fiction. While I applaud this team’s statement that they have bridged the neuroscience and AI domains with their impressive project, that connection has always been there. The search for how human intelligence works in order to translate that into a computational representation is the foundation of AI. I am not yet convinced that a truly intelligent machine will be based on the human brain though. With no proof, my gut feeling is that some hybrid architecture will be required for that. We shall see.

If you are looking for deep details and the supporting math, search for the team’s paper on this topic called “Learning function from structure in neuromorphic networks.”

At Siemens, we are constantly exploring how to apply AI to our tools and solutions. Listen to my podcasts here to learn more. At the foundation of any AI project is the neuron. If you want to learn more about how neural networks are modeled, check out my blog here.