Explore the Future of Engineering at the Simcenter Conference 2019

This year’s Simcenter Conference will focus on the “future of engineering,” and on how engineers need to move beyond traditional simulation and embrace a future in which multi-disciplinary engineering helps to create precise digital twins that will drive the next generation of product innovation.

I attended both of last year’s Simcenter Conferences (and, before that, the annual STAR Conferences stretching back 25 years or more). Attending these conferences is always a “reality check” moment for me, and I am always staggered by how the use of simulation tools evolves from conference to conference. However, although technology evolves rapidly, ambition stays the same.

I always make a point of asking Simcenter customers about their “grand challenges,” trying to understand what it is that keeps them up at night thinking: “if I could just _________, that would be a real game changer for my business!”

Regardless of which industry they come from, their answers are often quite similar: mostly they want bigger, more comprehensive simulations that account for more of the product they are trying to simulate.

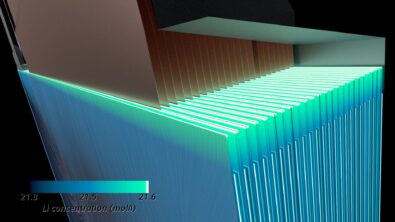

You see, an uncomfortable truth about modern engineering is that there really are no easy problems left to solve. To meet the demands of industry, it’s no longer good enough to do “a bit of CFD” or “some stress analysis.” Complex industrial problems require solutions that span a multitude of physical phenomena, which often can only be solved using simulation techniques that cross several engineering disciplines. Simulations also need to be validated using experiments and tests.

What our customers are asking for is the ability to “see the big picture.” Simulating whole systems rather than just individual components, taking account of all the factors that are likely to influence the performance of their product in its operational life. In short, to simulate the performance of their design in the context that it will be used. My point here is that our simulation tools, and the infrastructure that surrounds them, are now mature enough that we can begin to see the bigger picture and include more of the physical factors that will influence the real-world performance of a design.

Of course, I do not deny that our modeling ambitions are often constrained by a range of practical considerations. Although the principal concern is one of accuracy, modeling choices are often dictated as much by economic constraints as by the veracity of the prediction alone. Most modern engineers are acutely aware that, to influence the design process, simulation results need to be delivered on time, every time. With access to limited simulation and computing resources, simulation engineers are often forced to ask, “How much of the problem can I afford to simulate?”

There are other constraints. Historically, engineers have tended to align themselves strictly along disciplinary lines: the fluids guys do CFD, the stress guys do FEA, the chemical guys do all sorts of other stuff that no one else understands. Getting individual engineers to talk to each other is often as much of a challenge as interfacing the individual software tools.

It should also go without saying that including complexity for the sake of it is not “good engineering” either. Part of the art of engineering is in deciding exactly how much complexity can be excluded through modeling assumptions, without reducing the overall quality of the prediction. In fact, at its purest, engineering might be described as the “art of simplification through modeling approximation,” rendering intractable physics problems into neatly packaged engineering solutions. By making the correct modeling assumptions, you can accurately predict how an abstract design will perform under a range of real-world operating conditions. Make the wrong assumptions and your simulation results will either be a poor representation of the real-life performance of your design, or your model will be so complicated that you won’t get any results at all.

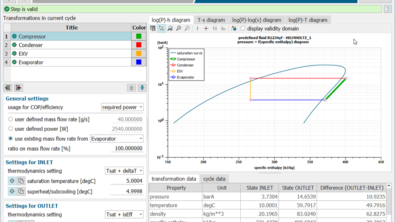

At the Simcenter Conference, we will, with the aid of real-world examples, explore how Simcenter can help you to “see the big picture” by simulating entire systems rather than individual components. We’ll be talking about co-simulation and the many ways in which you can couple our software with other simulation tools. We’ll also address the question of “modeling fidelity” by demonstrating how certain parts of the problem can be modeled at lower resolutions (in either space or time) without affecting the accuracy of the overall prediction. Finally, we’ll be discussing the economics of simulating large and complex systems and exploring how our licensing models allow you to exploit all your available computing resources in the most cost-effective manner.

For more information on how to register or present at the Simcenter Conference 2019, please visit:

http://www.cvent.com/events/siemens-plm-software-2019-simcenter-conference/